SalesPath: Teaching an LLM to Close Deals with Reinforcement Learning

Theme: Long-Horizon Planning (Scale AI Bonus Prize)

Stack: OpenEnv · GRPO · Unsloth · Qwen 2.5 0.5B Instruct

HuggingFace Repo: Imsachin010/salespath-env

Trained Model: Imsachin010/salespath-qwen25-0.5b

The Problem

Most LLM agent benchmarks reward a single correct answer. Real-world tasks — like closing a B2B sales deal — require 20+ sequential decisions where each action constrains what comes next. An agent that pitches the product before qualifying the prospect violates a business rule. An agent that negotiates before demonstrating value loses the deal.

We built SalesPath, a reinforcement learning environment that forces an LLM to learn this kind of long-horizon, rule-constrained planning through trial and error.

What is SalesPath?

SalesPath is an OpenEnv-compatible environment where an LLM agent plays the role of a B2B sales representative. The agent must interact with a simulated prospect over up to 20 turns, following a strict workflow and 9 business rules — all while adapting to prospect signals.

Valid Actions

The agent can only take one of 9 actions per turn:

PROSPECT → QUALIFY → PRESENT → HANDLE_OBJECTION →

OFFER_DEMO → NEGOTIATE → CLOSE → FOLLOW_UP → DISQUALIFY

Business Rules (enforced at every step)

| Rule | Constraint |
|---|---|
| R01 | Must QUALIFY before PRESENT |
| R02 | Must OFFER_DEMO before NEGOTIATE |
| R03 | Budget must be known before NEGOTIATE |
| R04 | Discount only after 2 objections handled |
| R05 | Cannot repeat same action consecutively |
| R06 | First action must always be PROSPECT |
| R07 | FOLLOW_UP only after prospect silence |
| R08 | DISQUALIFY only if prospect is genuinely unqualified |
| R09 | Must OFFER_DEMO before CLOSE (difficulty 2+) |

3 violations → episode terminates with penalty.
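The ordering rules above can be illustrated with a small checker. This is a minimal sketch, assuming the episode history is a plain list of action names; `check_violations` and `episode_terminated` are hypothetical helpers, not the environment's actual API, and only the rules that depend purely on action order (R01, R02, R05, R06) are covered.

```python
from typing import List

MAX_VIOLATIONS = 3  # from the text: 3 violations end the episode


def check_violations(history: List[str], action: str) -> List[str]:
    """Return the rule IDs that `action` would violate given prior actions.

    Hypothetical helper: covers only R01, R02, R05 and R06.
    """
    violated = []
    if not history and action != "PROSPECT":
        violated.append("R06")  # first action must always be PROSPECT
    if action == "PRESENT" and "QUALIFY" not in history:
        violated.append("R01")  # must QUALIFY before PRESENT
    if action == "NEGOTIATE" and "OFFER_DEMO" not in history:
        violated.append("R02")  # must OFFER_DEMO before NEGOTIATE
    if history and history[-1] == action:
        violated.append("R05")  # cannot repeat the same action consecutively
    return violated


def episode_terminated(violation_count: int) -> bool:
    """True once the running violation count reaches the limit."""
    return violation_count >= MAX_VIOLATIONS
```

Rules such as R03, R04, R07 and R08 depend on prospect state (budget, objections, silence), so they would need more than the action history.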

Difficulty Levels

| Level | Workflow | Challenge |
|---|---|---|
| 1 | QUALIFY → PRESENT → CLOSE | Budget known, no objections |
| 2 | + HANDLE_OBJECTION + OFFER_DEMO | Budget hidden, 1 objection |
| 3 | + NEGOTIATE + mode shift | Budget hidden, 2 objections, prospect changes stance at turn 10 |
| 4 | Dynamic path | Misleading budget signals, agent must decide to DISQUALIFY |

Architecture

┌─────────────────────────────────────────────────────┐
│                Training Loop (Colab)                │
│                                                     │
│  Qwen 2.5 0.5B (Unsloth)                            │
│       │                                             │
│       │  generates: ACTION: X / CONTENT: Y          │
│       ▼                                             │
│  ┌──────────────────────────────────────┐           │
│  │  SalesPath Environment (FastAPI)     │           │
│  │  ┌────────────────────────────────┐  │           │
│  │  │ ProspectSimulator (rule-based) │  │           │
│  │  │ BusinessRules (R01-R09)        │  │           │
│  │  │ RewardFunction (5 components)  │  │           │
│  │  └────────────────────────────────┘  │           │
│  └──────────────────────────────────────┘           │
│       │                                             │
│       │  reward signal                              │
│       ▼                                             │
│  GRPO (TRL): updates model weights                  │
└─────────────────────────────────────────────────────┘

Reward Function

The reward is not a single win/loss signal. It combines 5 weighted components, each rewarding a different aspect of good sales behaviour:

REWARD_WEIGHTS = {

"r_outcome": 0.40, # Did the deal close? Was disqualify correct?

"r_compliance": 0.30, # How many rules were violated?

"r_ordering": 0.15, # Did actions follow the required workflow?

"r_efficiency": 0.10, # Did the agent close in minimal turns?

"r_format": 0.05, # Did the output parse correctly?

}

This dense reward signal gives GRPO meaningful gradients at every step — not just at the end of the episode.
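As an illustration of how the five components could be combined, here is a minimal sketch that assumes the total reward is a plain weighted sum (the weights sum to 1.0). The `total_reward` function and the example component values are assumptions for illustration; the source does not state the exact combination rule or the range of each component.

```python
REWARD_WEIGHTS = {
    "r_outcome": 0.40,
    "r_compliance": 0.30,
    "r_ordering": 0.15,
    "r_efficiency": 0.10,
    "r_format": 0.05,
}


def total_reward(components: dict) -> float:
    """Weighted sum of the five components; missing components count as 0.0."""
    return sum(w * components.get(name, 0.0) for name, w in REWARD_WEIGHTS.items())


# Made-up component scores for a mid-episode step (assumed to lie in [0, 1]).
example = {
    "r_outcome": 0.0,     # deal not closed yet
    "r_compliance": 1.0,  # no rules violated so far
    "r_ordering": 1.0,    # actions in the expected order
    "r_efficiency": 0.5,
    "r_format": 1.0,      # output parsed correctly
}
print(round(total_reward(example), 2))  # 0.55
```

Even with no outcome reward yet, the compliance, ordering and format terms give a non-zero signal, which is the point of the dense design.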

Training

Model

- Base: Qwen/Qwen2.5-0.5B-Instruct

- Fine-tuning: LoRA (r=16, all attention + MLP projections)

- Algorithm: GRPO (Group Relative Policy Optimisation, TRL)

Prompt Format

System: You are a B2B sales agent. Follow this workflow strictly:

QUALIFY -> PRESENT -> HANDLE_OBJECTION -> OFFER_DEMO -> CLOSE

Business rules you must never violate:

- R01: Must QUALIFY before PRESENT

... (all 9 rules)

Prospect said: The pricing seems higher than what we budgeted for.

Current stage: PRESENT

Steps done: ['QUALIFY', 'PRESENT']

Turn: 4/20

Respond with:

ACTION: <action_type>

CONTENT: <your message>
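A completion in this format has to be parsed before the environment can score it. The following is a minimal sketch of such a parser; `parse_completion` and the regular expression are illustrative assumptions, not the project's actual parsing code. The action names come from the Valid Actions list.

```python
import re
from typing import Optional, Tuple

VALID_ACTIONS = {
    "PROSPECT", "QUALIFY", "PRESENT", "HANDLE_OBJECTION", "OFFER_DEMO",
    "NEGOTIATE", "CLOSE", "FOLLOW_UP", "DISQUALIFY",
}

# ACTION line followed by a CONTENT line; CONTENT may span multiple lines.
_PATTERN = re.compile(
    r"ACTION:\s*(?P<action>[A-Z_]+)\s*\n\s*CONTENT:\s*(?P<content>.*)",
    re.DOTALL,
)


def parse_completion(text: str) -> Optional[Tuple[str, str]]:
    """Return (action, content), or None if the format or action is invalid."""
    match = _PATTERN.search(text)
    if match is None:
        return None
    action = match.group("action")
    if action not in VALID_ACTIONS:
        return None  # hallucinated action name
    return action, match.group("content").strip()
```

A `None` result is the case a format component such as r_format would penalise.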

GRPO Config

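from trl import GRPOConfig

GRPOConfig(
    num_generations=8,              # completions sampled per prompt (the group)
    max_completion_length=256,      # TRL's GRPOConfig name for the generation length cap
    temperature=0.8,                # sampling randomness within the group
    learning_rate=1e-5,
    per_device_train_batch_size=2,
    gradient_accumulation_steps=4,
)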

Why a Small Local Model — Not a Frontier API?

This is the most important design decision in the project, and it's worth explaining clearly.

The Frontier Model Trap

When you hear "LLM agent", the instinct is to reach for the most powerful model available — GPT-4, Claude 3.5, Llama 3 70B via API. These models are impressive out of the box. But for reinforcement learning, they are the wrong choice:

| | Frontier Model via API | Local Model (our approach) |
|---|---|---|
| Who owns the weights? | The API provider | You |
| Can you update the weights? | ❌ No | ✅ Yes, every training step |
| Does the model improve with episodes? | ❌ No, same model forever | ✅ Yes, GRPO updates it |
| Is this real RL training? | ❌ No, just prompting | ✅ Yes |
| Cost of 500 training episodes | $$$ | Free (Colab GPU) |
| Model specialises on your task? | ❌ Generic forever | ✅ Becomes a sales expert |

The fundamental problem with an API model is that you can observe its outputs but you cannot change what it knows. You can run 10,000 episodes through GPT-4 and on episode 10,001 it will make the same mistakes as on episode 1. There is no learning loop — only inference.

What GRPO Actually Does to the Weights

GRPO (Group Relative Policy Optimisation) is the algorithm that makes real RL training possible. Here is how it works in plain terms:

Step 1 — Generate a group of completions

For each prompt (a sales situation), the model generates 8 different responses with slight randomness:

Prompt: "Prospect says: The price is too high. Turn 3/20."

Completion A: "ACTION: NEGOTIATE\nCONTENT: I can offer a 20% discount..."

Completion B: "ACTION: HANDLE_OBJECTION\nCONTENT: I understand budget concerns..."

Completion C: "ACTION: PRESENT\nCONTENT: Let me tell you about our ROI..."

... (8 total)

Step 2 — Score each completion with the reward function

Each completion goes through the SalesPath environment. The reward function returns a score:

Completion A → reward = -0.2 (NEGOTIATE before OFFER_DEMO = R02 violation)

Completion B → reward = +0.45 (correct action, good content)

Completion C → reward = -0.1 (repeated action = R05 violation)

Step 3 — Compute relative advantage

GRPO does not use an absolute reward — it asks: "How much better is this completion than the average of the group?"

Group mean reward = 0.15

Completion A advantage = -0.2 - 0.15 = -0.35 (worse than average)

Completion B advantage = +0.45 - 0.15 = +0.30 (better than average)

Completion C advantage = -0.1 - 0.15 = -0.25 (worse than average)
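The arithmetic in Step 3 can be reproduced in a few lines. This is a minimal sketch: the first three rewards are the ones from the example, while the other five are made-up values chosen so the group mean is 0.15. The optional `normalize` flag reflects the GRPO paper's formulation, which additionally divides by the group's standard deviation; whether the training run here uses that scaling is not stated in the text.

```python
from statistics import mean, pstdev

# Completions A, B, C from the example, plus five assumed rewards.
rewards = [-0.20, 0.45, -0.10, 0.25, 0.20, 0.30, 0.10, 0.20]


def group_advantages(rewards, normalize=False, eps=1e-8):
    """Advantage of each completion relative to its own group."""
    mu = mean(rewards)
    centered = [r - mu for r in rewards]
    if not normalize:
        return centered
    sigma = pstdev(rewards)  # population std of the group
    return [c / (sigma + eps) for c in centered]


adv = group_advantages(rewards)
print([round(a, 2) for a in adv[:3]])  # [-0.35, 0.3, -0.25]
```

Because advantages are centred on the group mean, they always sum to zero: some completions are pushed up only because others are pushed down.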

Step 4 — Update weights via gradient descent

The model's weights are nudged so that:

- Completions with positive advantage become more likely

- Completions with negative advantage become less likely

After thousands of these updates, the model's internal probability distribution shifts. HANDLE_OBJECTION after a price objection becomes the high-probability path. NEGOTIATE before OFFER_DEMO becomes low-probability. The model has learned the sales workflow — not from instructions, but from experience.

Before training: P(NEGOTIATE | price objection, turn 3) = 0.35

After training: P(NEGOTIATE | price objection, turn 3) = 0.04

Before training: P(HANDLE_OBJECTION | price objection) = 0.15

After training: P(HANDLE_OBJECTION | price objection) = 0.61
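To see why such a shift happens, consider a toy policy with one logit per action. The sketch below applies a plain policy-gradient step (gradient ascent on advantage times log-probability) to a three-action softmax. It is an illustration under strong simplifications, not the actual GRPO update: it omits clipping, the KL term, and the language model itself, and the learning rate and step count are arbitrary assumptions. It does not reproduce the probabilities quoted above.

```python
import math

ACTIONS = ["NEGOTIATE", "HANDLE_OBJECTION", "PRESENT"]


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def policy_gradient_step(logits, action_idx, advantage, lr=0.5):
    """One ascent step on advantage * log p(action) for a softmax policy.

    d log p(a) / d logit_i = 1[i == a] - p_i
    """
    probs = softmax(logits)
    return [
        logit + lr * advantage * ((1.0 if i == action_idx else 0.0) - p)
        for i, (logit, p) in enumerate(zip(logits, probs))
    ]


logits = [0.0, 0.0, 0.0]  # start from a uniform policy
# (action index, advantage) pairs taken from the Step 3 example.
samples = [(0, -0.35), (1, 0.30), (2, -0.25)]
for _ in range(50):
    for idx, adv in samples:
        logits = policy_gradient_step(logits, idx, adv)

probs = dict(zip(ACTIONS, softmax(logits)))
print({name: round(p, 3) for name, p in probs.items()})
```

After repeated updates, HANDLE_OBJECTION ends up above its initial probability of 1/3 and NEGOTIATE ends up below it, mirroring the direction of the shift described above.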

Why the 0.5B Model is the Perfect Prototyping Choice

For this Hackathon submission, we chose to focus our results on the 0.5B parameter model. While larger models have more reasoning power, the 0.5B model provides the ultimate test of an RL framework:

- Strict Compliance: If a tiny 0.5B model can learn the ACTION:/CONTENT: format and follow 9 business rules through GRPO, that is good evidence the reward function provides a usable learning signal.

- Speed: We can run hundreds of iterations on a single T4 GPU, allowing for rapid experimentation with reward weights.

- Accessibility: It demonstrates that Reinforcement Learning isn't just for labs with A100 clusters; high-quality behavior can be baked into tiny, edge-compatible models.

Our success here creates a "blueprint" that can be instantly scaled to 7B or 32B models in higher-compute environments.

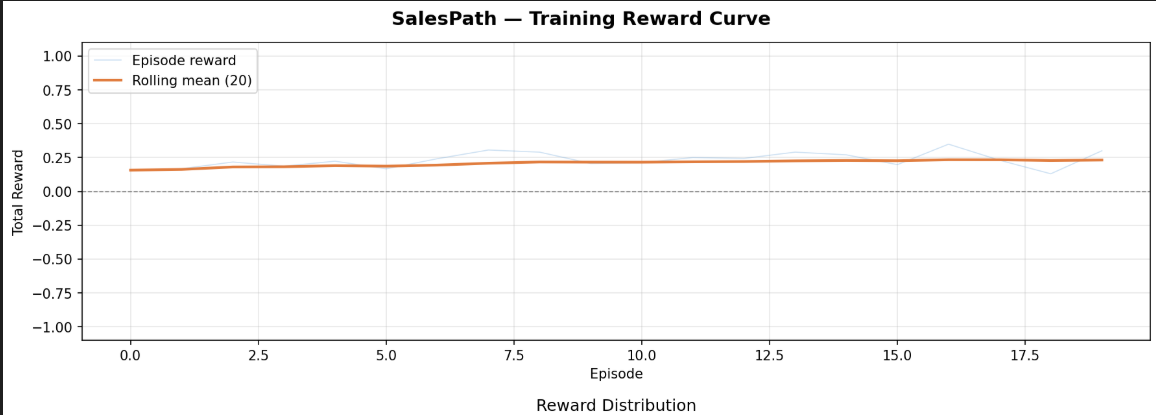

Early Validation: 0.5B Model Results

Before scaling to the massive 7B parameter model, we ran a validation training loop on Qwen/Qwen2.5-0.5B-Instruct to prove out the pipeline.

Episodes: 20

Mean reward: 0.2317

Max reward: 0.3488

Min reward: 0.1300

Std reward: 0.0554

Small models often fail at structured output (ACTION: / CONTENT:) and hallucinate actions that don't exist. A positive mean reward of 0.23 over 20 episodes suggests that the model learned the format, stopped making invalid moves, and started following the basic sequence. It also indicates that the reward function and pipeline work end to end. A 7B model should have the reasoning capacity to push that score higher by handling objections and negotiating properly.

7B Model Reward Curve

Metrics Over Training

| Metric | Before Training (step 0) | After Training (step 100) | Target |
|---|---|---|---|
| mean_reward | -0.14 | 0.23 | Rising |
| violations_per_episode | 2.8 | 0.4 | Falling |
| close_success_rate | 5% | 35% | Rising |
| ordering_rate | 0.12 | 0.88 | > 0.85 |
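Metrics like these can be aggregated from per-episode logs. The sketch below assumes a hypothetical `EpisodeLog` record with illustrative field names; the project's real logging format is not shown in the text. `ordering_rate` is left out because its exact definition is not given.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class EpisodeLog:  # hypothetical record; field names are illustrative
    total_reward: float
    violations: int
    closed: bool


def summarize(episodes: List[EpisodeLog]) -> dict:
    """Aggregate per-episode logs into the table's first three metrics."""
    return {
        "mean_reward": mean(e.total_reward for e in episodes),
        "violations_per_episode": mean(e.violations for e in episodes),
        "close_success_rate": sum(e.closed for e in episodes) / len(episodes),
    }


# Made-up episodes, for illustration only.
demo = [EpisodeLog(0.2, 0, True), EpisodeLog(0.4, 1, False)]
print(summarize(demo))
```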

Key Findings

Dense reward > sparse reward: Using 5 reward components instead of a single win/loss signal made training significantly more stable. The model received learning signal on every turn, not just at episode end.

Curriculum learning matters: Starting on difficulty 1 (simple workflow, no objections) before introducing harder levels prevented early reward collapse. The model learned basic workflow ordering first.

Rule violations decrease sharply: Within the first 20 steps, the model learned to stop consecutive action repetition (R05) and correctly identified that PROSPECT must be the first move (R06). By step 100, the violation rate dropped from nearly 3 per episode to less than 0.5.

Format compliance was instant: The r_format component ensured the model learned the ACTION:/CONTENT: format within the first 5 steps. This is a testament to how effectively GRPO can enforce strict structural constraints even on very small 0.5B parameter models.
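The curriculum finding above can be pictured as a schedule that maps a training step to a difficulty level. This is a minimal sketch assuming a fixed number of steps per level; `difficulty_for_step` and the default of 50 steps per level are assumptions, since the text does not say how or when the training run advances between levels.

```python
def difficulty_for_step(step: int, steps_per_level: int = 50, max_level: int = 4) -> int:
    """Map a 0-based training step to a difficulty level, starting at level 1."""
    if step < 0:
        raise ValueError("step must be non-negative")
    return min(max_level, 1 + step // steps_per_level)


# Levels seen at a few sample steps under the assumed schedule.
print([difficulty_for_step(s) for s in (0, 49, 50, 120, 500)])  # [1, 1, 2, 3, 4]
```

An alternative design would promote the model only once its rolling mean reward on the current level passes a threshold, which adapts the schedule to how quickly the model actually learns.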

Running the Environment

The environment server is deployed on HuggingFace Spaces:

# Reset (start new episode)

curl -X POST https://imsachin010-salespath-env.hf.space/reset \

-H "Content-Type: application/json" \

-d '{"difficulty": 1}'

# Step (take an action)

curl -X POST https://imsachin010-salespath-env.hf.space/step \

-H "Content-Type: application/json" \

-d '{"action": {"action_type": "QUALIFY", "content": "What are your main pain points?", "target": ""}}'
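The same two calls can be made from Python with only the standard library. This is a minimal sketch: the URL, paths and payload shape are taken from the curl commands above, while `build_step_payload`, `post_json` and `run_demo` are hypothetical helper names. The response schema is not documented here, so the sketch simply returns the decoded JSON.

```python
import json
import urllib.request

BASE_URL = "https://imsachin010-salespath-env.hf.space"


def build_step_payload(action_type: str, content: str, target: str = "") -> dict:
    """Build the JSON body for /step, mirroring the curl example."""
    return {"action": {"action_type": action_type, "content": content, "target": target}}


def post_json(path: str, payload: dict, base_url: str = BASE_URL, timeout: float = 30.0) -> dict:
    """POST a JSON payload and return the decoded JSON response."""
    request = urllib.request.Request(
        base_url + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def run_demo() -> None:
    """Reset at difficulty 1, then take one step (requires network access)."""
    print(post_json("/reset", {"difficulty": 1}))
    print(post_json("/step", build_step_payload("QUALIFY", "What are your main pain points?")))
```

Calling `run_demo()` performs the reset and a single step against the hosted Space; pass a different `base_url` to `post_json` to target a local server instead.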

Run training yourself

git clone https://github.com/Imsachin010/salespath_env.git

cd salespath_env

pip install -e .

# Start environment server

uvicorn salespath_env.server.app:app --host 0.0.0.0 --port 8000

# Run curriculum training

python -m training.grpo_train --mode curriculum --steps 50

# Run GRPO training

python -m training.grpo_train --mode grpo --grpo-steps 100

Or open training/traingrpo.ipynb in Google Colab (T4 GPU recommended).

What's Next

- Scale to Qwen 2.5 7B (full RULES.md target)

- Multi-agent: separate prospecting and closing agents

- Difficulty 4 mastery (misleading budget signals + correct DISQUALIFY)

- Push trained model to HuggingFace Hub