Use Docker images

docker run --gpus all \
  --shm-size 32g \
  -p 30000:30000 \
  -v ~/.cache/huggingface:/root/.cache/huggingface \
  --env "HF_TOKEN=<secret>" \
  --ipc=host \
  lmsysorg/sglang:latest \
  python3 -m sglang.launch_server \
    --model-path "nics-efc/VPR-Tic-Tac-Toe" \
    --host 0.0.0.0 \
    --port 30000

Call the server using curl (OpenAI-compatible API):

curl -X POST "http://localhost:30000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "nics-efc/VPR-Tic-Tac-Toe",
    "messages": [
      {
        "role": "user",
        "content": "What is the capital of France?"
      }
    ]
  }'

VPR-Tic-Tac-Toe

🌐 Project Page | 📝 Paper | 💻 Code

Model Description

This is the Tic-Tac-Toe-trained checkpoint from VPR: Verifiable Process Rewards for Agentic Reasoning, initialized from Qwen3-4B.

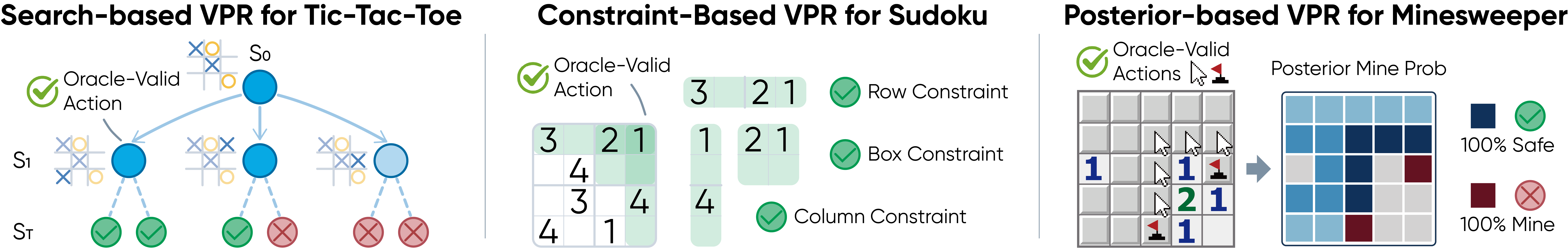

VPR turns verifiable oracles into dense turn-level rewards for long-horizon agentic reasoning. This checkpoint is trained with search-based VPR on Tic-Tac-Toe, where MCTS lookahead identifies strategically optimal moves and provides turn-level feedback.

Overview

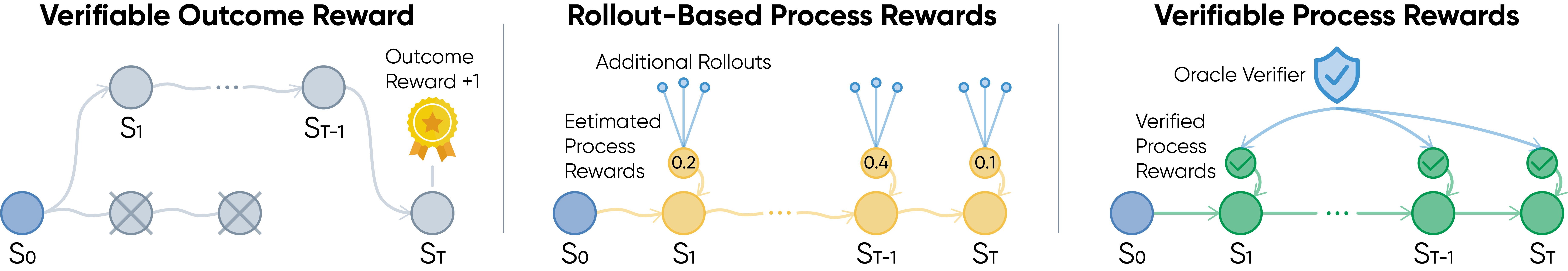

Reinforcement learning from verifiable rewards usually rewards only final success. In long-horizon agentic tasks, this creates a credit assignment problem: a trajectory may fail after many correct steps, or succeed despite flawed intermediate decisions.

VPR studies densely verifiable agentic reasoning problems, where each intermediate action can be checked by a task-specific oracle. Instead of learning a noisy process reward model or estimating step values through extra rollouts, VPR uses the task structure itself to provide reliable turn-level supervision.
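The contrast between outcome-only and turn-level supervision can be illustrated with a small sketch. Everything here is an assumption made for illustration: the function names, the `toy_oracle`, and the +1/-1 reward scale are hypothetical and are not taken from the VPR code base.

```python
from typing import Callable, List, Sequence, Tuple

State = str
Action = str
Step = Tuple[State, Action]


def outcome_rewards(trajectory: Sequence[Step], success: bool) -> List[float]:
    """Sparse scheme: only the final turn carries any signal (assumed +1/-1)."""
    if not trajectory:
        return []
    rewards = [0.0] * len(trajectory)
    rewards[-1] = 1.0 if success else -1.0
    return rewards


def turn_level_rewards(
    trajectory: Sequence[Step],
    oracle: Callable[[State, Action], float],
) -> List[float]:
    """Dense scheme: every (state, action) pair is scored by an oracle."""
    return [oracle(state, action) for state, action in trajectory]


def toy_oracle(state: State, action: Action) -> float:
    """Hypothetical oracle that simply knows which actions are correct."""
    return 1.0 if action == "good" else -1.0


# Two correct turns followed by one mistake that loses the episode.
trajectory = [("s0", "good"), ("s1", "good"), ("s2", "bad")]

print(outcome_rewards(trajectory, success=False))  # [0.0, 0.0, -1.0]
print(turn_level_rewards(trajectory, toy_oracle))  # [1.0, 1.0, -1.0]
```

Under the sparse scheme the two correct turns receive no credit, while the dense scheme marks exactly which turn went wrong.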

Method

VPR converts sparse trajectory-level feedback into dense process rewards:

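$$r_t^{\mathrm{VPR}} = V(s_t, a_t)$$

where $s_t$ is the state at turn $t$, $a_t$ is the action taken at that turn, and $V$ is the task-specific verifier.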

For Tic-Tac-Toe, VPR uses a search-based verifier. MCTS lookahead evaluates legal moves and rewards strategically strong actions according to the estimated search value.
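A minimal sketch of what a search-based verifier could look like for this game. It rests on several assumptions that should be read loudly: exhaustive minimax stands in for MCTS (feasible only because Tic-Tac-Toe is tiny), rewards are the game values +1/0/-1 from the mover's point of view, and all names (`move_rewards`, `value`, and so on) are hypothetical rather than taken from the project repository.

```python
from functools import lru_cache
from typing import Dict, List, Optional, Tuple

Board = Tuple[str, ...]  # 9 cells in row-major order: "X", "O", or " "

LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def winner(board: Board) -> Optional[str]:
    """Return "X" or "O" if a line is complete, else None."""
    for a, b, c in LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


def other(player: str) -> str:
    return "O" if player == "X" else "X"


def legal_moves(board: Board) -> List[int]:
    return [i for i, cell in enumerate(board) if cell == " "]


def play(board: Board, cell: int, player: str) -> Board:
    return board[:cell] + (player,) + board[cell + 1:]


@lru_cache(maxsize=None)
def value(board: Board, to_move: str) -> int:
    """Exact game value for the player to move: +1 win, 0 draw, -1 loss."""
    w = winner(board)
    if w is not None:
        return 1 if w == to_move else -1
    if " " not in board:
        return 0
    return max(-value(play(board, i, to_move), other(to_move))
               for i in legal_moves(board))


def move_rewards(board: Board, player: str) -> Dict[int, int]:
    """Score every legal move by the value of the position it leads to."""
    return {i: -value(play(board, i, player), other(player))
            for i in legal_moves(board)}


# X to move with two in a row; O also threatens the middle row.
board = ("X", "X", " ",
         "O", "O", " ",
         " ", " ", " ")
print(move_rewards(board, "X"))
```

In this position the sketch assigns the highest reward to completing the top row, a neutral reward to blocking O, and the lowest reward to every move that lets O win, which is the kind of turn-level signal the paragraph above describes. An MCTS verifier would replace the exact `value` with an estimated search value.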

Results

In-Domain Tic-Tac-Toe

Scores are average returns against a strong MCTS opponent, reported separately for playing first and playing second.

| Method | First Player | Second Player |
|---|---|---|
| Base | -0.31 | -0.35 |
| OR | -0.18 | -0.21 |
| MC-PR | -0.11 | -0.20 |
| VPR | -0.09 | -0.11 |

Zero-Shot Transfer

| Benchmark group | Metric | VPR-Tic-Tac-Toe |
|---|---|---|
| General reasoning | Average pass@1 | 62.16 |
| General reasoning | AIME24 | 33.33 |
| General reasoning | AIME25 | 21.33 |
| General reasoning | BBH | 88.96 |
| Agentic reasoning | ALFWorld success rate | 27.34 |
| Agentic reasoning | WebShop score | 30.88 |
| Agentic reasoning | WebShop success rate | 1.83 |

Usage

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "nics-efc/VPR-Tic-Tac-Toe"

tokenizer = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    torch_dtype="auto",
    device_map="auto",
    trust_remote_code=True,
)

messages = [
    {"role": "user", "content": "Solve this step by step: If a train travels 180 miles in 3 hours, what is its average speed?"}
]

text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
    enable_thinking=True,
)

inputs = tokenizer([text], return_tensors="pt").to(model.device)
outputs = model.generate(**inputs, max_new_tokens=1024)
print(tokenizer.decode(outputs[0], skip_special_tokens=True))
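With `enable_thinking=True`, Qwen3-style chat templates typically have the model write its reasoning inside `<think>...</think>` before the final answer. The helper below is a hedged sketch for separating the two in decoded text. It assumes this checkpoint keeps that tag format and that the tags survive decoding; `split_thinking` is a hypothetical name, not part of `transformers`.

```python
from typing import Tuple


def split_thinking(decoded: str, close_tag: str = "</think>") -> Tuple[str, str]:
    """Split decoded text into (reasoning, answer).

    If no closing tag is present, the whole text is treated as the answer.
    """
    head, sep, tail = decoded.rpartition(close_tag)
    if not sep:
        return "", decoded.strip()
    # Keep only what follows the last opening tag, dropping any echoed prompt.
    reasoning = head.split("<think>")[-1].strip()
    return reasoning, tail.strip()


sample = "<think>\n180 / 3 = 60\n</think>\n\nThe average speed is 60 mph."
reasoning, answer = split_thinking(sample)
print(reasoning)  # 180 / 3 = 60
print(answer)     # The average speed is 60 mph.
```

Because `generate` returns the prompt tokens followed by the new tokens, decoding `outputs[0][inputs["input_ids"].shape[1]:]` instead of `outputs[0]` is a common way to drop the echoed prompt before passing the text to a helper like this.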

Intended Use

This checkpoint is intended for research on verifiable rewards, process supervision, reinforcement learning for LLM agents, and transfer from game-like agentic training environments to broader reasoning tasks.

The released checkpoint contains the trained language model. Environment simulators, verifiers, and training code are provided in the project repository.

Citation

If you find this model helpful, please cite:

@misc{yuan2026verifiable,
  title={Verifiable Process Rewards for Agentic Reasoning},
  author={Huining Yuan and Zelai Xu and Huaijie Wang and Xiangmin Yi and Jiaxuan Gao and Xiao-Ping Zhang and Yu Wang and Chao Yu and Yi Wu},
  year={2026},
  eprint={2605.10325},
  archivePrefix={arXiv},
  primaryClass={cs.AI},
  url={https://arxiv.org/abs/2605.10325}
}


Install from pip and serve model

Install SGLang from pip:

pip install sglang

Start the SGLang server:

python3 -m sglang.launch_server \
  --model-path "nics-efc/VPR-Tic-Tac-Toe" \
  --host 0.0.0.0 \
  --port 30000

Call the server using curl (OpenAI-compatible API):

curl -X POST "http://localhost:30000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "nics-efc/VPR-Tic-Tac-Toe",
    "messages": [
      {
        "role": "user",
        "content": "What is the capital of France?"
      }
    ]
  }'
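The same chat completion request can also be sent from Python. This is a minimal sketch using only the standard library; it assumes the server is reachable at `http://localhost:30000` as in the commands above and that the response follows the usual OpenAI chat completion shape (`choices[0].message.content`). The helper names `build_chat_request` and `chat` are hypothetical.

```python
import json
import urllib.request


def build_chat_request(
    prompt: str,
    model: str = "nics-efc/VPR-Tic-Tac-Toe",
    base_url: str = "http://localhost:30000",
) -> urllib.request.Request:
    """Build a POST request equivalent to the curl call."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, **kwargs) -> str:
    """Send the request and return the assistant message text.

    Requires a running server; the response layout is an assumption.
    """
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    print(chat("What is the capital of France?"))
```

Keeping request construction separate from the network call makes the payload easy to inspect without a running server.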