---

license: apache-2.0
language:
  - en
base_model:
  - Qwen/Qwen3-4B
pipeline_tag: text-generation
library_name: transformers
tags:
  - qwen3
  - reinforcement-learning
  - verifiable-rewards
  - process-rewards
  - agentic-reasoning
  - reasoning
  - tic-tac-toe
arxiv: 2605.10325

---

# VPR-Tic-Tac-Toe

[**🌐 Project Page**](https://thu-nics.github.io/VPR/) | [**📝 Paper**](https://arxiv.org/abs/2605.10325) | [**💻 Code**](https://github.com/thu-nics/VPR)

## Model Description

This is the Tic-Tac-Toe-trained checkpoint from **VPR: Verifiable Process Rewards for Agentic Reasoning**, initialized from Qwen3-4B.

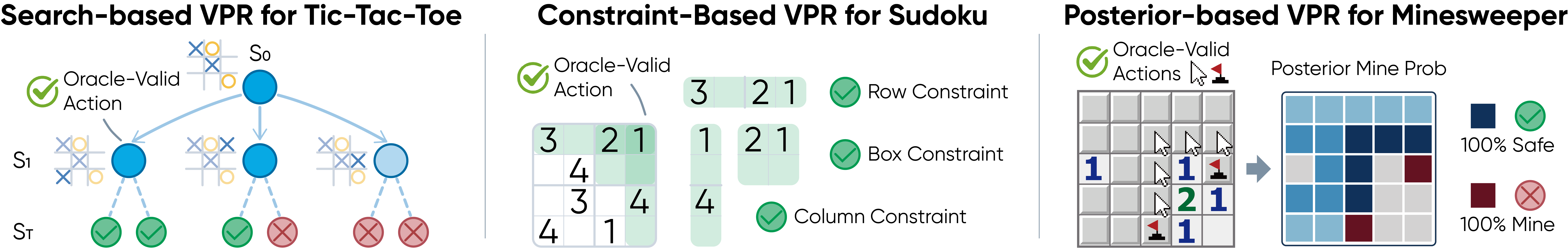

VPR turns verifiable oracles into dense turn-level rewards for long-horizon agentic reasoning. This checkpoint is trained with **search-based VPR** on Tic-Tac-Toe, where Monte Carlo tree search (MCTS) lookahead identifies strategically optimal moves and provides turn-level feedback.

## Overview

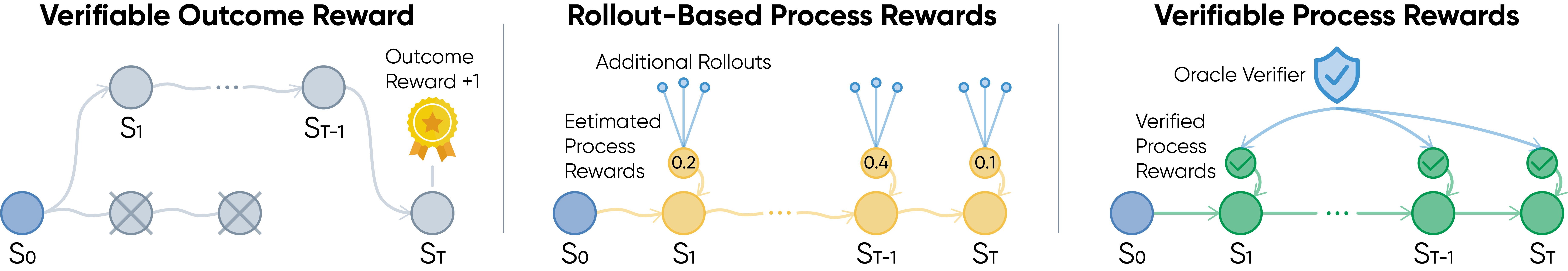

Reinforcement learning from verifiable rewards usually rewards only final success. In long-horizon agentic tasks, this creates a credit assignment problem: a trajectory may fail after many correct steps, or succeed despite flawed intermediate decisions.

VPR studies densely verifiable agentic reasoning problems, where each intermediate action can be checked by a task-specific oracle. Instead of learning a noisy process reward model or estimating step values through extra rollouts, VPR uses the task structure itself to provide reliable turn-level supervision.

## Method

VPR converts sparse trajectory-level feedback into dense process rewards:

```text
r_t^VPR = V(s_t, a_t)
```

For Tic-Tac-Toe, VPR uses a search-based verifier. MCTS lookahead evaluates legal moves and rewards strategically strong actions according to the estimated search value.
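To make the idea of a turn-level verifier concrete, here is a minimal sketch under stated assumptions. It swaps the MCTS lookahead for exact minimax search, which is feasible because Tic-Tac-Toe is tiny, and it uses a binary "optimal move or not" reward. The board encoding, the function names (`state_value`, `action_value`, `turn_reward`), and the binary reward shape are all hypothetical illustrations, not the verifier from the project repository.

```python
from functools import lru_cache

# All eight winning lines on a 3x3 board indexed 0..8, row by row.
WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
             (0, 3, 6), (1, 4, 7), (2, 5, 8),
             (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return 'X' or 'O' if that player has three in a row, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != "." and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def state_value(board, player):
    """Exact value (+1 win, 0 draw, -1 loss) for `player`, who is to move.

    Assumption: exact minimax stands in for the MCTS estimate in the text.
    """
    w = winner(board)
    if w is not None:
        return 1 if w == player else -1
    if "." not in board:
        return 0
    opponent = "O" if player == "X" else "X"
    return max(-state_value(board[:i] + player + board[i + 1:], opponent)
               for i, cell in enumerate(board) if cell == ".")


def action_value(board, player, move):
    """Value of playing `move` on `board`, from `player`'s perspective."""
    opponent = "O" if player == "X" else "X"
    return -state_value(board[:move] + player + board[move + 1:], opponent)


def turn_reward(board, player, move):
    """Hypothetical turn-level reward: 1.0 for a value-optimal legal move, else 0.0."""
    if not (0 <= move < 9) or board[move] != ".":
        return 0.0  # illegal moves earn nothing in this sketch
    legal = [i for i, cell in enumerate(board) if cell == "."]
    best = max(action_value(board, player, m) for m in legal)
    return 1.0 if action_value(board, player, move) == best else 0.0
```

Boards are 9-character strings with `.` for empty cells, so `turn_reward("XX.OO....", "X", 2)` scores the move that completes the top row. A search-value reward as described above could instead return `action_value` directly rather than thresholding it.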

## Results

### In-Domain Tic-Tac-Toe

Results are reported as average return against a strong MCTS opponent, with the model playing as either the first or the second player.

| Method | First Player | Second Player |
|---|---:|---:|
| Base | -0.31 | -0.35 |
| OR | -0.18 | -0.21 |
| MC-PR | -0.11 | -0.20 |
| **VPR** | **-0.09** | **-0.11** |
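As a reading aid for the table, the sketch below shows how an average return could be computed from game outcomes. It assumes a per-game return of +1 for a win, 0 for a draw, and -1 for a loss; that convention and the helper name `average_return` are assumptions for illustration and are not stated in this card.

```python
def average_return(outcomes):
    """Mean return over games, assuming win=+1, draw=0, loss=-1 (assumed convention)."""
    score = {"win": 1.0, "draw": 0.0, "loss": -1.0}
    if not outcomes:
        raise ValueError("need at least one game outcome")
    return sum(score[o] for o in outcomes) / len(outcomes)


# Illustrative only: 91 draws and 9 losses out of 100 games.
example = ["draw"] * 91 + ["loss"] * 9
print(average_return(example))
```

Under that assumed convention, a value near zero means the model mostly draws against the opponent, and more negative values mean it loses more often.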

### Zero-Shot Transfer

| Benchmark group | Metric | VPR-Tic-Tac-Toe |
|---|---|---:|
| General reasoning | Average pass@1 | **62.16** |
| General reasoning | AIME24 | **33.33** |
| General reasoning | AIME25 | **21.33** |
| General reasoning | BBH | **88.96** |
| Agentic reasoning | ALFWorld success rate | 27.34 |
| Agentic reasoning | WebShop score | 30.88 |
| Agentic reasoning | WebShop success rate | 1.83 |

## Usage

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "nics-efc/VPR-Tic-Tac-Toe"

# Load the tokenizer and model; dtype and device placement are chosen automatically.
tokenizer = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    torch_dtype="auto",
    device_map="auto",
    trust_remote_code=True,
)

messages = [
    {"role": "user", "content": "Solve this step by step: If a train travels 180 miles in 3 hours, what is its average speed?"}
]

# Build the chat prompt with thinking mode enabled.
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
    enable_thinking=True,
)

inputs = tokenizer([text], return_tensors="pt").to(model.device)
outputs = model.generate(**inputs, max_new_tokens=1024)

# Prints the prompt followed by the generated continuation.
print(tokenizer.decode(outputs[0], skip_special_tokens=True))
```
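With `enable_thinking=True`, the generated text typically contains a reasoning segment before the final answer. The helper below is a small sketch for separating the two in a decoded string. It assumes the `<think>` and `</think>` markers survive decoding, which may depend on the tokenizer configuration; `split_thinking` is a hypothetical name, not part of the `transformers` API.

```python
def split_thinking(decoded, close_tag="</think>"):
    """Split decoded text into (reasoning, answer) around the last closing tag.

    If no closing tag is present, the reasoning part is empty and the
    whole string is treated as the answer.
    """
    head, sep, tail = decoded.rpartition(close_tag)
    if not sep:
        return "", decoded.strip()
    # Keep only what follows the first opening tag, dropping any prompt text.
    reasoning = head.split("<think>", 1)[-1].strip()
    return reasoning, tail.strip()


reasoning, answer = split_thinking("<think>\n180 / 3 = 60\n</think>\n\n60 mph")
print(answer)
```

The same call can be applied to the string returned by `tokenizer.decode(...)` in the snippet above.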

## Intended Use

This checkpoint is intended for research on verifiable rewards, process supervision, reinforcement learning for LLM agents, and transfer from game-like agentic training environments to broader reasoning tasks.

The released checkpoint contains the trained language model. Environment simulators, verifiers, and training code are provided in the project repository.

## Citation

If you find this model helpful, please cite:

```bibtex
@misc{yuan2026verifiable,
  title={Verifiable Process Rewards for Agentic Reasoning},
  author={Huining Yuan and Zelai Xu and Huaijie Wang and Xiangmin Yi and Jiaxuan Gao and Xiao-Ping Zhang and Yu Wang and Chao Yu and Yi Wu},
  year={2026},
  eprint={2605.10325},
  archivePrefix={arXiv},
  primaryClass={cs.AI},
  url={https://arxiv.org/abs/2605.10325}
}
```
