FinBERT AI Detector for Financial Texts

This model is a fine-tuned version of yiyanghkust/finbert-pretrain designed specifically to detect AI-generated text in financial documents, such as corporate annual reports (e.g., 10-K filings).

The model is used in the following working paper:

Perlin, Marcelo and Foguesatto, Cristian and Karagrigoriou Galanos, Aliki and Affonso, Felipe, The use of AI in 10-K Filings: An Empirical Analysis of S&P 500 Reports (January 21, 2026). Available at SSRN: https://ssrn.com/abstract=6108946 or http://dx.doi.org/10.2139/ssrn.6108946

Model Details

- Base Model: yiyanghkust/finbert-pretrain

- Task: Sequence Classification (Binary: Human vs. AI-Generated)

- Language: English

- License: MIT

Training Data and Method

The model was trained on a custom dataset compiled from human-written financial texts (derived from SEC annual reports) and AI-generated equivalents.

- Data Generation: Actual human texts from corporate annual reports were compiled. State-of-the-art Large Language Models (LLMs), including OpenAI's ChatGPT, Google's Gemini, and Anthropic's Claude, were then prompted to rewrite these sections or generate similar artificial financial texts.

- Training Method: The base finbert-pretrain model, already pre-trained on a large corpus of financial text, was fine-tuned on this mixed dataset to classify whether a given segment of text is human-written or AI-generated.

Performance

- Total cases (AI & Human): 6000

- Total cases (AI): 3000

- Estimation cases: 4200

- Test cases: 1800

| Metric | Value |
|---|---|
| Accuracy | 89.16% |
| F1 | 88.57% |
| Precision | 92.64% |
| Recall | 84.84% |

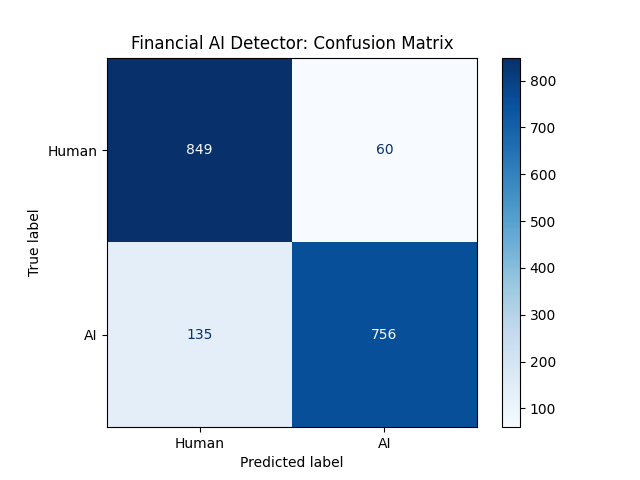

Confusion Matrix
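As a quick consistency check on the reported metrics, F1 is the harmonic mean of precision and recall, so it can be recomputed from the two reported values. The snippet below is only a sketch of that arithmetic; the variable names are illustrative, and it uses nothing beyond the figures reported in this section.

```python
# Reported test-set metrics (as fractions), copied from this section.
precision = 0.9264
recall = 0.8484

# F1 is the harmonic mean of precision and recall.
f1 = 2 * precision * recall / (precision + recall)

# Share of the 6000 cases used for estimation versus testing.
estimation_share = 4200 / 6000
test_share = 1800 / 6000

print(f"F1 recomputed: {f1:.2%}")
print(f"Estimation/test split: {estimation_share:.0%} / {test_share:.0%}")
```

The recomputed F1 rounds to the reported 88.57%, and the case counts correspond to a 70/30 split between estimation and test data.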

Uses

This model is intended for researchers, financial analysts, and auditors who want to verify the authenticity of corporate disclosures and determine whether a financial text (such as an annual report or an earnings call transcript) was written by an AI or a human.

How to Get Started with the Model

There are two ways to use this model: through a Python package that handles the model internally, or through the standard transformers library (the default on Hugging Face).

Using the Python package finbert-ai-detector

Install locally using pip:

pip install finbert-ai-detector

Run it with the following code:

from finbert_ai_detector import FinbertAIDetector

# Initialize the detector (downloads the model if not cached)

detector = FinbertAIDetector()

# Example text

text = "The Tax Cuts and Jobs Act enacted in 2017 in the United States, significantly changed the tax rules applicable to U.S.-domiciled corporations. Changes such as lower corporate tax rates, full expensing for qualified property, taxation of offshore earnings, limitations on interest expense deductions, and changes to the municipal bond tax exemption may impact demand for our products and services."

# Predict a single text

result = detector.predict(text)

print(f"Prediction: {result['label']}")

print(f"AI Probability: {result['ai_probability']:.2%}")

Using the transformers library

Here is a quick example of how to make predictions using Python and PyTorch:

import torch

from transformers import AutoTokenizer, AutoModelForSequenceClassification

# Load the model and tokenizer

model_id = "msperlin/finbert-ai-detector"

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForSequenceClassification.from_pretrained(model_id).to(device)

model.eval()

# Sample text to test

text = "The Tax Cuts and Jobs Act enacted in 2017 in the United States, significantly changed the tax rules applicable to U.S.-domiciled corporations. Changes such as lower corporate tax rates, full expensing for qualified property, taxation of offshore earnings, limitations on interest expense deductions, and changes to the municipal bond tax exemption may impact demand for our products and services."

# Preprocess the input

inputs = tokenizer(
    text,
    return_tensors="pt",
    truncation=True,
    max_length=512,
    padding=True,
).to(device)

# Run inference

with torch.no_grad():
    # Disable gradient tracking during inference
    outputs = model(**inputs)
    probabilities = torch.nn.functional.softmax(outputs.logits, dim=-1)

# Index 1 typically corresponds to "AI-generated" (Verify with model.config.id2label if needed)

prob_ai = probabilities[0][1].item()

print(f"Probability of being AI-generated: {prob_ai:.2%}")
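Because the index of the AI class is an assumption until it is checked, it may be safer to pair each probability with its label name from model.config.id2label instead of hard-coding index 1. The helper below is a minimal sketch: label_probabilities is a hypothetical name, and the label strings in the usage line are placeholders, not the labels the published checkpoint actually uses.

```python
from typing import Dict, Sequence


def label_probabilities(probs: Sequence[float], id2label: Dict[int, str]) -> Dict[str, float]:
    """Map each class probability to its label name.

    `probs` is one row of softmax output as plain floats, for example
    probabilities[0].tolist(); `id2label` is model.config.id2label.
    """
    if len(probs) != len(id2label):
        raise ValueError("number of probabilities must match number of labels")
    return {id2label[i]: float(p) for i, p in enumerate(probs)}


# Placeholder labels for illustration only; inspect model.config.id2label
# to see the names stored with the model.
example = label_probabilities([0.2, 0.8], {0: "human", 1: "ai"})
print(example)
```

With the real model, the call would look like label_probabilities(probabilities[0].tolist(), model.config.id2label).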

Intended Use & Limitations

- Intended Usage: Analyzing formal financial reports, press releases, corporate filings, and similar structured financial disclosures.

- Limitations: The model is optimized specifically for the formal, complex tone of financial documents. Its accuracy may be lower when applied to texts outside the financial domain, such as social media posts, casual emails, news articles, or creative text.

- Length Constraint: The underlying FinBERT architecture has a maximum sequence length of 512 tokens. Longer texts are truncated, so only the first 512 tokens are scored.
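One common way to work around the 512-token limit, which this model card does not itself prescribe, is to split a long document into overlapping token windows, score each window separately, and combine the window-level probabilities. The sketch below shows only the splitting and combining steps in plain Python. The names split_into_windows and weighted_mean_probability are hypothetical helpers, the 510-token window (leaving room for the [CLS] and [SEP] tokens) and the 50-token overlap are assumed defaults, and length-weighted averaging is just one possible aggregation rule.

```python
from typing import List, Sequence


def split_into_windows(token_ids: Sequence[int], max_tokens: int = 510,
                       overlap: int = 50) -> List[List[int]]:
    """Split token ids into overlapping windows of at most max_tokens each."""
    if max_tokens <= 0 or not 0 <= overlap < max_tokens:
        raise ValueError("require max_tokens > 0 and 0 <= overlap < max_tokens")
    if len(token_ids) == 0:
        return []
    step = max_tokens - overlap  # how far each new window advances
    windows: List[List[int]] = []
    start = 0
    while True:
        windows.append(list(token_ids[start:start + max_tokens]))
        if start + max_tokens >= len(token_ids):
            break  # the current window already reaches the end
        start += step
    return windows


def weighted_mean_probability(probs: Sequence[float], lengths: Sequence[int]) -> float:
    """Average window-level AI probabilities, weighting each by window length."""
    total = sum(lengths)
    if total == 0:
        raise ValueError("at least one non-empty window is required")
    return sum(p * n for p, n in zip(probs, lengths)) / total


# Stand-in token ids for a 1200-token document (assumed input).
windows = split_into_windows(list(range(1200)))
print([len(w) for w in windows])  # [510, 510, 280]
```

In practice the token ids could come from tokenizer(text, add_special_tokens=False)["input_ids"], and each window could be converted back to text with tokenizer.decode before being scored as in the transformers example. How well averaged window scores match the single-segment metrics reported in the Performance section is not known.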
