Distilling a Small Utility-Based Passage Selector to Enhance Retrieval-Augmented Generation

📖 Conference Paper | 🤗 Model | 🤗 Annotation Data | 🛠️ GitHub

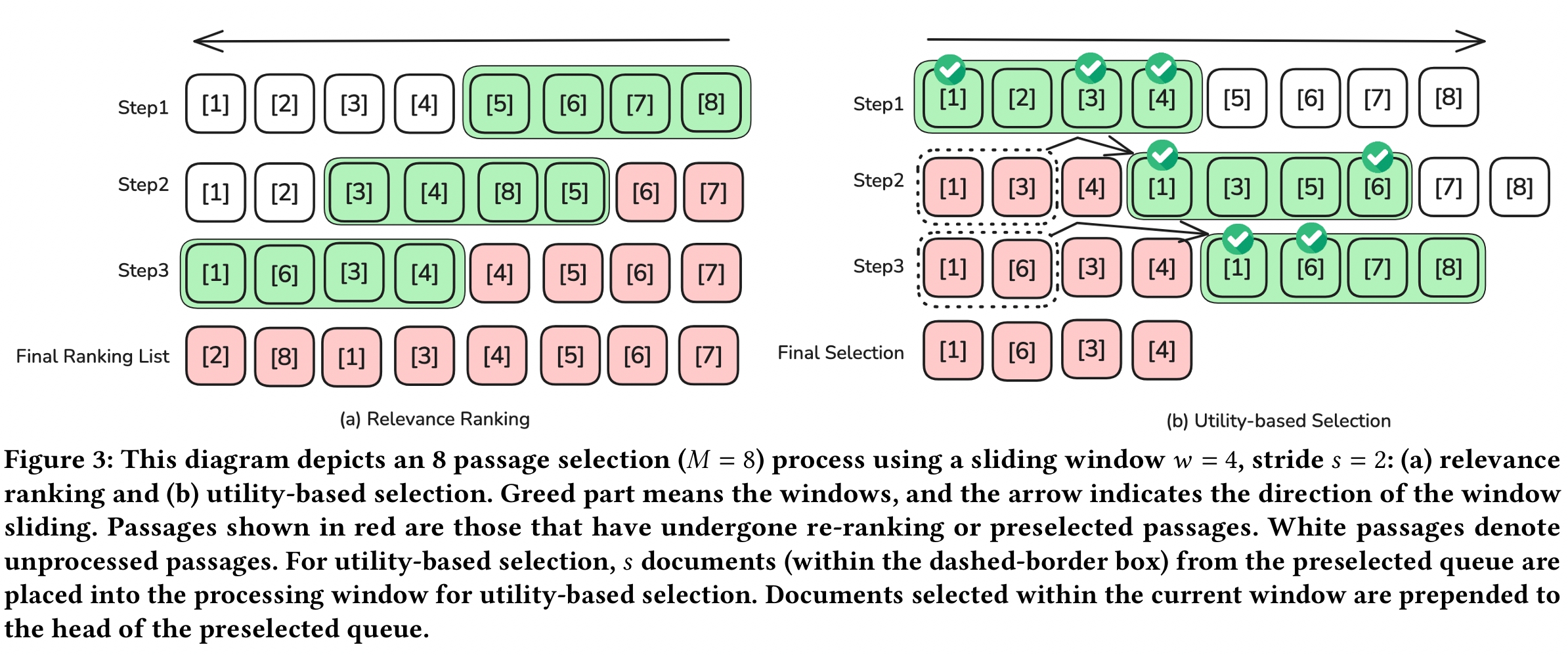

Retrieval-augmented generation (RAG) enhances large language models (LLMs) by incorporating retrieved information. Standard retrieval prioritizes relevance, that is, topical alignment between queries and passages. In RAG, the emphasis shifts to utility, which measures how useful a passage is for generating an accurate answer. Although empirical evidence shows the benefits of utility-based retrieval in RAG, the high computational cost of using LLMs for utility judgments limits the number of passages that can be evaluated. This restriction is problematic for complex queries that require extensive information. To address this, we propose a method to distill the utility judgment capabilities of LLMs into smaller, more efficient models. Our approach focuses on utility-based selection rather than ranking, enabling dynamic passage selection tailored to each query without the need for fixed thresholds. We train student models to learn pseudo-answer generation and utility judgments from teacher LLMs, using a sliding window method that dynamically selects useful passages. Our experiments demonstrate that utility-based selection provides a flexible and cost-effective solution for RAG, significantly reducing computational costs while improving answer quality. We present distillation results using Qwen3-32B as the teacher model for both relevance ranking and utility-based selection, distilled into RankQwen1.7B and UtilityQwen1.7B, respectively. Our findings indicate that for complex questions, utility-based selection is more effective than relevance ranking at improving answer generation. We will release the relevance ranking and utility-based selection annotations for the MS MARCO dataset to support further research in this area.

📦 Utility-Based Selection and Relevance Ranking

Training Dataset

We introduce two sets of annotations, one for utility-based selection and one for relevance ranking, which together form a large-scale LLM-annotated retrieval dataset.

- MS MARCO: About 100K queries

- Annotating LLM: Qwen3-32B

⭐️ Model

- The selector trained on the utility-based selection annotations: UtilityQwen1.7B

- The ranker trained on the relevance ranking annotations: RankQwen1.7B

⭐️ Inference

Both models use the sliding window method. More details can be found on GitHub.
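To illustrate the idea, here is a minimal Python sketch of one plausible sliding-window selection loop. It is not the released implementation: the function `sliding_window_select`, the `judge` callback, and the `new_per_window` parameter are hypothetical names introduced for this example, and the way selected passages are carried into the next window is an assumption. Refer to the GitHub repository for the actual procedure and prompts.

```python
from typing import Callable, List, Sequence

# Hypothetical interface (an assumption, not the released API): given a query
# and a window of candidate passages, return the indices of the passages that
# the selector judges useful.
Judge = Callable[[str, Sequence[str]], List[int]]


def sliding_window_select(
    query: str,
    passages: Sequence[str],
    judge: Judge,
    new_per_window: int = 10,
) -> List[str]:
    """Slide over a ranked passage list, carrying selected passages forward.

    Each window holds the passages selected so far plus the next
    `new_per_window` unseen passages. The judge decides which ones to keep,
    so the size of the final selection is not fixed in advance.
    """
    if new_per_window <= 0:
        raise ValueError("new_per_window must be positive")

    selected: List[str] = []
    for start in range(0, len(passages), new_per_window):
        fresh = list(passages[start:start + new_per_window])
        window = selected + fresh
        kept = judge(query, window)
        # Ignore out-of-range or repeated indices, since a model-backed
        # judge may return noisy output.
        valid = sorted({i for i in kept if 0 <= i < len(window)})
        selected = [window[i] for i in valid]
    return selected


def keyword_judge(query: str, candidates: Sequence[str]) -> List[int]:
    """Toy stand-in for the selector: keep passages containing the last query word."""
    key = query.lower().split()[-1]
    return [i for i, p in enumerate(candidates) if key in p.lower()]


if __name__ == "__main__":
    docs = [
        "Paris is the capital of France.",
        "Berlin is in Germany.",
        "France borders Spain.",
        "Tokyo is in Japan.",
        "The Louvre is in Paris, France.",
    ]
    for passage in sliding_window_select("capital of france", docs, keyword_judge, 2):
        print(passage)
```

In practice, `judge` would wrap a call to UtilityQwen1.7B and parse its output into indices. Because earlier selections are re-judged alongside new passages in this sketch, a passage kept in one window can still be dropped in a later one.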

👋 Citation

If you find our paper and code useful in your research, please cite our paper.

@inproceedings{zhang2025distilling,
  title={Distilling a Small Utility-Based Passage Selector to Enhance Retrieval-Augmented Generation},
  author={Zhang, Hengran and Bi, Keping and Guo, Jiafeng and Zhang, Jiaming and Wang, Shuaiqiang and Yin, Dawei and Cheng, Xueqi},
  booktitle={Proceedings of the 2025 Annual International ACM SIGIR Conference on Research and Development in Information Retrieval in the Asia Pacific Region},
  pages={22--30},
  year={2025}
}