Naming notice (2026-04-10). The "PolarQuant" technique used in this model is being rebranded to HLWQ (Hadamard-Lloyd Weight Quantization). The change is only the name; the algorithm and the weights in this repository are unchanged.

The rebrand resolves a name collision with an unrelated, earlier KV cache quantization method also named PolarQuant (Han et al., arXiv:2502.02617, 2025). HLWQ addresses weight quantization with a deterministic Walsh-Hadamard rotation and Lloyd-Max scalar codebook; Han et al.'s PolarQuant addresses KV cache quantization with a random polar rotation. The two methods are technically distinct.

Existing loaders that load this repository by ID continue to work without changes. Future model uploads will use the HLWQ name.

Reference paper for this technique: arXiv:2603.29078 (v2 in preparation; v1 still uses the old name).

🧊 Gemma-4-31B-it-PolarQuant-Q5-Vision

Multimodal (Image+Text → Text) on consumer GPUs with PolarQuant.

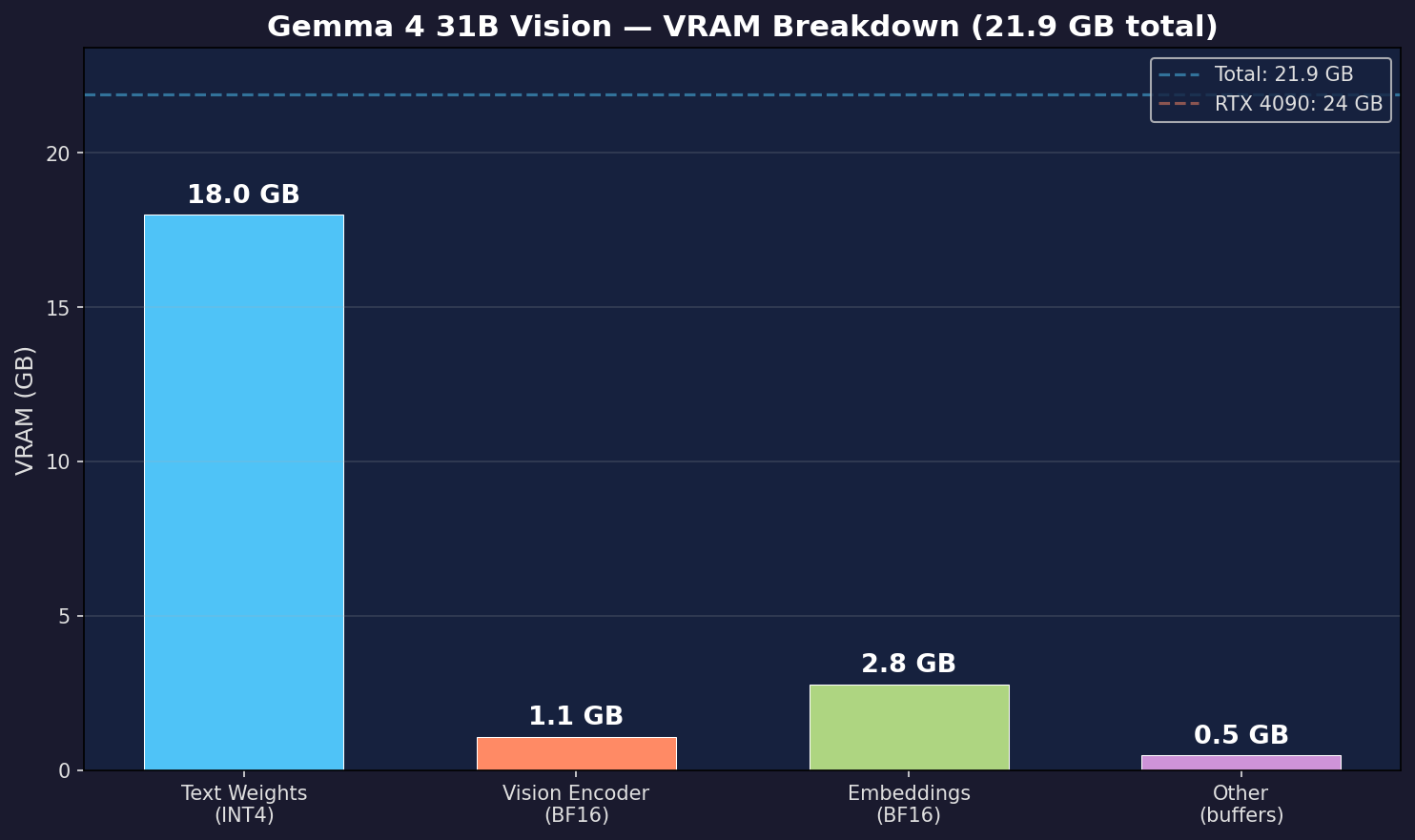

| Component | Method | Result |
|---|---|---|
| Text weights | PolarQuant Q5 + torchao INT4 | ~20 GB |
| Vision encoder | BF16 (full quality) | ~1.1 GB |
| KV Cache | PolarQuant Q3 (5.3x compression) | longer context |

🎯 Key Results

| Metric | Value |

|---|---|

| VRAM | 21.9 GB |

| Speed | 24.9 tok/s |

| Text layers | 412 INT4 |

| Vision layers | 190 BF16 |

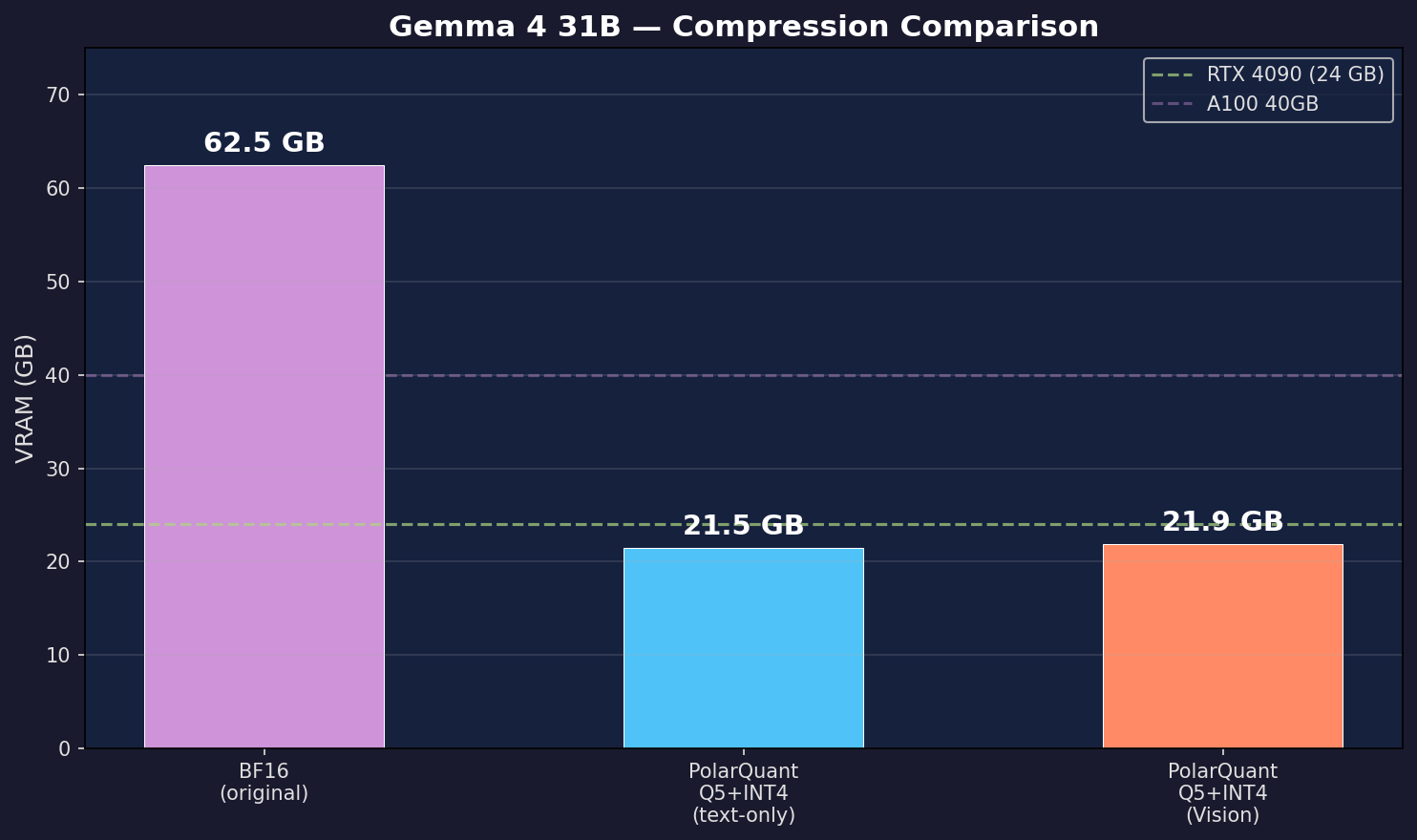

| Compression | BF16 62.5 GB → 21.9 GB (2.9x) |

| Text test | ✅ "2+2 = 4" |

| Vision test | ✅ "Golden Gate Bridge" |


🏆 GPU Support

| GPU | VRAM | Fits? |
|---|---|---|
| RTX 4090 | 24 GB | ✅ Yes |
| RTX 5090 | 32 GB | ✅ Comfortable |
| L4 | 24 GB | ✅ Yes |
| A100 40GB | 40 GB | ✅ Plenty |
| T4 | 16 GB | ❌ Too small |

🚀 Quick Start

```python
from transformers import AutoModelForMultimodalLM, AutoProcessor
import torch

MODEL = "google/gemma-4-31B-it"
processor = AutoProcessor.from_pretrained(MODEL)

# Load with streaming loader (see notebook for full code)
model = AutoModelForMultimodalLM.from_pretrained(
    MODEL, dtype=torch.bfloat16, device_map="cpu",
    attn_implementation="sdpa",
)

# Apply PQ5 dequant + INT4 per-module (text only, vision stays BF16)
# ... see notebook ...

# Image + Text inference
messages = [{
    "role": "user",
    "content": [
        {"type": "image", "url": "https://example.com/image.jpg"},
        {"type": "text", "text": "Describe this image."},
    ]
}]

inputs = processor.apply_chat_template(messages, tokenize=True, return_dict=True,
    return_tensors="pt", add_generation_prompt=True).to("cuda")
output = model.generate(**inputs, max_new_tokens=256)
print(processor.decode(output[0][inputs["input_ids"].shape[1]:], skip_special_tokens=True))
```

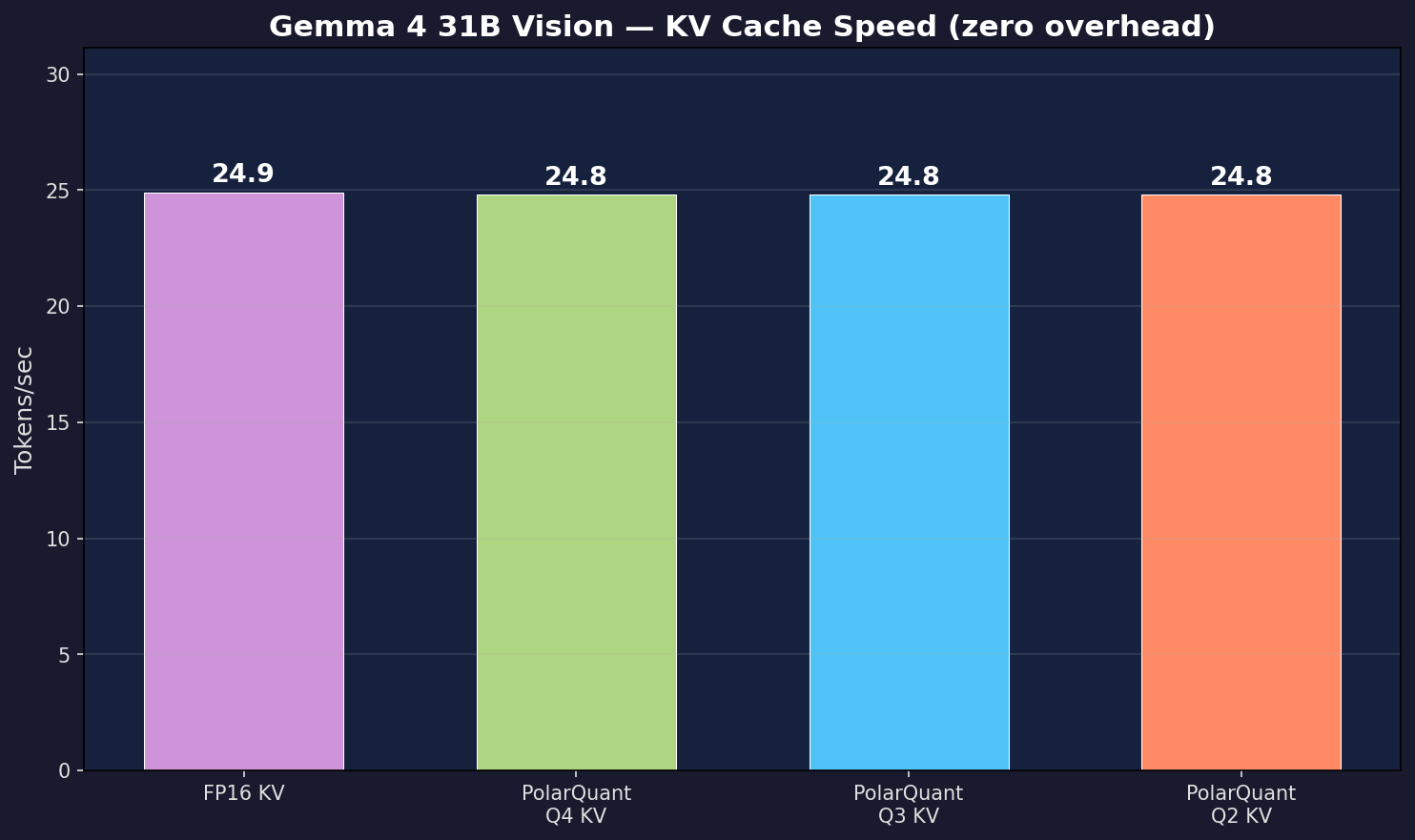

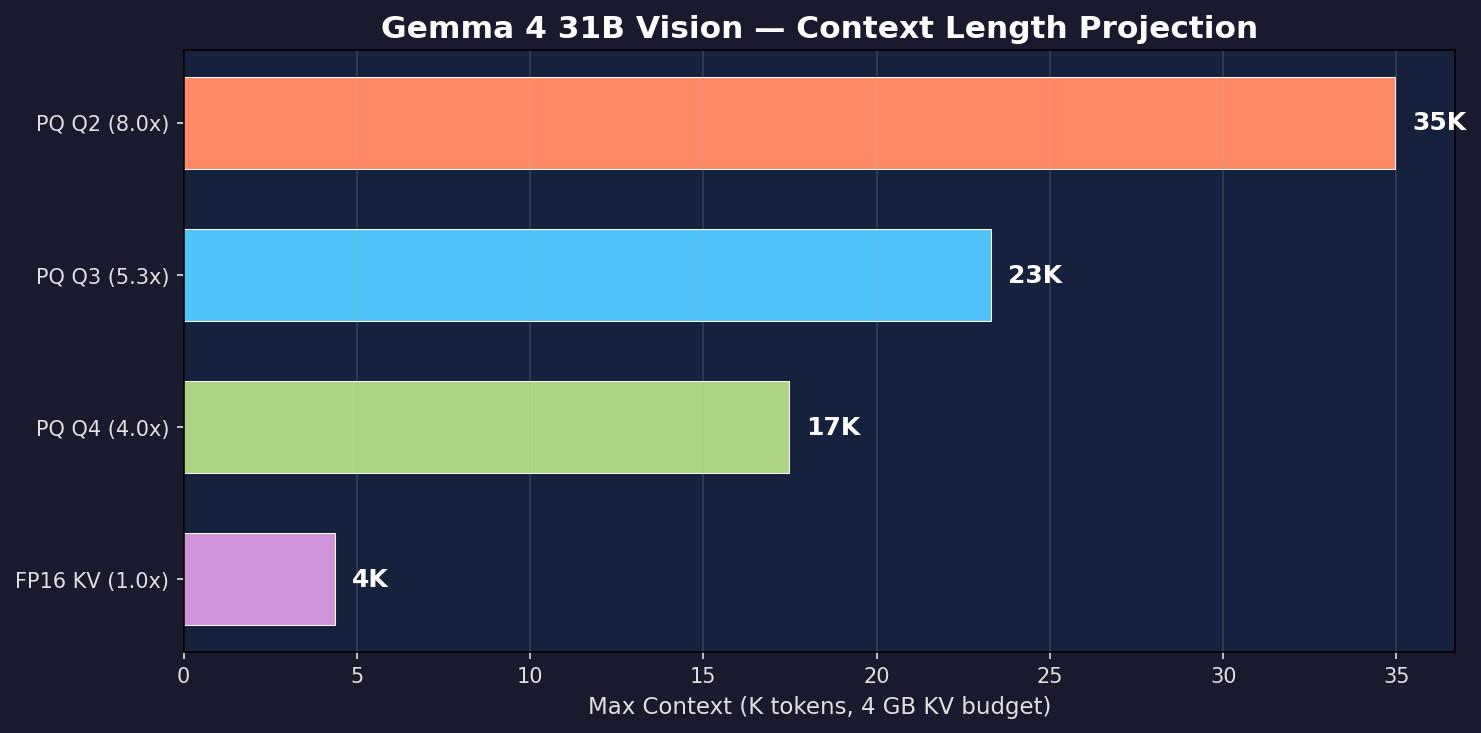

📊 KV Cache Compression

| Method | Bits | Compression | tok/s |
|---|---|---|---|
| FP16 (baseline) | 16 | 1.0x | 24.9 |
| PolarQuant Q4 | 4 | 4.0x | 24.9 |
| PolarQuant Q3 | 3 | 5.3x | 24.9 |
| PolarQuant Q2 | 2 | 8.0x | 24.9 |
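The compression column follows directly from the bit widths (16/4, 16/3, 16/2). As a rough illustration of what that means in memory, the sketch below estimates KV cache size from the architecture figures listed under Technical Details (60 layers, 16 KV heads, head_dim=256). It assumes every layer caches the full context and ignores codebook and scale overhead, so treat it as an upper bound: sliding-window layers would cache less. The function name and the 8192-token context are illustrative choices, not part of this repository.

```python
def kv_cache_bytes(num_tokens, bits, num_layers=60, num_kv_heads=16, head_dim=256):
    """Estimate bytes used by keys and values across all layers.

    Assumption: every layer stores the full context; codebook and
    per-block scale overhead are not counted.
    """
    elements_per_token = 2 * num_layers * num_kv_heads * head_dim  # keys + values
    return num_tokens * elements_per_token * bits / 8


tokens = 8192  # illustrative context length
baseline = kv_cache_bytes(tokens, bits=16)
for bits in (16, 4, 3, 2):
    size = kv_cache_bytes(tokens, bits)
    print(f"{bits:>2}-bit: {size / 2**30:.2f} GiB ({baseline / size:.1f}x smaller than FP16)")
```

Because the ratio depends only on the bit width, the same 5.3x figure for Q3 holds at any context length under these assumptions.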

🔧 Technical Details

- Architecture: Gemma 4 (60 layers, 32 attn heads, 16 KV heads, head_dim=256)
- Hybrid attention: Sliding window (1024) + global attention
- Text quantization: Hadamard rotation (128×128) + Lloyd-Max Q5 + torchao INT4
- Vision encoder: Preserved in BF16 (550M params) for full image quality
- KV cache: Hadamard rotation (256×256) + Lloyd-Max Q3 + real bit-packing
- Streaming loader: Per-module INT4 via `nn.Sequential` wrapper; peak VRAM = final model only
- Base model: google/gemma-4-31B-it (Apache 2.0)
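To make "real bit-packing" concrete: a 3-bit code only saves memory if eight codes are actually stored in three bytes, rather than one code per byte. The following is a minimal NumPy sketch of one possible layout (most significant bit first, codes packed back to back). The function names and the bit order are assumptions for illustration; the layout used by this repository's kernels may differ.

```python
import numpy as np


def pack_3bit(codes):
    """Pack integer codes in [0, 7] into bytes, 8 codes per 3 bytes."""
    codes = np.asarray(codes, dtype=np.uint8).reshape(-1)
    if codes.size % 8 != 0:
        raise ValueError("pad to a multiple of 8 codes before packing")
    # Split each code into its 3 bits (MSB first), then pack the bit stream.
    shifts = np.array([2, 1, 0], dtype=np.uint8)
    bits = (codes[:, None] >> shifts) & np.uint8(1)
    return np.packbits(bits.reshape(-1))


def unpack_3bit(packed, count):
    """Recover `count` 3-bit codes from a packed byte array."""
    bits = np.unpackbits(packed)[: count * 3].reshape(-1, 3)
    return (bits[:, 0] << 2) | (bits[:, 1] << 1) | bits[:, 2]


codes = np.random.default_rng(0).integers(0, 8, size=256)  # one head_dim=256 vector
packed = pack_3bit(codes)
restored = unpack_3bit(packed, codes.size)
print(packed.nbytes, "bytes packed vs", codes.size * 2, "bytes in FP16")
```

For a 256-element vector this stores 96 bytes instead of 512, which is the 16/3 ratio quoted for Q3.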

📖 Citation

```bibtex
@article{polarquant2025,
  title={PolarQuant: Hadamard-Rotated Lloyd-Max Quantization for LLM Compression},
  author={Vicentino, Caio},
  journal={arXiv preprint arXiv:2603.29078},
  year={2025},
  url={https://arxiv.org/abs/2603.29078}
}
```


🙏 Acknowledgements

Built on Google's Gemma 4 (Apache 2.0). Quantization by PolarQuant with torchao.

🚀 Quick Start

Install

```bash
pip install git+https://github.com/caiovicentino/polarengine-vllm.git
```

Load & Generate (1 line!)

```python
from polarengine_vllm import PolarQuantModel

model = PolarQuantModel.from_pretrained("caiovicentino1/Gemma-4-31B-it-PolarQuant-Q5-Vision")
print(model.generate("Hello, how are you?", max_new_tokens=100))
```

With KV Cache Compression (5.3x more context)

```python
model = PolarQuantModel.from_pretrained("caiovicentino1/Gemma-4-31B-it-PolarQuant-Q5-Vision", kv_cache_nbits=3)
# KV cache now uses 5.3x less memory, so longer conversations fit
print(model.generate("Explain quantum computing in detail.", max_new_tokens=500))
```

Benchmark

```bash
polarquant bench caiovicentino1/Gemma-4-31B-it-PolarQuant-Q5-Vision --ppl --chart
```

Gradio Demo

```bash
polarquant demo caiovicentino1/Gemma-4-31B-it-PolarQuant-Q5-Vision --share
```

📦 Method: PolarQuant

Hadamard Rotation + Lloyd-Max Optimal Centroids

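Unlike GGUF's uniform quantization grids, which space levels evenly, PolarQuant uses Lloyd-Max centroids that place quantization levels where weight density is highest. For a Gaussian distribution, the Lloyd-Max quantizer is the scalar codebook that minimizes mean squared error at a given number of levels, and the Hadamard rotation is applied first so that the rotated weights are approximately Gaussian.

At the same size, PolarQuant Q5 reaches cos_sim > 0.996, compared with ~0.99 for GGUF Q5_K_M.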
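The two steps can be sketched in a few lines of NumPy. This is an illustrative toy, not the code in this repository: the per-block RMS scaling, the codebook fitted on synthetic Gaussian samples, and all function names are assumptions made for the example. It rotates 128-element blocks with an orthonormal Walsh-Hadamard matrix, snaps each rotated value to the nearest of 32 Lloyd-Max centroids (5 bits), and measures cosine similarity after dequantization.

```python
import numpy as np


def hadamard(n):
    """Orthonormal Walsh-Hadamard matrix (Sylvester construction, n a power of 2)."""
    h = np.array([[1.0]])
    while h.shape[0] < n:
        h = np.block([[h, h], [h, -h]])
    return h / np.sqrt(n)


def lloyd_max(samples, levels, iters=50):
    """1-D Lloyd-Max: alternate nearest-centroid assignment and centroid update."""
    centroids = np.quantile(samples, (np.arange(levels) + 0.5) / levels)
    for _ in range(iters):
        edges = (centroids[:-1] + centroids[1:]) / 2  # decision boundaries
        idx = np.searchsorted(edges, samples)
        sums = np.bincount(idx, weights=samples, minlength=levels)
        counts = np.bincount(idx, minlength=levels)
        centroids = np.where(counts > 0, sums / np.maximum(counts, 1), centroids)
    return centroids


def quantize(w, block=128, bits=5, seed=0):
    blocks = w.reshape(-1, block)
    # Assumed scaling: per-block RMS, so rotated values are roughly N(0, 1).
    # (A block of all zeros would need special handling.)
    scale = np.linalg.norm(blocks, axis=1, keepdims=True) / np.sqrt(block)
    rotated = (blocks / scale) @ hadamard(block)
    # Assumed codebook: fitted once on synthetic standard-normal samples.
    gaussian = np.random.default_rng(seed).standard_normal(100_000)
    codebook = lloyd_max(gaussian, 2**bits)
    edges = (codebook[:-1] + codebook[1:]) / 2
    return np.searchsorted(edges, rotated), scale, codebook


def dequantize(codes, scale, codebook, block=128):
    # Look up centroids, undo the rotation (H is orthonormal), restore the scale.
    return (codebook[codes] @ hadamard(block).T) * scale


w = np.random.default_rng(1).standard_normal((256, 512)) * 0.02  # stand-in weights
codes, scale, codebook = quantize(w)
w_hat = dequantize(codes, scale, codebook).reshape(w.shape)
cos = float(np.sum(w * w_hat) / (np.linalg.norm(w) * np.linalg.norm(w_hat)))
print(f"cosine similarity at 5 bits: {cos:.4f}")
```

The stand-in weights here are already Gaussian, so the rotation changes little; on real weight matrices with outliers, the rotation is what spreads those outliers across the block so that a single Gaussian codebook remains a reasonable fit.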
