Autoresearch-540M: Arabic-First Language Model

A 540M-parameter language model trained from scratch with an Arabic-first curriculum, built as an educational and research project to explore training LLMs for Arabic from the ground up.

Model Details

| Property | Value |
|---|---|
| Parameters | 540M total (235M scaling parameters) |
| Architecture | GPT-2-style transformer (nanochat) |
| Layers | 16 |
| Hidden dim | 1024 |
| Heads | 8 (head_dim=128) |
| Sequence length | 2048 |
| Vocab size | 32,768 (custom Arabic-optimized tiktoken) |
| Training framework | nanochat @ commit 6ed7d1d |
| Precision | FP8 (tensorwise scaling) with BF16 compute |
| Hardware | 8× NVIDIA H100 80GB SXM |

Training Results

| Metric | Value |
|---|---|
| Best val_bpb | 1.155 (step 9,250) |
| Final val_bpb | 2.99 (step 12,500) |
| Final training loss | 1.74 |
| Total training time | 58.5 minutes |
| Sustained MFU | 54-55% |
| Peak throughput | 2.4M tok/sec |
| Training cost | ~$18 (1 hr × $18.13/hr) |
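The headline numbers can be cross-checked against each other. The snippet below only does arithmetic on figures quoted in this card (8 GPUs, 32 sequences × 2,048 tokens per device, 12,500 steps, 58.5 minutes, $18.13/hr). It assumes that the 58.5 minutes covers all 12,500 steps.

```python
# All constants come from this model card; only the arithmetic is added.
GPUS = 8
DEVICE_BATCH = 32        # sequences per GPU per step
SEQ_LEN = 2048           # tokens per sequence
STEPS = 12_500
TRAIN_MINUTES = 58.5
PRICE_PER_HOUR = 18.13   # USD for the 8x H100 node

tokens_per_step = GPUS * DEVICE_BATCH * SEQ_LEN
total_tokens = tokens_per_step * STEPS
avg_tok_per_sec = total_tokens / (TRAIN_MINUTES * 60)
cost = PRICE_PER_HOUR * TRAIN_MINUTES / 60

print(f"tokens/step:  {tokens_per_step:,}")
print(f"total tokens: {total_tokens / 1e9:.2f}B")
print(f"avg tok/sec:  {avg_tok_per_sec / 1e6:.2f}M")
print(f"compute cost: ${cost:.2f}")
```

The total (~6.55B tokens) matches the figure in the Data section. The ~1.87M tok/sec average is below the 2.4M peak. That gap is expected if the wall-clock time also includes compilation and periodic evaluation, although the card does not break the time down this way.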

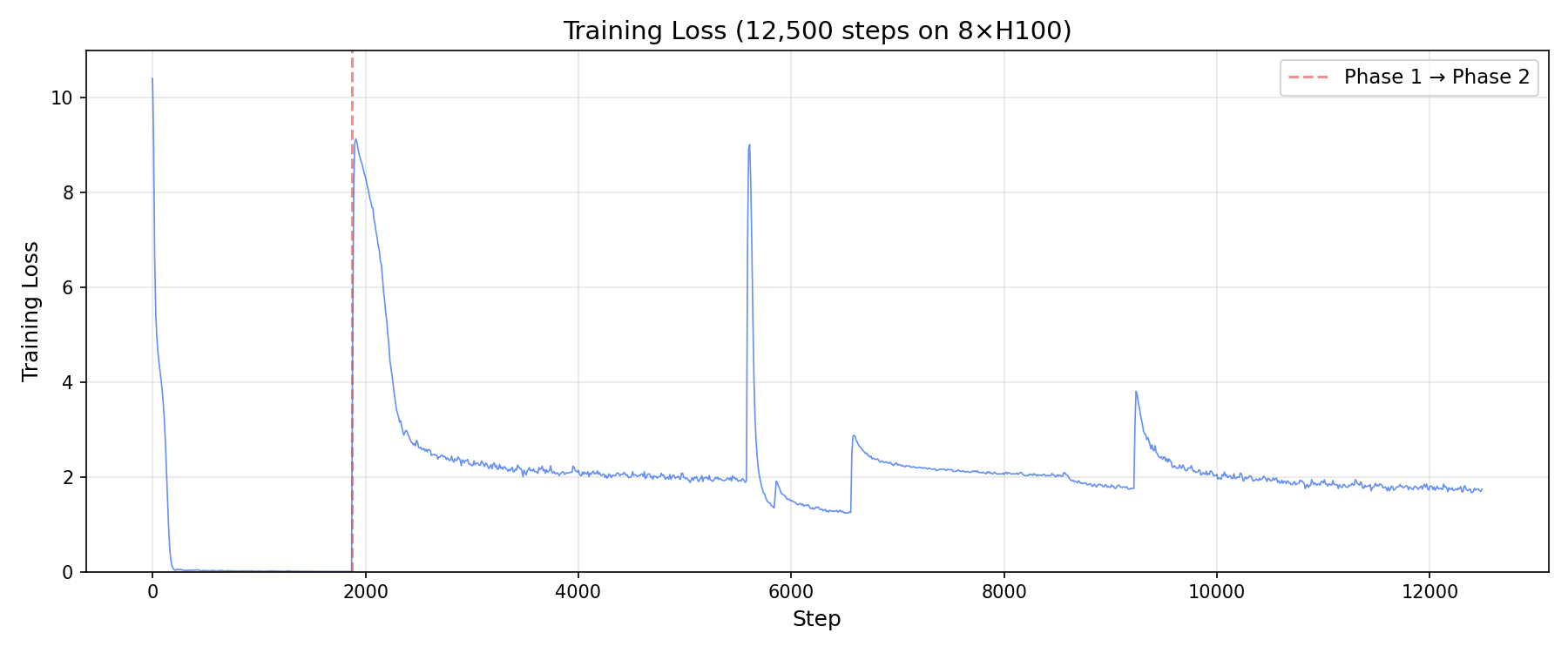

Training Loss

Validation BPB

Throughput & MFU

Val_bpb Trajectory

The validation loss oscillated significantly due to the two-phase curriculum:

- Phase 1 (steps 0-1,875): OpenITI memorization; val_bpb dropped to 2.95, then rose to 4.3 as the model overfit

- Phase 2 (steps 1,875-12,500): Diverse data — val_bpb initially spiked to 4.8, then recovered to 1.155 at step 9,250

- The oscillation reflects the model performing differently on Arabic vs English/Code/Math portions of the balanced val set
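val_bpb (bits per byte) normalizes the loss by raw text length instead of by token count, so it does not depend on the tokenizer. The sketch below shows the usual way to compute it, assuming per-token cross-entropy in nats and each target token's UTF-8 byte length, with special tokens counted as 0 bytes and skipped. nanochat's evaluation appears to follow the same idea, using `token_bytes.npy` for the byte lengths. `bits_per_byte` is a hypothetical helper name.

```python
import math

def bits_per_byte(token_losses_nats, token_byte_lengths):
    """Sum of per-token losses (nats) divided by ln(2) times the bytes they cover."""
    if len(token_losses_nats) != len(token_byte_lengths):
        raise ValueError("need one byte length per token loss")
    total_nats = 0.0
    total_bytes = 0
    for loss, nbytes in zip(token_losses_nats, token_byte_lengths):
        if nbytes > 0:                 # special tokens such as <|bos|> have 0 bytes
            total_nats += loss
            total_bytes += nbytes
    return total_nats / (math.log(2) * total_bytes)

print(bits_per_byte([1.0, 2.0, 0.5], [4, 6, 0]))
# Arabic letters take 2 bytes in UTF-8, while ASCII letters take 1
print(len("ب".encode("utf-8")), len("b".encode("utf-8")))  # 2 1
```

Because Arabic text has roughly twice as many bytes per character as English or code, the same per-token loss translates into different bpb values across domains. That difference is one reason a mixed validation set can swing noticeably.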

Training

Curriculum

Two-phase training with Arabic foundation:

- Phase 1 (15% = 1,875 steps): Classical Arabic scholarly texts (OpenITI corpus) — model memorized this small dataset deeply

- Phase 2 (85% = 10,625 steps): Mixed multilingual data (50% Arabic / 20% English / 10% Math / 20% Code)
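The card does not say how the Phase 2 mixture is applied (per step, per sequence, or by interleaving files). The sketch below shows one option: each optimizer step is assigned a single data category. Phase 1 is the first 15% of steps and is OpenITI only. Phase 2 draws a category using the 50/20/10/20 weights. `source_for_step` and the category labels are hypothetical.

```python
import random

TOTAL_STEPS = 12_500
PHASE1_STEPS = TOTAL_STEPS * 15 // 100          # 15% of steps -> 1,875
PHASE2_MIX = {"arabic": 0.50, "english": 0.20, "math": 0.10, "code": 0.20}

def source_for_step(step, rng):
    """Hypothetical helper: which data category feeds a given optimizer step."""
    if step < PHASE1_STEPS:
        return "openiti"                            # Phase 1: classical Arabic only
    names = list(PHASE2_MIX)
    weights = list(PHASE2_MIX.values())
    return rng.choices(names, weights=weights, k=1)[0]  # Phase 2: weighted draw

rng = random.Random(0)
counts = {}
for step in range(TOTAL_STEPS):
    src = source_for_step(step, rng)
    counts[src] = counts.get(src, 0) + 1
print(counts)
```

When a whole step comes from one domain, consecutive steps can lean toward a single category. Mixing within each batch would smooth this out, at the cost of a more complex loader.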

Data

6.55B tokens across 80 pre-tokenized binary files:

| Category | Files | Tokens | Description |
|---|---|---|---|
| Arabic | 39 | 2.66B | Arabic web text |
| English | 17 | 1.34B | English web text |
| Code | 14 | 1.31B | JavaScript + Python |
| Math | 8 | 756M | Mathematical text |
| OpenITI | 1 | 411M | Classical Arabic scholarly texts |
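Combining the table with the curriculum gives a rough estimate of how many passes the model made over each source. This assumes that every Phase 1 token is OpenITI and that the Phase 2 weights are exact token fractions. Both assumptions are simplifications, since the card does not describe the sampler.

```python
TOKENS_PER_STEP = 524_288
PHASE1_STEPS, PHASE2_STEPS = 1_875, 10_625

available = {  # tokens on disk, from the data table
    "openiti": 411e6, "arabic": 2.66e9, "english": 1.34e9,
    "code": 1.31e9, "math": 756e6,
}
phase2_mix = {"arabic": 0.50, "english": 0.20, "math": 0.10, "code": 0.20}

consumed = {"openiti": PHASE1_STEPS * TOKENS_PER_STEP}
for name, weight in phase2_mix.items():
    consumed[name] = PHASE2_STEPS * TOKENS_PER_STEP * weight

for name, n in consumed.items():
    print(f"{name:8s} {n / 1e9:5.2f}B tokens seen  ~{n / available[name]:.2f} epochs")
```

Under these assumptions, OpenITI is seen about 2.4 times within the first 15% of training. Repeating a small corpus that often is consistent with the memorization described under Limitations. The Phase 2 sources each get roughly one pass or less.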

Tokenizer

Custom 32K vocabulary tiktoken tokenizer optimized for Arabic:

- Arabic fertility: ~4 tokens per 22 characters (~5.5 characters per token, highly efficient)

- Trained on Arabic-majority corpus

- BOS token: `<|bos|>` (id=32760)
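A tokenizer's efficiency on a sample of text can be measured with a small helper that takes any `encode` function mapping a string to a list of token ids. This is a sketch. `tokenizer_stats` is a hypothetical name, and words are approximated by splitting on whitespace.

```python
def tokenizer_stats(texts, encode):
    """Characters per token and tokens per whitespace-separated word for a sample."""
    n_chars = sum(len(t) for t in texts)
    n_words = sum(len(t.split()) for t in texts)
    n_tokens = sum(len(encode(t)) for t in texts)
    return {
        "chars_per_token": n_chars / n_tokens,
        "tokens_per_word": n_tokens / n_words,
    }
```

With the tokenizer from this repo, you would call `tokenizer_stats(samples, enc.encode)`, where `enc` is loaded from `tokenizer.pkl` as in the Usage section. Higher characters per token (or fewer tokens per word) means shorter sequences for the same Arabic text.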

Training Details

- Total steps: 12,500

- Batch size: 524,288 tokens/step (8 GPUs × 32 sequences per device × 2,048 tokens per sequence), so no gradient accumulation is needed

- Optimizer: Muon (nanochat default)

- FP8: Enabled with tensorwise scaling

- Flash Attention 3: Enabled (Hopper GPU)

- Pre-tokenized data: Binary uint16 format — zero on-the-fly tokenization, instant batch loading
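The sketch below shows how a single DDP rank could read its slice of a pre-tokenized uint16 file. uint16 is sufficient because every id in the 32,768-token vocabulary is below 65,536. The sketch assumes a flat little-endian file of token ids with no header. The card does not document the actual layout, and `read_batch` is a hypothetical helper, not nanochat's loader. Each rank computes its own offset from `step` and `rank`, so no rank waits on tokenization. That is the property the card credits with fixing the multi-GPU hang.

```python
import sys
from array import array

def read_batch(path, step, rank, world_size, batch_size, seq_len):
    """Return (inputs, targets) for one rank and step from a flat uint16 token file.

    Hypothetical layout: little-endian uint16 token ids, no header. Each rank reads a
    contiguous window; targets are the inputs shifted left by one token.
    """
    window = batch_size * seq_len + 1              # +1 so the last target exists
    offset = (step * world_size + rank) * batch_size * seq_len
    tokens = array("H")
    assert tokens.itemsize == 2, "expected 2-byte unsigned shorts"
    with open(path, "rb") as f:
        f.seek(offset * tokens.itemsize)
        tokens.fromfile(f, window)                 # raises EOFError if the file is too short
    if sys.byteorder == "big":
        tokens.byteswap()                          # file is assumed little-endian
    inputs, targets = [], []
    for b in range(batch_size):
        chunk = tokens[b * seq_len : b * seq_len + seq_len + 1]
        inputs.append(list(chunk[:-1]))
        targets.append(list(chunk[1:]))
    return inputs, targets
```

In a real training loop, you would typically memory-map the file (for example, with `numpy.memmap`) and move the batches to the GPU as tensors. The offset arithmetic stays the same.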

Usage

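```python
import pickle
import sys

import torch

sys.path.insert(0, "nanochat")  # local clone of nanochat (trained at commit 6ed7d1d)

from nanochat.gpt import GPT, GPTConfig

# Load the tokenizer (a pickled tiktoken Encoding)
with open("tokenizer.pkl", "rb") as f:
    enc = pickle.load(f)

# Load the model weights (a PyTorch state_dict)
checkpoint = torch.load("model.pt", map_location="cpu", weights_only=False)
# Checkpoints saved from a torch.compile'd model may carry an "_orig_mod." prefix
state_dict = {k.removeprefix("_orig_mod."): v for k, v in checkpoint.items()}

config = GPTConfig(
    vocab_size=32768, n_layer=16, n_head=8, n_kv_head=8,
    n_embd=1024, sequence_len=2048, window_pattern="L",
)
model = GPT(config)
result = model.load_state_dict(state_dict, strict=False)
print("missing keys:", result.missing_keys)        # anything listed here was NOT loaded
print("unexpected keys:", result.unexpected_keys)
model.eval()

# Generate: model.generate yields one new token id at a time
bos = enc.encode_single_token("<|bos|>")
tokens = [bos] + enc.encode("بسم الله الرحمن الرحيم")
generated = list(model.generate(tokens, max_tokens=200, temperature=0.8, top_k=50))

# Decode the prompt plus the continuation, without the <|bos|> token
print(enc.decode(tokens[1:] + generated))
```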

Or use the included inference script:

```shell
PYTHONPATH=nanochat python inference.py --checkpoint model.pt --prompt "بسم الله الرحمن الرحيم" --interactive
```
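The `temperature` and `top_k` arguments control how the next token is sampled. The plain-Python sketch below illustrates the general technique, temperature-scaled top-k sampling. It is not nanochat's implementation, which works on torch tensors, and `sample_top_k` is a hypothetical name.

```python
import math
import random

def sample_top_k(logits, temperature=0.8, top_k=50, rng=random):
    """Sample one token id from raw logits using temperature scaling and top-k filtering."""
    if temperature == 0:
        return max(range(len(logits)), key=logits.__getitem__)   # greedy decoding
    k = min(top_k, len(logits))
    top = sorted(range(len(logits)), key=logits.__getitem__, reverse=True)[:k]
    scaled = [logits[i] / temperature for i in top]
    m = max(scaled)                               # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scaled]   # unnormalized softmax over the top k
    return rng.choices(top, weights=weights, k=1)[0]
```

Lower temperatures sharpen the distribution toward the most likely tokens. `top_k` removes the long tail entirely, which tends to reduce incoherent output from a small base model.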

Example Output

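```text
>>> بسم الله الرحمن الرحيم
أيسر التفاسير لكلام العلي الكبير
بسم الله الرحمن الرحيم الحمد لله رب العالمين والصلاة والسلام على المبعوث رحمة
للعالمين سيدنا ونبينا محمد وعلى آله الطيبين الطاهرين وأصحابه الغر الميامين
وأزواجه أمهات المؤمنين ومن تبعهم بإحسان إلى يوم الدين أما بعد
```

English translation: Prompt: "In the name of God, the Most Gracious, the Most Merciful." Output: "Aysar al-Tafasir li-Kalam al-Ali al-Kabir (The Easiest Exegesis of the Words of the Most High, the Great). In the name of God, the Most Gracious, the Most Merciful. Praise be to God, Lord of the worlds, and blessings and peace upon the one sent as a mercy to the worlds, our master and prophet Muhammad, and upon his good and pure family, his illustrious and blessed companions, and his wives, the mothers of the believers, and those who follow them in goodness until the Day of Judgment. To proceed:"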

Files

| File | Size | Description |
|---|---|---|
| model.pt | 1.5 GB | Final checkpoint (step 12,500) — PyTorch state_dict |
| checkpoints/model_009000_best_valbpb.pt | 1.5 GB | Best val_bpb checkpoint (1.155 at step 9,250) |
| optimizer/optim_012500_rank[0-7].pt | 2.2 GB | Optimizer state (8 DDP ranks) for training resume |
| tokenizer.pkl | 466 KB | tiktoken tokenizer (32K Arabic-optimized vocab) |
| token_bytes.npy | 129 KB | Token byte mappings |
| inference.py | 4 KB | Inference and generation script (CPU/MPS/CUDA) |
| meta.json | 1.5 KB | Training metadata |

Limitations

- Base model only — generates text continuations, not conversations. No instruction following or chat capability.

- Arabic-dominant — English prompts tend to produce Arabic output. The model "thinks" in Arabic due to the Phase 1 curriculum.

- No chat tokens — the tokenizer lacks `<|user|>`, `<|assistant|>`, etc. SFT is required for conversational use.

- Val_bpb instability — validation loss oscillates (1.15-4.8) depending on which training data type was last processed. The balanced val set exposes domain imbalance in the model's representations.

- Curriculum design flaw — Phase 1 OpenITI-only training caused memorization (loss 0.006 by step 200). Future runs should use broader Arabic data in Phase 1. See ADR-032 and ADR-033 in the source repo.

- Small scale — 540M params is educational/research scale. Not production quality.

- Non-commercial — CC BY-NC 4.0 license. Research and educational use only.

Training Journey

This model was built as a learning-in-public project, documenting every decision and failure:

- 4 H100 attempts over 4 days — $113 total spent

- Attempt 1 ($15): CUDA 13.1 + PyTorch nightly — torch.compile hung

- Attempt 2 ($5): PyTorch 2.6.0 — torch.compile hung

- Attempt 3 ($55): PyTorch 2.9.1 — 8-GPU training hung (root cause: Arabic BPE tokenization rank skew)

- Attempt 4 ($38): Pre-tokenized binary data — success! 58.5 min training, 55% MFU

Key Lessons

- On-the-fly tokenization of Arabic text creates rank skew in multi-GPU training (ranks tokenize at different speeds)

- Pre-tokenized binary data eliminates this entirely — <5ms per batch vs 1-15 min when tokenizing on the fly

- Narrow→Broad curriculum (OpenITI-only Phase 1) causes weight space warping that resists generalization

- Broad→Focused→Full curriculum is better (train diverse first, specialize second)

- 33 Architecture Decision Records and 58 Lessons Learned entries in the source repo

Citation

```bibtex
@misc{autoresearch2026,
  title={Autoresearch-540M: Arabic-First Language Model},
  author={AENSaid},
  year={2026},
  url={https://huggingface.co/AENSaid/autoresearch-540m}
}
```

Acknowledgments

- nanochat by Andrej Karpathy — training framework

- OpenITI — classical Arabic scholarly texts

- Built with Claude Code (Anthropic)