LoopUS: Recasting Pretrained LLMs into Looped Latent Refinement Models

Taekhyun Park¹, Yongjae Lee¹, Dohee Kim², Hyerim Bae¹†

¹BAELAB, Pusan National University, Busan, Korea

²DOLAB, Changwon National University, Changwon, Korea


Abstract

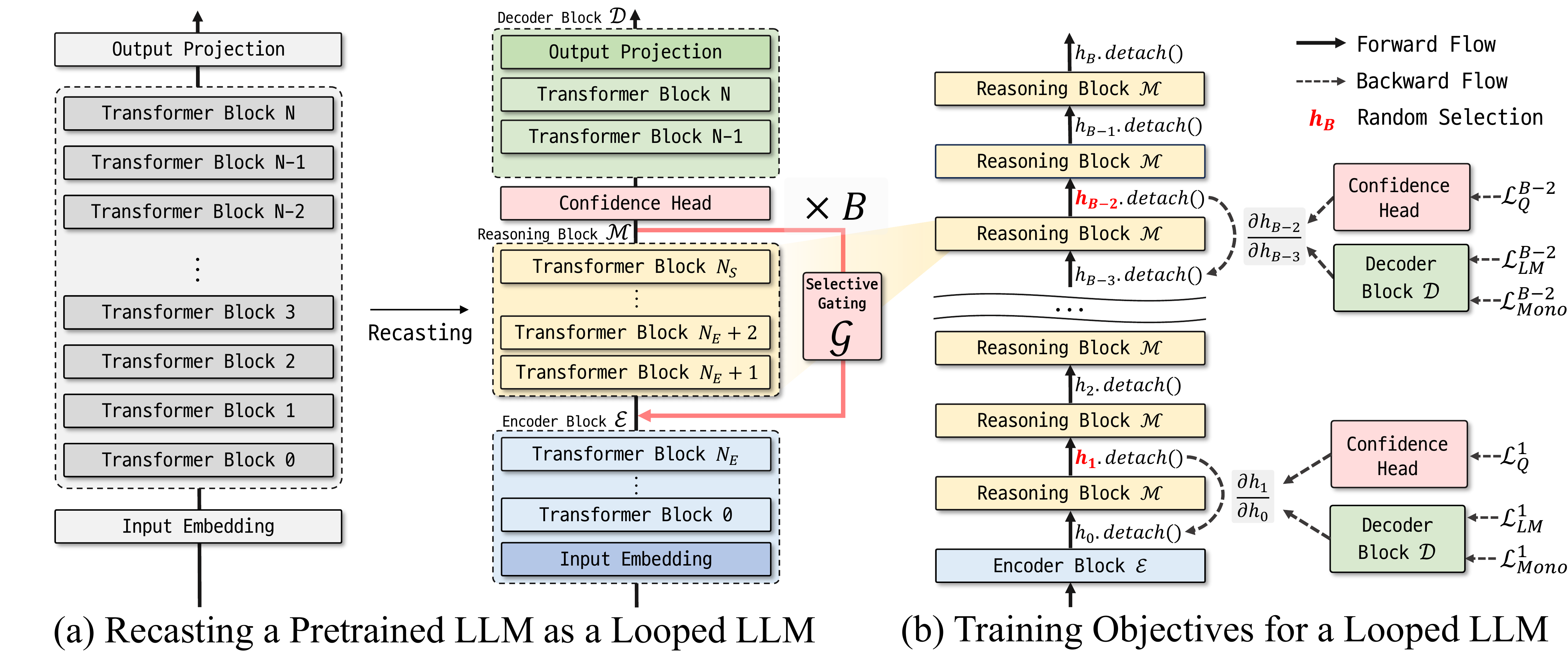

Looped computation is a promising way to improve the reasoning-oriented performance of LLMs by scaling test-time compute. Looped Depth Up-Scaling (LoopUS) is a post-training framework that converts a standard pretrained LLM into a looped architecture by recasting it as an encoder, a looped reasoning block, and a decoder. This turns a non-looped model into a looped one while guarding against both computational bottlenecks and representation collapse. Through stable latent looping, LoopUS improves reasoning-oriented performance without lengthening the generated reasoning traces and without training a recurrent model from scratch.
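To make the encoder / looped block / decoder structure concrete, here is a minimal Python sketch of a looped forward pass. It is not the LoopUS implementation: the class `LoopedModel`, the helpers `make_shift_layer` and `apply_stack`, and the split points `enc_end` / `dec_start` are hypothetical, each "layer" is a toy function standing in for a pretrained transformer layer, and none of LoopUS's safeguards against representation collapse are modeled.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Layer = Callable[[Vector], Vector]


def make_shift_layer(delta: float) -> Layer:
    """Hypothetical stand-in for a pretrained layer: adds `delta` to every coordinate."""
    def layer(h: Vector) -> Vector:
        return [v + delta for v in h]
    return layer


def apply_stack(layers: Sequence[Layer], h: Vector) -> Vector:
    """Run a hidden state through a stack of layers in order."""
    for layer in layers:
        h = layer(h)
    return h


class LoopedModel:
    """Toy encoder / looped block / decoder view of a pretrained layer stack."""

    def __init__(self, layers: Sequence[Layer], enc_end: int, dec_start: int) -> None:
        # Hypothetical split points; how LoopUS actually partitions the layers is not shown here.
        self.encoder = list(layers[:enc_end])
        self.loop_block = list(layers[enc_end:dec_start])
        self.decoder = list(layers[dec_start:])

    def forward(self, x: Vector, n_recursion: int) -> Vector:
        h = apply_stack(self.encoder, x)         # encode the input once
        for _ in range(n_recursion):             # refine the latent state n times,
            h = apply_stack(self.loop_block, h)  # reusing the same block on each pass
        return apply_stack(self.decoder, h)      # decode the refined state once


layers = [make_shift_layer(1.0) for _ in range(6)]
model = LoopedModel(layers, enc_end=2, dec_start=4)
print(model.forward([0.0, 1.0], n_recursion=1))  # [6.0, 7.0]: same as the unlooped stack
print(model.forward([0.0, 1.0], n_recursion=3))  # [10.0, 11.0]
```

With `n_recursion=1` the toy model applies every layer exactly once, so it matches the original stack; larger values reuse the middle block to refine the same latent state further without generating any extra tokens.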

QuickStart

To use this model, please follow the installation and usage instructions from the official repository.

Installation

```bash
git clone https://github.com/Thrillcrazyer/LoopUS.git
cd LoopUS
uv sync
```

Chat Mode

You can run the model in chat mode with the following command:

```bash
uv run chat.py --model-name Thrillcrazyer/Qwen3_8B_LoopUS
```

Qualitative Generation

```bash
uv run LoopUS-generate \
  --model-name Qwen/Qwen3-8B-Base \
  --decomposed-model Thrillcrazyer/Qwen3_8B_LoopUS \
  --prompt "The meaning of life is" \
  --n-recursion 8
```
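Assuming `--n-recursion` sets how many times the looped reasoning block is applied (our reading of the flag; check the repository for its exact semantics), the extra test-time compute grows linearly with it. The sketch below counts layer evaluations per forward step for a hypothetical 4 / 8 / 4 layer split; the function `layer_passes` and the layer counts are illustrative and are not taken from LoopUS or Qwen3-8B.

```python
def layer_passes(n_enc: int, n_loop: int, n_dec: int, n_recursion: int) -> int:
    """Layer evaluations per forward step, assuming the encoder and decoder run once
    and the looped block runs `n_recursion` times. Counts layers only, not FLOPs."""
    if n_recursion < 0:
        raise ValueError("n_recursion must be non-negative")
    return n_enc + n_recursion * n_loop + n_dec


# Hypothetical split: 4 encoder layers, 8 looped layers, 4 decoder layers.
N_ENC, N_LOOP, N_DEC = 4, 8, 4
baseline = layer_passes(N_ENC, N_LOOP, N_DEC, 1)  # one pass = the original 16-layer stack

for n in (1, 2, 4, 8):
    passes = layer_passes(N_ENC, N_LOOP, N_DEC, n)
    print(f"n_recursion={n}: {passes} layer passes ({passes / baseline:.2f}x baseline)")
```

Under these assumptions, a value of 8 costs 72 layer passes per step, 4.5x the unlooped stack, while the number of generated tokens stays the same.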

Illustration of LoopUS

Citation

```bibtex
@misc{park2024loopus,
  title={LoopUS: Recasting Pretrained LLMs into Looped Latent Refinement Models},
  author={Taekhyun Park and Yongjae Lee and Dohee Kim and Hyerim Bae},
  year={2024},
  eprint={2605.11011},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```


Model tree for Thrillcrazyer/Qwen3-8B_LoopUS

Base model: Qwen/Qwen3-8B-Base