base_model:

- Qwen/Qwen3-4B-Base

datasets:

- HuggingFaceFW/fineweb-edu

license: apache-2.0

model_name: Qwen3_1.7B_LoopUS_SFT

pipeline_tag: text-generation

tags:

- LoopUS

- LoopedTransformers

LoopUS: Recasting Pretrained LLMs into Looped Latent Refinement Models

¹ BAELAB, Pusan National University, Busan, Korea

² DOLAB, Changwon National University, Changwon, Korea

Taekhyun Park¹, Yongjae Lee¹, Dohee Kim², Hyerim Bae¹,†

🌟 GitHub | 🌐 Project Page | 📄 Paper

Abstract

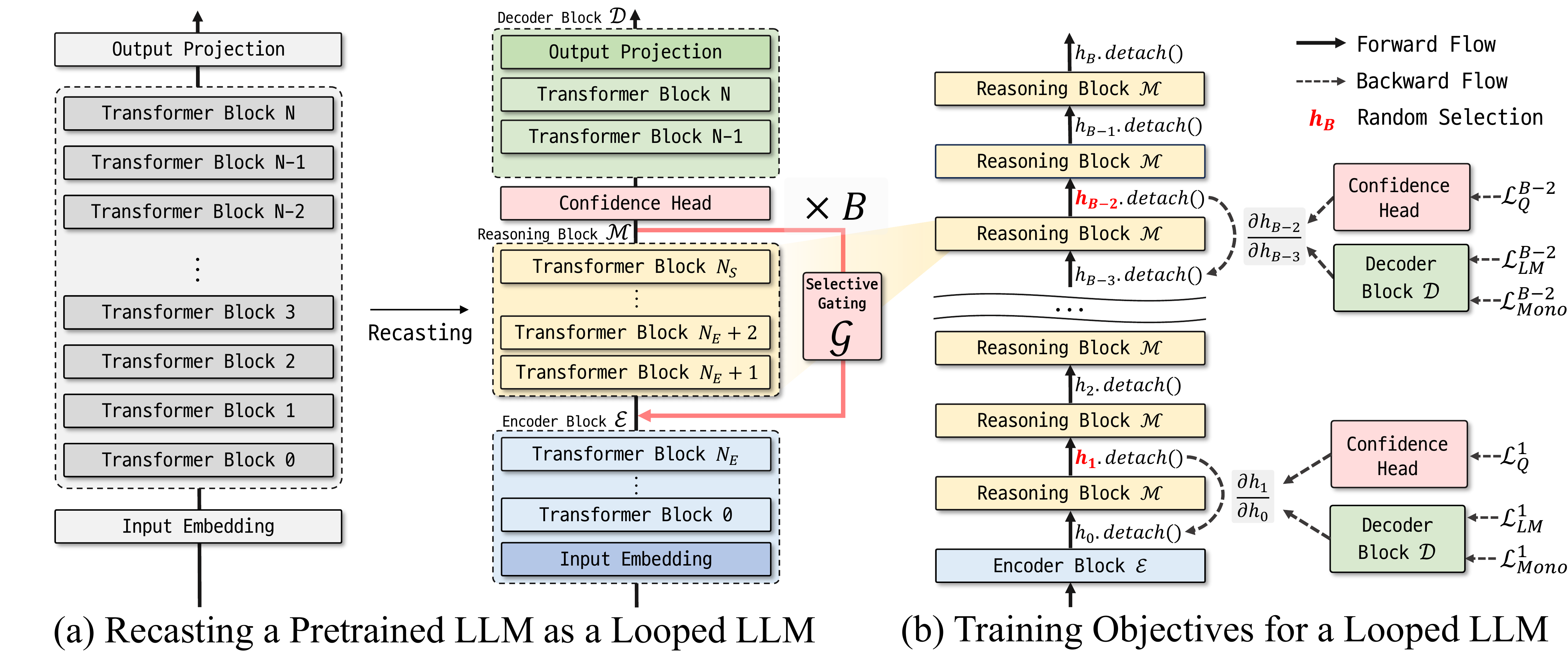

Looped computation shows promise in improving the reasoning-oriented performance of LLMs by scaling test-time compute. Looped Depth Up-Scaling (LoopUS) is a post-training framework that converts a standard pretrained LLM into a looped architecture. LoopUS recasts the pretrained LLM into an encoder, a looped reasoning block, and a decoder. It improves reasoning-oriented performance without extending the generated traces or requiring recurrent training from scratch.
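
The encoder / looped block / decoder decomposition described above can be illustrated with a toy sketch. This is not the LoopUS implementation: it assumes the three parts are contiguous slices of the original layer stack and that the loop count is fixed, neither of which is stated in this card. The names `split_layers`, `looped_forward`, `n_encoder`, `n_decoder`, and `n_loops` are hypothetical, and each "layer" is a stand-in that only records its index so the execution order is visible.

```python
from typing import Callable, List, Sequence, Tuple

# Stand-in for a transformer layer: takes the trace so far, returns an extended trace.
Layer = Callable[[List[int]], List[int]]


def make_layer(index: int) -> Layer:
    """Build a toy layer that appends its own index to the trace."""
    def layer(trace: List[int]) -> List[int]:
        return trace + [index]
    return layer


def split_layers(
    layers: Sequence[Layer], n_encoder: int, n_decoder: int
) -> Tuple[List[Layer], List[Layer], List[Layer]]:
    """Partition a layer stack into (encoder, loop_block, decoder) by position.

    Assumption: contiguous slices, with at least one layer left for the loop block.
    """
    if n_encoder < 0 or n_decoder < 0 or n_encoder + n_decoder >= len(layers):
        raise ValueError("the looped block must contain at least one layer")
    end = len(layers) - n_decoder
    return list(layers[:n_encoder]), list(layers[n_encoder:end]), list(layers[end:])


def looped_forward(
    hidden: List[int],
    encoder: Sequence[Layer],
    loop_block: Sequence[Layer],
    decoder: Sequence[Layer],
    n_loops: int,
) -> List[int]:
    """Run the encoder once, the loop block n_loops times, then the decoder once."""
    for layer in encoder:
        hidden = layer(hidden)
    for _ in range(n_loops):
        for layer in loop_block:
            hidden = layer(hidden)
    for layer in decoder:
        hidden = layer(hidden)
    return hidden


layers = [make_layer(i) for i in range(6)]
enc, loop, dec = split_layers(layers, n_encoder=2, n_decoder=2)
print(looped_forward([], enc, loop, dec, n_loops=3))
# prints [0, 1, 2, 3, 2, 3, 2, 3, 4, 5]
```

In this sketch, raising `n_loops` adds compute per forward pass by reusing the same middle layers, while the number of generated tokens stays unchanged, which matches the abstract's point about not extending the generated traces.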

QuickStart

To use this model, clone the official repository and run the chat interface:

git clone https://github.com/Thrillcrazyer/LoopUS.git

cd LoopUS

uv sync

uv run chat.py --model-name Thrillcrazyer/Qwen3_1.7B_LoopUS_SFT

Illustration of LoopUS

Citation

If you find LoopUS useful in your research, please cite:

@article{park2026loopus,

title={LoopUS: Recasting Pretrained LLMs into Looped Latent Refinement Models},

author={Park, Taekhyun and Lee, Yongjae and Kim, Dohee and Bae, Hyerim},

journal={arXiv preprint arXiv:2605.11011},

year={2026}

}