---
license: apache-2.0
datasets:
- HuggingFaceFW/fineweb-edu
- mattwesney/General_Inquiry_Thinking-Chain-Of-Thought
- tatsu-lab/alpaca
- databricks/databricks-dolly-15k
- TeichAI/Step-3.5-Flash-2600x
- TeichAI/convo-v1
language:
- en
tags:
- small
- glint
- compactai
---

Note: You must use the custom Python script to run this model properly. You can download it from this repository by opening the downloads option and scrolling down.

Glint-1

⚠️ IMPORTANT NOTICE

- This model is experimental. Glint-1 is a 1M-parameter research model designed for architectural experimentation.

- Performance characteristics: Despite its compact size, the model exhibits behavioral patterns comparable to those of ~2M-parameter models.

- Not production-ready: This release demonstrates functional capability, not optimal performance.

Overview

Glint-1 is an ultra-compact language model developed by CompactAI following our rebrand initiative. This 1M-parameter model demonstrates that efficient architectural design can yield behavioral characteristics typically associated with larger models (~2M parameters).

This release includes both pretrained weights (base language modeling) and instruction-tuned weights (fine-tuned for conversational tasks).

Model Specifications

| Specification | Value |
|---|---|
| Architecture | Transformer Decoder |
| Parameters | ~1M |
| Effective Behavior | ~2M-parameter equivalent |
| Context Length | 2,048 tokens |
| Vocabulary | Standard |
| Normalization | RMSNorm |
| Activation | SwiGLU |
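The table names RMSNorm and SwiGLU but this card does not show Glint-1's own implementation. The following is a minimal pure-Python sketch of the standard formulations, assuming a learned per-channel gain for RMSNorm, `eps=1e-6`, and a bias-free SwiGLU feed-forward with gate, up, and down projections. The names `w_gate`, `w_up`, and `w_down` are hypothetical, and this is not the code shipped with the model.

```python
import math

def rms_norm(x, weight, eps=1e-6):
    """RMSNorm: divide x by its root-mean-square, then apply a learned per-channel gain.
    eps=1e-6 is an assumed value, not taken from Glint-1."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [w * v / rms for v, w in zip(x, weight)]

def silu(z):
    """Swish/SiLU activation z * sigmoid(z), written to avoid overflow for large |z|."""
    if z >= 0:
        return z / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return z * ez / (1.0 + ez)

def matvec(w, x):
    """Multiply a weight matrix (list of rows) by a vector."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]

def swiglu_ffn(x, w_gate, w_up, w_down):
    """SwiGLU feed-forward: W_down (SiLU(W_gate x) * (W_up x)), no biases (assumed).
    w_gate, w_up, w_down are hypothetical names for the three projections."""
    gate = [silu(g) for g in matvec(w_gate, x)]
    up = matvec(w_up, x)
    hidden = [g * u for g, u in zip(gate, up)]  # element-wise gating
    return matvec(w_down, hidden)
```

After `rms_norm` with a gain of all ones, the output has a mean square of approximately 1. Unlike LayerNorm, it does not subtract the mean.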

Benchmarks

Glint-1 has been evaluated on standard language modeling and reasoning benchmarks:

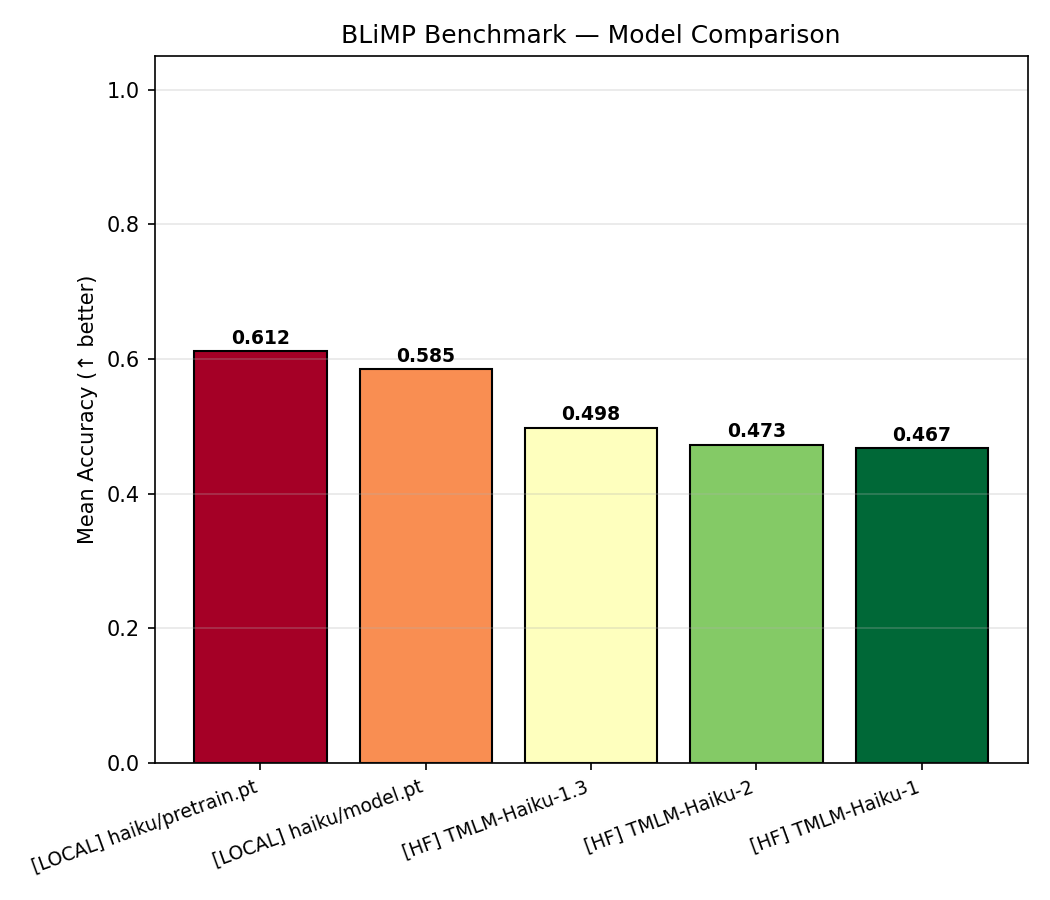

BLiMP Benchmark

Grammaticality minimal pairs across 67 paradigms. Accuracy is the percentage of pairs for which the model assigns lower perplexity to the grammatical sentence than to its ungrammatical counterpart.
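The BLiMP metric described above can be sketched as follows. This assumes you already have per-token natural-log probabilities for each sentence. Obtaining them requires the model's custom script, and the `(good_logprobs, bad_logprobs)` pair layout is hypothetical. Because the card states the comparison in terms of perplexity, the sketch compares length-normalized scores. Comparing summed log-probabilities instead can give different results when the two sentences have different token counts.

```python
import math

def perplexity(token_logprobs):
    """Per-token perplexity from natural-log token probabilities: exp(-mean log p)."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))

def blimp_accuracy(pairs):
    """pairs: list of (grammatical_logprobs, ungrammatical_logprobs) (hypothetical layout).
    Returns the percentage of pairs where the grammatical sentence has strictly lower perplexity."""
    correct = sum(1 for good, bad in pairs if perplexity(good) < perplexity(bad))
    return 100.0 * correct / len(pairs)

pairs = [
    ([-1.0, -2.0], [-1.5, -2.5]),  # grammatical sentence is more likely: counted correct
    ([-3.0, -1.0], [-1.0, -1.0]),  # ungrammatical sentence is more likely: counted wrong
]
print(blimp_accuracy(pairs))  # 50.0
```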

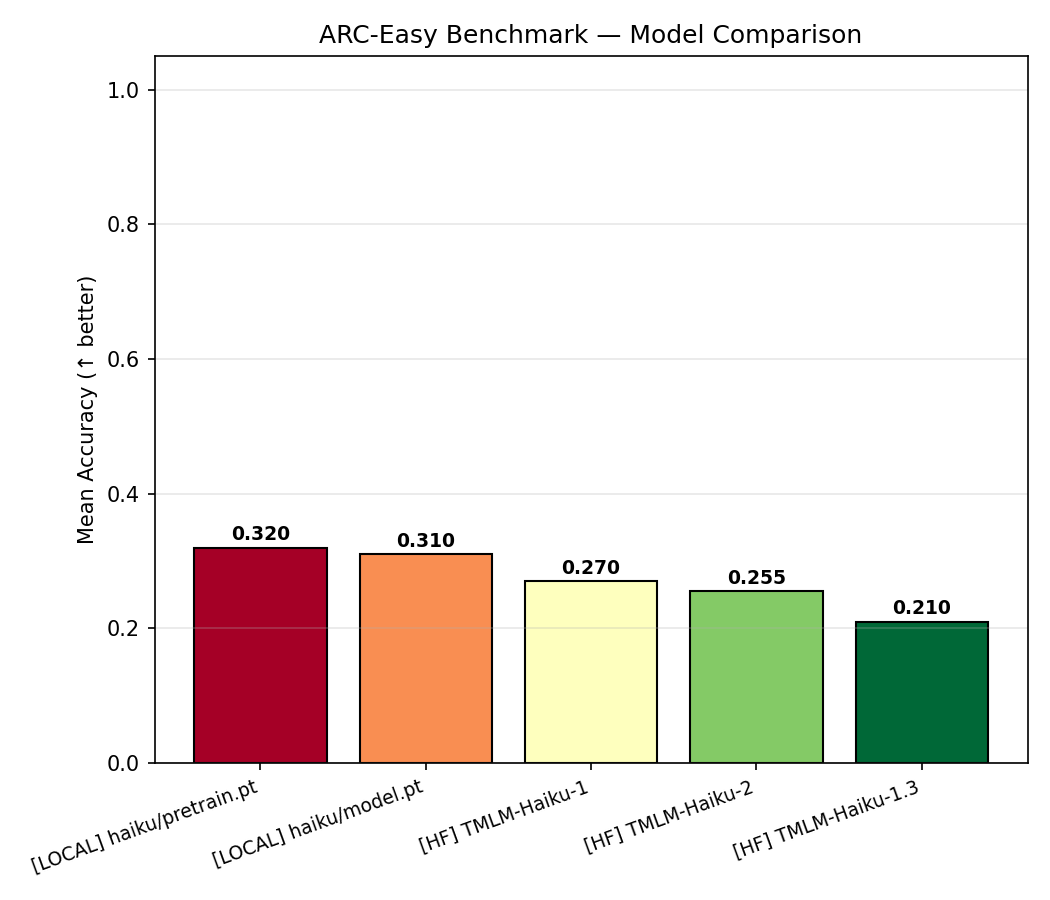

ARC-Easy Benchmark

Multiple-choice science QA (~2.4K questions). For each question, the model selects the answer choice with the lowest perplexity.
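Perplexity-based answer selection amounts to an argmin over choices. This sketch assumes each choice has already been scored as per-token log-probabilities of the answer text conditioned on the question. The prompt format and the `(choice_logprobs, gold_index)` layout are hypothetical and not taken from the evaluation used for this card.

```python
import math

def perplexity(token_logprobs):
    """Per-token perplexity from natural-log token probabilities."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))

def pick_answer(choice_logprobs):
    """Return the index of the answer choice with the lowest perplexity."""
    ppls = [perplexity(lp) for lp in choice_logprobs]
    return min(range(len(ppls)), key=ppls.__getitem__)

def arc_accuracy(questions):
    """questions: list of (choice_logprobs, gold_index) tuples (hypothetical layout).
    Returns accuracy as a percentage."""
    correct = sum(1 for choices, gold in questions if pick_answer(choices) == gold)
    return 100.0 * correct / len(questions)

choices = [[-2.0, -2.0], [-0.5, -1.0], [-3.0]]
print(pick_answer(choices))  # 1
```

Length normalization (per-token rather than total log-probability) keeps longer answer choices from being penalized simply for having more tokens.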

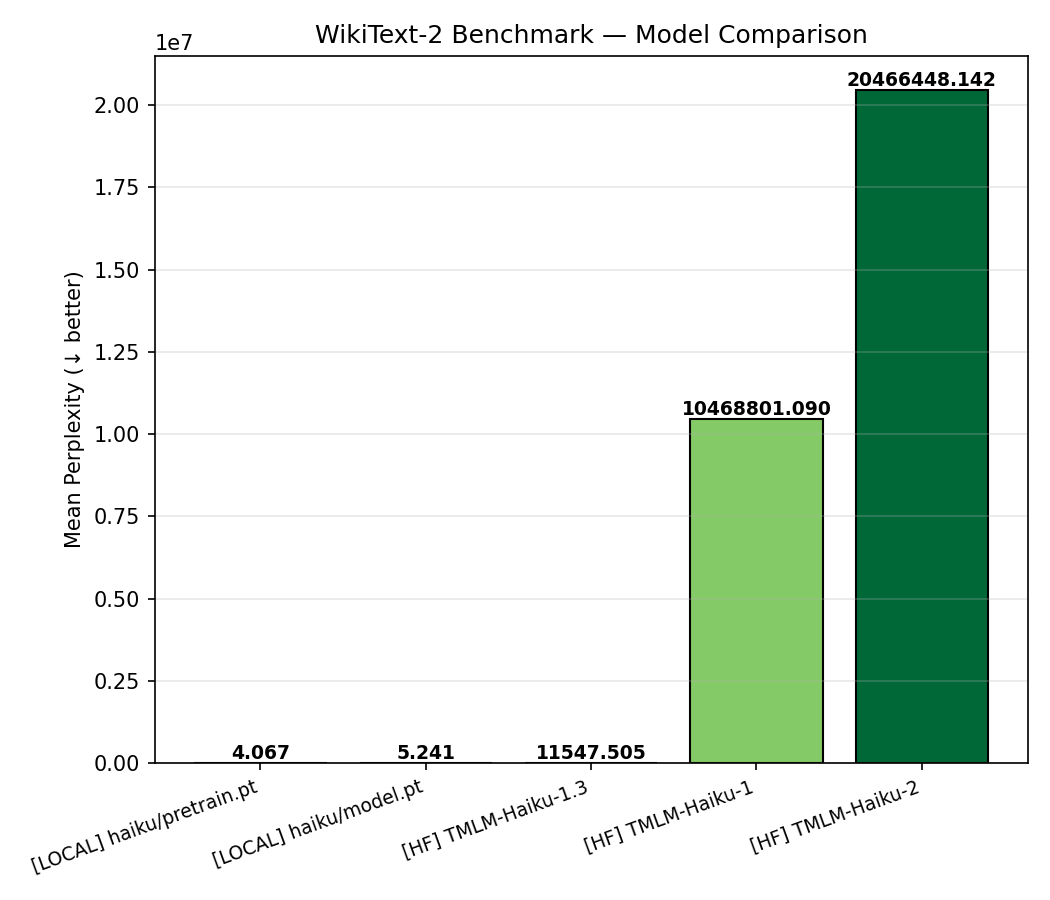

WikiText-2 Benchmark

Language modeling perplexity on the WikiText-2 test split (Wikipedia articles). Lower is better.
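Corpus-level perplexity is the exponential of the total negative log-likelihood divided by the total number of scored tokens. The card does not say how the test split was chunked. This sketch assumes non-overlapping windows no longer than the 2,048-token context from the specifications table, with per-token NLLs (in nats) already computed by the model.

```python
import math

CONTEXT_LENGTH = 2048  # context length from the Model Specifications table

def split_into_windows(token_ids, window=CONTEXT_LENGTH):
    """Split a long token sequence into non-overlapping windows that fit the context."""
    return [token_ids[i:i + window] for i in range(0, len(token_ids), window)]

def corpus_perplexity(window_nlls):
    """window_nlls: one list of per-token negative log-likelihoods (nats) per window.
    Perplexity = exp(total NLL / total scored tokens)."""
    total_nll = sum(sum(w) for w in window_nlls)
    total_tokens = sum(len(w) for w in window_nlls)
    return math.exp(total_nll / total_tokens)
```

With non-overlapping windows, the first tokens of each window see little context. A strided (sliding-window) evaluation usually reports lower perplexity, so numbers are only comparable under the same chunking scheme.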

Training Details

| Hyperparameter | Value |
|---|---|
| Batch Size | 48 |
| Learning Rate | 8e-4 (pretrain), 2e-4 (SFT) |
| Warmup | 300 steps |
| Weight Decay | 0.02 |
| Max Grad Norm | 1.0 |
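The sketch below shows how the warmup, weight decay, and gradient-norm values in the table are typically applied. Several pieces are assumptions because the card does not state them. Warmup is assumed to be linear and the learning rate is assumed constant afterward. Weight decay is shown in the decoupled, AdamW-style form even though the optimizer is not named. All function names are hypothetical.

```python
import math

# Values from the Training Details table.
PRETRAIN_LR = 8e-4
SFT_LR = 2e-4
WARMUP_STEPS = 300
WEIGHT_DECAY = 0.02
MAX_GRAD_NORM = 1.0

def warmup_lr(step, peak_lr=PRETRAIN_LR, warmup_steps=WARMUP_STEPS):
    """Linear warmup reaching peak_lr at step warmup_steps - 1 (0-indexed steps).
    Holding the rate constant afterward is an assumption; the card gives no decay schedule."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    return peak_lr

def clip_grad_norm(grads, max_norm=MAX_GRAD_NORM):
    """Rescale a flat list of gradients so their global L2 norm is at most max_norm.
    Returns (clipped_grads, norm_before_clipping)."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads), norm
    scale = max_norm / norm
    return [g * scale for g in grads], norm

def decoupled_weight_decay(params, lr, weight_decay=WEIGHT_DECAY):
    """AdamW-style decoupled decay p <- p - lr * wd * p (optimizer choice is assumed)."""
    return [p - lr * weight_decay * p for p in params]
```

In PyTorch, `torch.nn.utils.clip_grad_norm_` performs the same global-norm clipping. For SFT, the same schedule would be called with `peak_lr=SFT_LR`.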

Limitations

- Repetition: May exhibit repetitive generation patterns

- Knowledge: Limited world knowledge due to parameter constraints

- Reliability: Not suitable for production applications or critical tasks

- Purpose: Intended for research, education, and architectural benchmarking

Usage

This model is released for research purposes. While functional, users should not expect state-of-the-art performance. The model demonstrates that compact architectures can achieve reasonable behavioral characteristics, making it suitable for:

- Architectural research

- Edge deployment experiments

- Educational purposes

- Baseline comparisons

Generated by CompactAI for research purposes. Use responsibly.