---
language: en
license: apache-2.0
library_name: diffusers
base_model: black-forest-labs/FLUX.1-dev
tags:
- flux
- diffusers
- lora
- cmo
- text-to-image
pipeline_tag: text-to-image
---

FLUX.1-dev-CMO

🤗 Hugging Face | 📄 arXiv

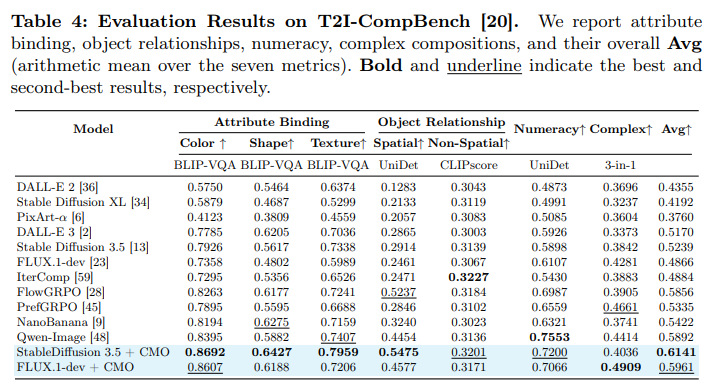

🌟 Official LoRA Adapter for Correlation-Weighted Multi-Reward Optimization for Compositional Generation

This repository contains the official LoRA adapter for black-forest-labs/FLUX.1-dev. The adapter was fine-tuned with CMO (Correlation-Weighted Multi-Reward Optimization) to improve compositional generation.

🚀 Usage

The code below loads the LoRA adapter, merges it into the transformer of the base FLUX.1-dev model, and generates an image from a compositional prompt.

    import torch
    from diffusers import FluxPipeline
    from peft import PeftModel

    model_id = "black-forest-labs/FLUX.1-dev"
    lora_ckpt_path = "Bruece/FLUX.1-dev-CMO"
    device = "cuda"

    # Load the base FLUX.1-dev pipeline in bfloat16.
    pipe = FluxPipeline.from_pretrained(model_id, torch_dtype=torch.bfloat16)

    # Attach the CMO LoRA adapter to the transformer, then fold its weights into the base weights.
    pipe.transformer = PeftModel.from_pretrained(pipe.transformer, lora_ckpt_path)
    pipe.transformer = pipe.transformer.merge_and_unload()
    pipe = pipe.to(device)

    # Generate a 512x512 image from a compositional prompt and save it.
    prompt = 'a photo of a black kite and a green bear'
    image = pipe(prompt, height=512, width=512, num_inference_steps=40, guidance_scale=4.5).images[0]
    image.save("flux_cmo_lora.png")
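To make the merge step more concrete, here is a toy sketch of what folding a LoRA update into a frozen weight amounts to under the standard LoRA formulation, `W' = W + (lora_alpha / rank) * B @ A`. It is not the PEFT implementation. The matrices, `rank`, and `lora_alpha` are made-up values for illustration, since this card does not state the adapter's actual hyperparameters, and plain Python lists are used only to keep the sketch dependency-free.

```python
# Toy illustration of merging a LoRA update into a frozen weight matrix.
# All shapes and values are hypothetical; they are not taken from this adapter.

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def add(a, b):
    """Element-wise sum of two matrices of the same shape."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def scale(a, s):
    """Multiply every entry of a matrix by the scalar s."""
    return [[x * s for x in row] for row in a]

# Frozen base weight W (2x3) and low-rank factors B (2x1), A (1x3), so rank = 1.
W = [[1.0, 0.0, 2.0],
     [0.0, 1.0, -1.0]]
B = [[0.5],
     [-1.0]]
A = [[2.0, 0.0, 4.0]]
rank, lora_alpha = 1, 2.0
scaling = lora_alpha / rank

x = [[1.0], [2.0], [3.0]]  # input as a column vector

# Unmerged forward pass: base output plus the scaled low-rank correction.
unmerged = add(matmul(W, x), scale(matmul(B, matmul(A, x)), scaling))

# Merged forward pass: fold the correction into the weight once, then apply it.
W_merged = add(W, scale(matmul(B, A), scaling))
merged = matmul(W_merged, x)

# Both paths give the same output, so the adapter can be dropped after merging.
assert merged == unmerged
```

Once the update is folded in, inference runs through a single weight matrix with no adapter layers left in the forward pass, which is why the usage code calls `merge_and_unload()` before moving the pipeline to the GPU.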

🖼️ Qualitative Results

🛠️ Training Details

- Base Model: FLUX.1-dev

- Algorithm: Correlation-Weighted Multi-Reward Optimization (CMO)

- Precision: bfloat16

📜 Citation

If you find this model useful for your research, please cite:

    @article{wi2026correlation,
      title={Correlation-Weighted Multi-Reward Optimization for Compositional Generation},
      author={Wi, Jungmyung and Kim, Hyunsoo and Kim, Donghyun},
      journal={arXiv preprint arXiv:2603.18528},
      year={2026}
    }