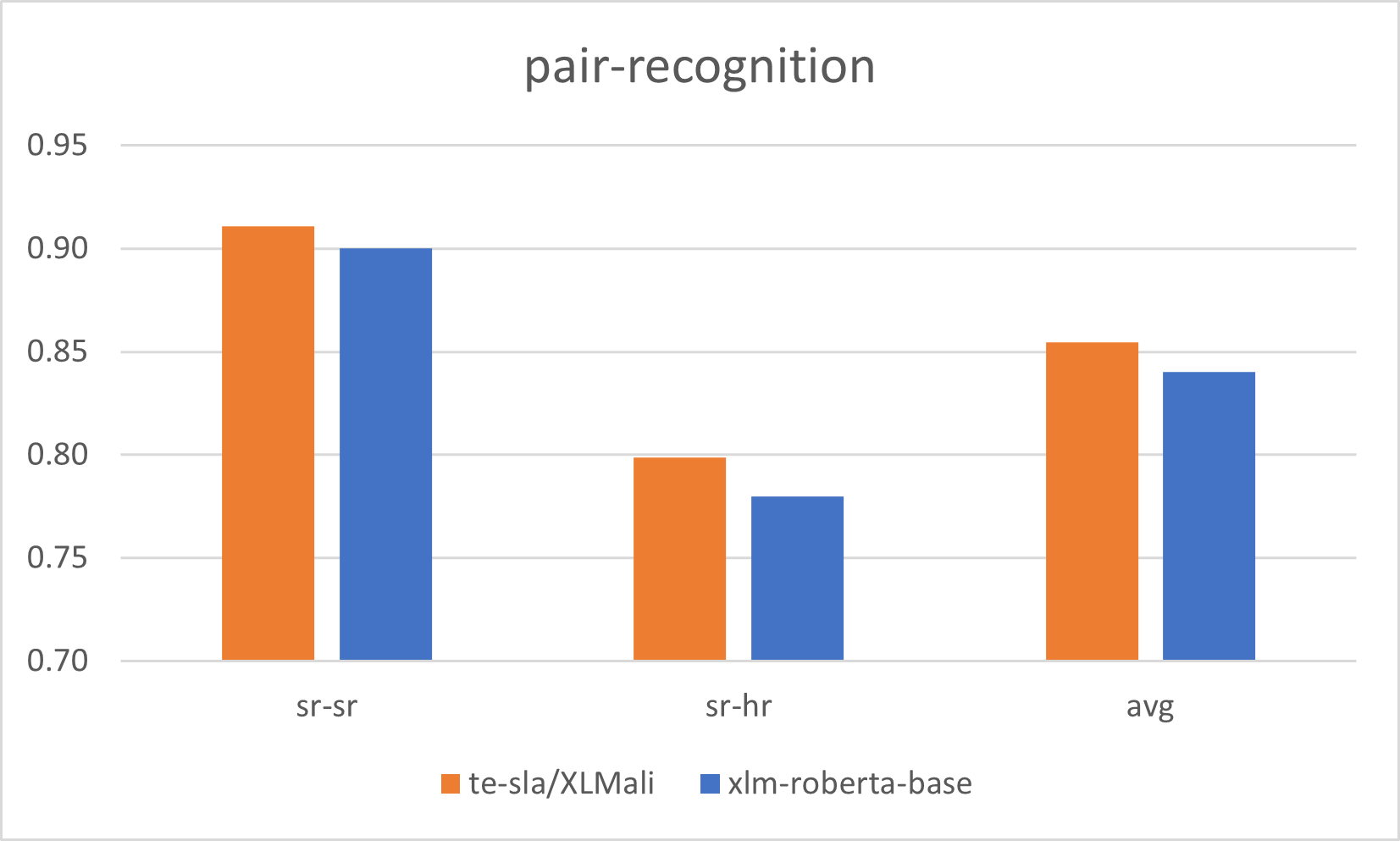

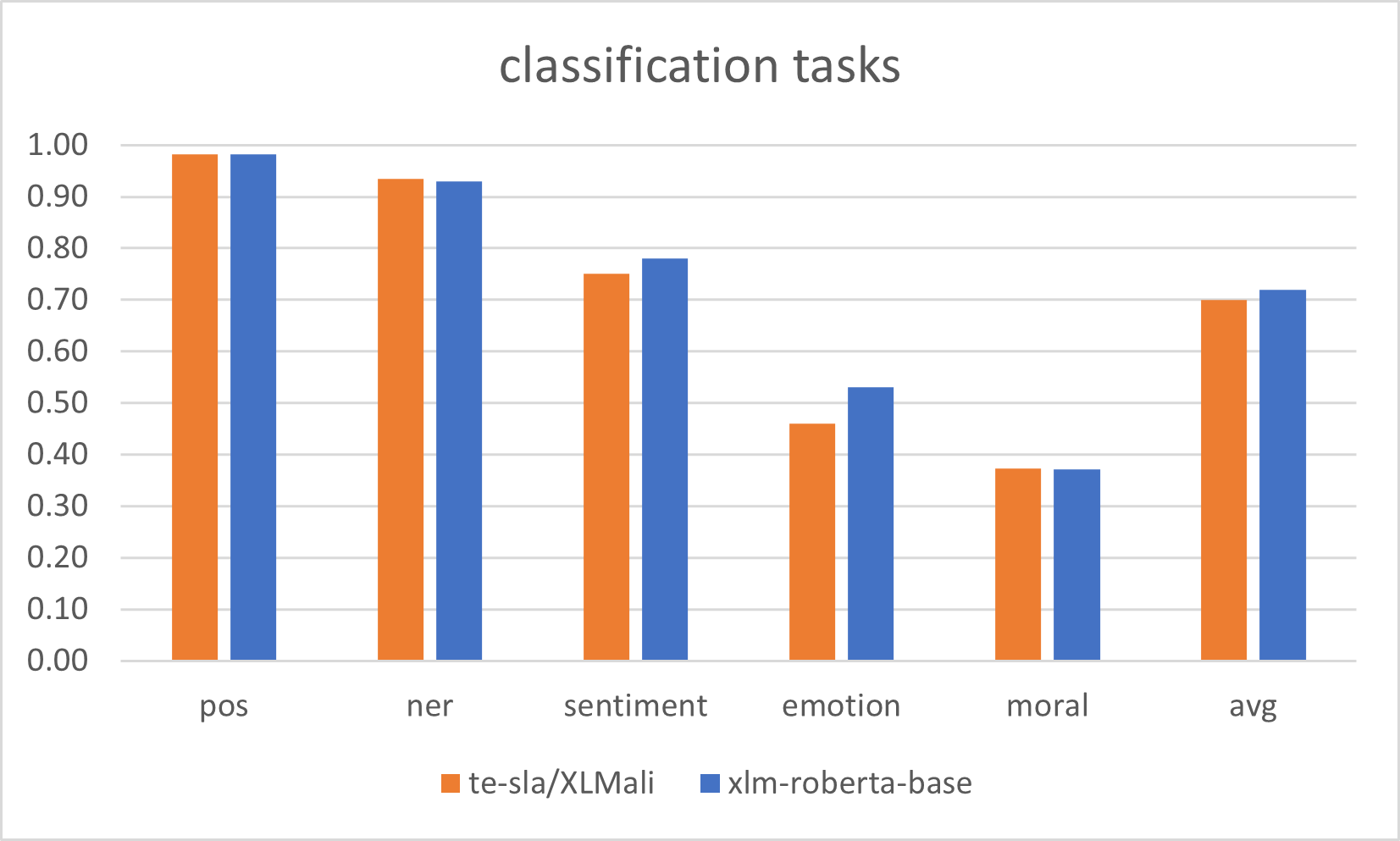

Evaluation results for Serbian XLMR-base models

## Citation

```bibtex
@incollection{skoric2025:juznoslovenskijezici,
  author    = {Škorić, Mihailo and Petalinkar, Saša},
  orcid     = {0000-0003-4811-8692 and 0009-0007-9664-3594},
  title     = {Quality Textual Corpora and New South Slavic Language Models},
  license   = {https://creativecommons.org/licenses/by/4.0/},
  booktitle = {Proceedings of the International Conference South Slavic Languages in the Digital Environment JuDig : Thematic Collection of Papers},
  editor    = {Moskovljević Popović, Jasmina and Stanković, Ranka},
  isbn      = {978-86-6153-791-2},
  series    = {South Slavic Languages in the Digital Environment JuDig},
  publisher = {University of Belgrade — Faculty of Philology},
  address   = {Belgrade},
  year      = {2025},
  volume    = {1},
  pages     = {337--348},
  note      = {19},
  doi       = {10.18485/judig.2025.1.ch19},
  doiurl    = {http://doi.fil.bg.ac.rs/volume.php?pt=eb_ser&issue=judig-2025-1&i=19},
  url       = {http://doi.fil.bg.ac.rs/pdf/eb_ser/judig/2025-1/judig-2025-1-ch19.pdf}
}
```


This research was supported by the Science Fund of the Republic of Serbia, #7276, Text Embeddings - Serbian Language Applications - TESLA.