Upload CADForge judge evidence docs

Browse files- docs/best-example-project.md +374 -0

- docs/brainstorm/00-hackathon-readout.md +75 -0

- docs/brainstorm/01-idea-scorecard.md +59 -0

- docs/brainstorm/02-recommended-idea-regulatory-dossier-control-room.md +272 -0

- docs/brainstorm/03-rapid-build-plan.md +164 -0

- docs/brainstorm/04-physics-design-environments.md +258 -0

- docs/brainstorm/05-mechforge-rendering-stack.md +85 -0

- docs/brainstorm/06-production-simulation-stack.md +207 -0

- docs/brainstorm/07-mechforge-domain-choice.md +169 -0

- docs/brainstorm/08-agentic-3d-engineering-environment.md +249 -0

- docs/brainstorm/09-cad-rlve-structural-household-parts.md +472 -0

- docs/brainstorm/10-cadforge-rlve-environment.md +564 -0

- docs/brainstorm/11-reference-model-reward-pipeline.md +192 -0

- docs/brainstorm/12-markus-chair-scope-grpo-rlve.md +161 -0

- docs/brainstorm/13-markus-chair-cadquery-grpo-rlve-plan.md +799 -0

- docs/brainstorm/14-cadquery-sft-grpo-rlve-training-plan.md +295 -0

- docs/brainstorm/15-cadquery-agentic-traces-sft-grpo-plan.md +246 -0

- docs/brainstorm/16-tonight-execution-plan.md +140 -0

- docs/brainstorm/17-cadquery-reward-functions-deep-dive.md +272 -0

- docs/brainstorm/18-how-sft-and-grpo-data-works.md +192 -0

- docs/brainstorm/19-qwen35-2b-9b-cadforge-sft-grpo-runpod-plan.md +224 -0

- docs/brainstorm/20-cadforge-qwen-training-runbook.md +380 -0

- docs/cadforge-openenv-project-report.md +1 -1

- docs/cadforge-submission-checklist.md +71 -0

- docs/competiton-round1/COMPETITION_REQUIREMENTS.md +69 -0

- docs/competiton-round1/inference-script-example.md +189 -0

- docs/competiton-round1/objective.md +581 -0

- docs/competiton-round1/pre-vaidationscript-example.md +185 -0

- docs/detailed-blog/cadforge-detailed-blog.md +1 -1

- docs/doc-edit-game-v2.md +149 -0

- docs/docs-guide.md +1 -0

- docs/final-postmortem-round1.md +240 -0

- docs/hackathon_help_guide.md +425 -0

- docs/judging_criteria.md +166 -0

- docs/project-setup.md +3 -0

- docs/round1-corrections.md +32 -0

docs/best-example-project.md

ADDED

|

@@ -0,0 +1,374 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

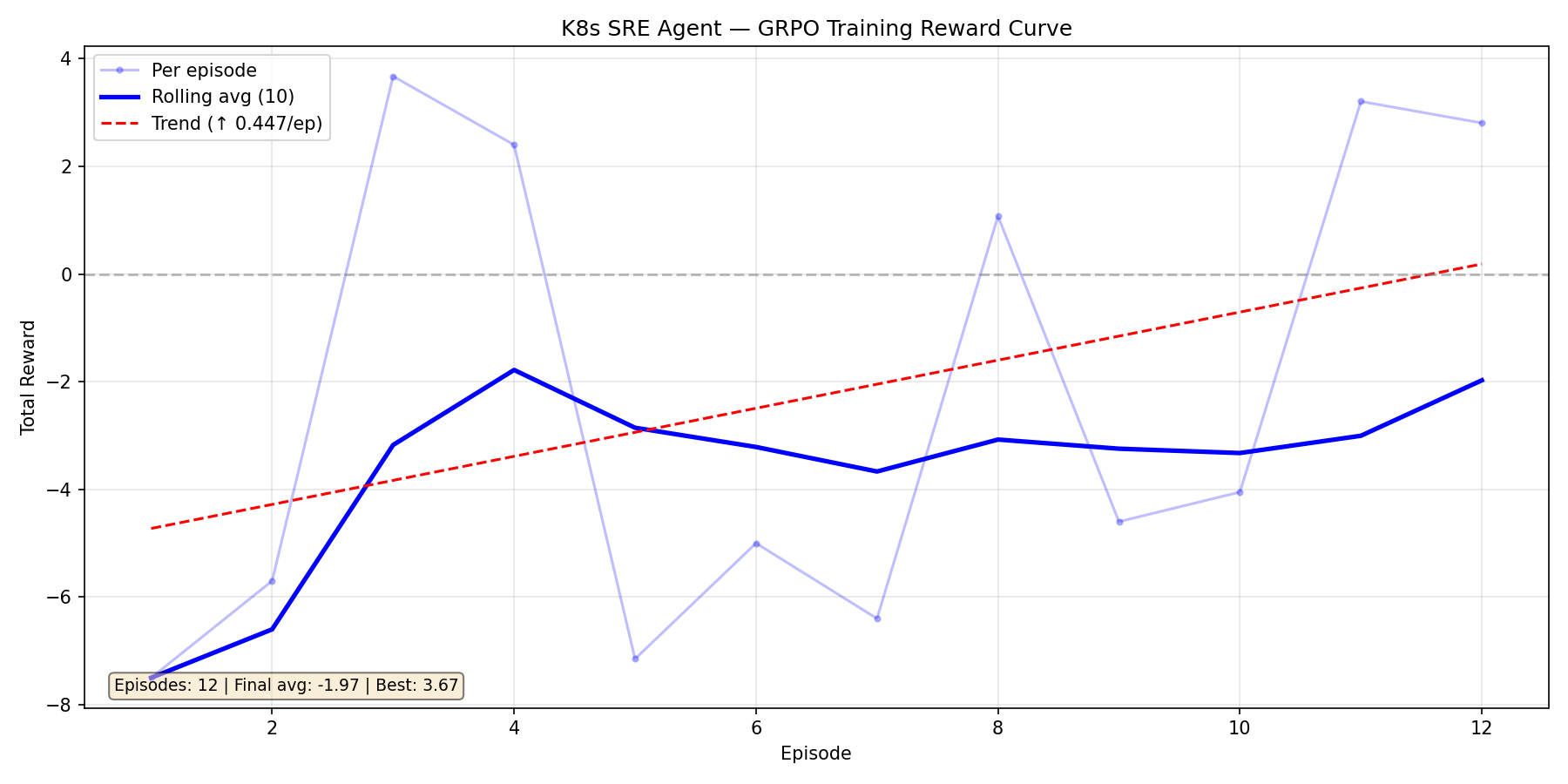

|

|

|

|

|

|

|

|

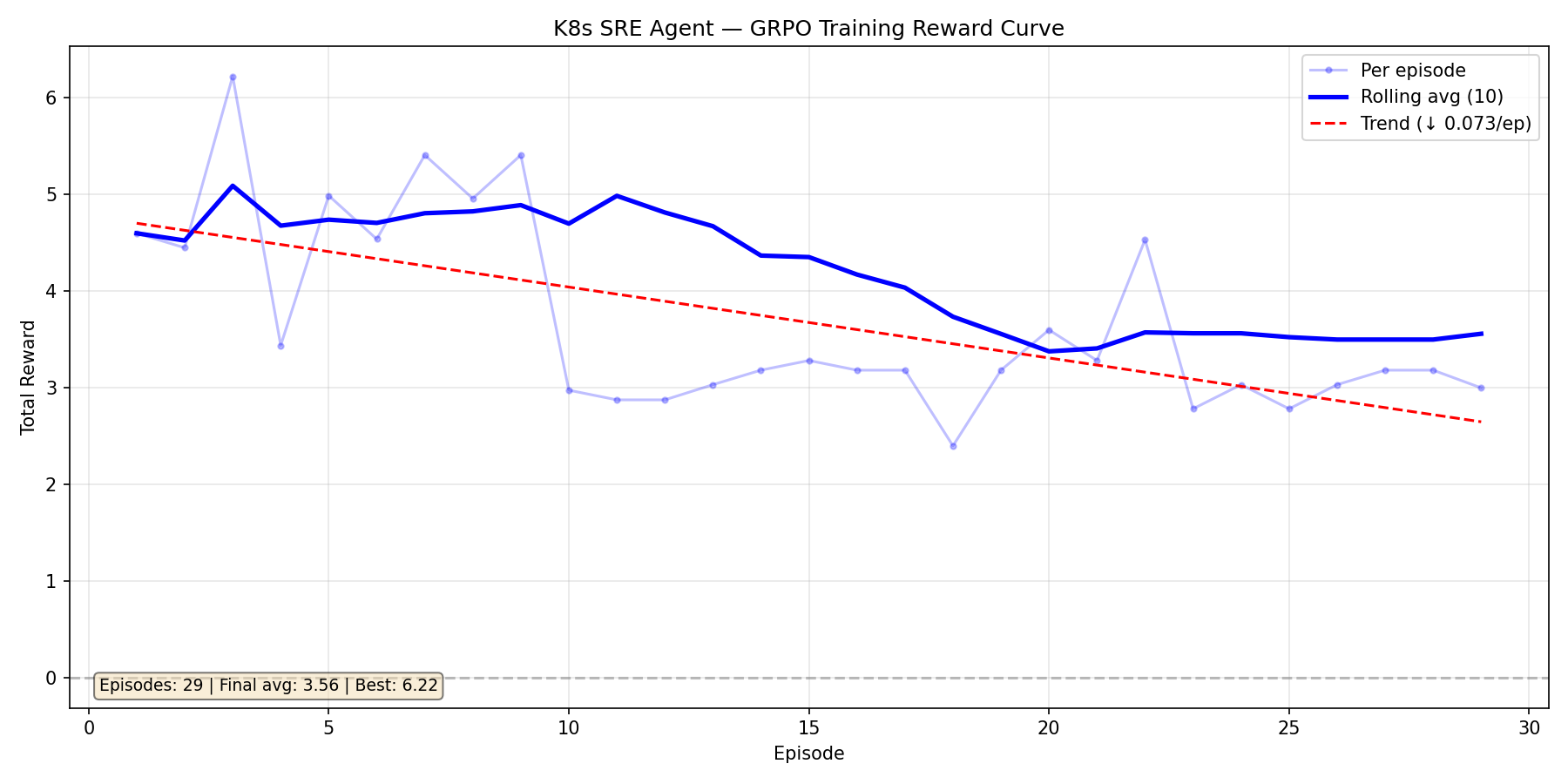

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

https://github.com/sid-rp/kube-sre-gym

|

| 2 |

+

|

| 3 |

+

|

| 4 |

+

---

|

| 5 |

+

title: Kube SRE Gym

|

| 6 |

+

emoji: 🔧

|

| 7 |

+

colorFrom: red

|

| 8 |

+

colorTo: yellow

|

| 9 |

+

sdk: docker

|

| 10 |

+

pinned: false

|

| 11 |

+

app_port: 8000

|

| 12 |

+

base_path: /web

|

| 13 |

+

tags:

|

| 14 |

+

- openenv

|

| 15 |

+

---

|

| 16 |

+

|

| 17 |

+

# Kube SRE Gym

|

| 18 |

+

|

| 19 |

+

### Can a 0.6B model learn to be an on-call SRE — from scratch?

|

| 20 |

+

|

| 21 |

+

We gave a tiny language model a pager, a live Kubernetes cluster, and zero knowledge of what a pod even is. No pre-training on DevOps docs. No few-shot examples. Just a PagerDuty alert and a `kubectl` prompt.

|

| 22 |

+

|

| 23 |

+

Within 8 episodes, it learned to discover namespaces, read pod statuses, identify OOMKills from CrashLoopBackOffs, and apply the correct fix. By episode 4, it was resolving incidents faster than our hand-written baselines.

|

| 24 |

+

|

| 25 |

+

**This is Kube SRE Gym** — a self-improving environment where an RL agent learns to diagnose and fix real production Kubernetes failures through adversarial self-play, curriculum-driven difficulty, and GRPO.

|

| 26 |

+

|

| 27 |

+

> **1st Place, OpenEnv Hackathon** (PyTorch + Cerebral Valley, $15K prize) | Built with [OpenEnv v0.2.1](https://github.com/meta-pytorch/OpenEnv/tree/v0.2.1) | Deployed on [HF Spaces](https://huggingface.co/spaces/openenv-community/kube-sre-gym) | Training via [HF TRL](https://github.com/huggingface/trl) in [Colab](kube_sre_gym_colab.ipynb)

|

| 28 |

+

|

| 29 |

+

[](https://cerebralvalley.ai/e/openenv-hackathon-sf/hackathon/gallery)

|

| 30 |

+

|

| 31 |

+

---

|

| 32 |

+

|

| 33 |

+

## The Story: From Blind to On-Call

|

| 34 |

+

|

| 35 |

+

### Act 1: The Cold Start

|

| 36 |

+

|

| 37 |

+

Episode 1. The agent receives its first alert: *"CRITICAL: payment-gateway pods OOMKilled in payments namespace."*

|

| 38 |

+

|

| 39 |

+

It has never seen Kubernetes before. It doesn't know what namespaces are, what pods look like, or that `kubectl` even exists. It tries random commands. Everything fails. Reward: **-2.0**.

|

| 40 |

+

|

| 41 |

+

### Act 2: First Light

|

| 42 |

+

|

| 43 |

+

Episode 4. Something clicks. The agent discovers `kubectl get pods -A` — a single command that reveals the entire cluster. It sees `OOMKilled` in the STATUS column. It connects this to the alert. It runs `kubectl set resources deployment/payment-gateway --limits=memory=128Mi -n payments`.

|

| 44 |

+

|

| 45 |

+

The pod restarts. The health check passes. The LLM judge confirms resolution. Reward: **+3.95**.

|

| 46 |

+

|

| 47 |

+

### Act 3: The Environment Fights Back

|

| 48 |

+

|

| 49 |

+

As the agent masters simple faults, the **Adversarial Designer** (Claude) notices. It starts creating compound incidents — an OOMKill in `payments` *and* a bad image in `frontend` simultaneously. Red herrings appear. The agent must learn to triage, not just react.

|

| 50 |

+

|

| 51 |

+

The **Curriculum Controller** tracks per-fault-type mastery and escalates: warmup → beginner → intermediate → advanced → expert. The training distribution adapts in real-time. No scenario is ever repeated.

|

| 52 |

+

|

| 53 |

+

### Act 4: The Environment Improves Itself

|

| 54 |

+

|

| 55 |

+

Here's what made this project different from what we planned: **the environment itself had bugs that training exposed.**

|

| 56 |

+

|

| 57 |

+

During training, we discovered our kubectl command parser only accepted `deployment/name` format (with a slash). The model kept sending perfectly valid `kubectl scale deployment frontend-cache --replicas=1` — and the environment rejected it every time. The model was right. Our environment was wrong.

|

| 58 |

+

|

| 59 |

+

We also found the LLM judge was truncating cluster snapshots at 2000 chars, cutting off pods alphabetically after `payment-*`. And a race condition between health checks and judge API calls was causing false negatives — pods would appear healthy during the health check but unhealthy by the time the judge snapshot ran.

|

| 60 |

+

|

| 61 |

+

**The agent's failures taught us to fix the environment.** This is the self-improvement loop we didn't expect — not just the model getting better, but the training infrastructure co-evolving with it.

|

| 62 |

+

|

| 63 |

+

---

|

| 64 |

+

|

| 65 |

+

## Problem Statements Addressed

|

| 66 |

+

|

| 67 |

+

### Primary: Statement 4 — Self-Improvement

|

| 68 |

+

|

| 69 |

+

Kube SRE Gym is an environment where the agent **generates its own challenges, escalates difficulty, and improves through adaptive curricula** — exactly the recursive skill amplification described in Statement 4.

|

| 70 |

+

|

| 71 |

+

- **Adversarial self-play**: Claude designs incidents that target the agent's tracked weaknesses

|

| 72 |

+

- **Automatic curriculum**: Difficulty escalates as per-fault-type mastery improves (warmup → beginner → intermediate → advanced → expert)

|

| 73 |

+

- **No manual authoring**: The training distribution adapts as the agent learns — infinite novel scenarios

|

| 74 |

+

- **Co-evolutionary improvement**: Training runs exposed environment bugs, making the platform itself better

|

| 75 |

+

|

| 76 |

+

### Secondary: Statement 3.1 — World Modeling / Professional Tasks

|

| 77 |

+

|

| 78 |

+

The agent interacts with **real Kubernetes tools and APIs** — not mocked responses or shortcuts. It must maintain internal state across multi-step kubectl workflows and reason about causal effects of its actions on a live cluster.

|

| 79 |

+

|

| 80 |

+

- **Real tool interaction**: Every `kubectl` command executes against a live GKE cluster

|

| 81 |

+

- **Multi-step workflows**: Triage → investigate → fix → verify, with no shortcuts

|

| 82 |

+

- **Persistent world state**: Pod restarts, OOM events, and cascading failures are real K8s events

|

| 83 |

+

|

| 84 |

+

### Partner Sub-Theme: Snorkel AI — Simulated Experts-in-the-Loop

|

| 85 |

+

|

| 86 |

+

The LLM judge uses **three expert personas** (Junior, Senior, Principal) with progressively stricter evaluation criteria, simulating interaction with subject-matter experts whose requirements change as the agent improves:

|

| 87 |

+

|

| 88 |

+

- **Junior**: Lenient scoring, partial credit, provides hints

|

| 89 |

+

- **Senior**: Standard SRE expectations, rewards systematic diagnosis

|

| 90 |

+

- **Principal**: High standards, penalizes inefficiency, rewards elegant fixes

|

| 91 |

+

|

| 92 |

+

---

|

| 93 |

+

|

| 94 |

+

## How It Works

|

| 95 |

+

|

| 96 |

+

```

|

| 97 |

+

┌─────────────────────────────────────────────────────────────────────┐

|

| 98 |

+

│ SELF-IMPROVING LOOP │

|

| 99 |

+

│ │

|

| 100 |

+

│ ┌──────────┐ ┌───────────┐ ┌──────────┐ ┌────────────┐ │

|

| 101 |

+

│ │Adversarial│───►│ Real GKE │───►│ Agent │───►│ LLM Judge │ │

|

| 102 |

+

│ │ Designer │ │ Cluster │ │(Qwen 1.7B│ │(Claude/ │ │

|

| 103 |

+

│ │(Claude) │ │ │ │ + LoRA) │ │ Qwen 14B) │ │

|

| 104 |

+

│ └─────▲─────┘ └────────────┘ └────┬─────┘ └─────┬──────┘ │

|

| 105 |

+

│ │ │ │ │

|

| 106 |

+

│ │ ┌──────────────┐ │ reward │ │

|

| 107 |

+

│ │ │ Curriculum │◄───────┴────────────────┘ │

|

| 108 |

+

│ └─────────│ Controller │ │

|

| 109 |

+

│ weak spots │ (mastery │──► GRPO gradient update │

|

| 110 |

+

│ & difficulty │ tracking) │ (TRL + vLLM on H100) │

|

| 111 |

+

│ └──────────────┘ │

|

| 112 |

+

└─────────────────────────────────────────────────────────────────────┘

|

| 113 |

+

```

|

| 114 |

+

|

| 115 |

+

### The Loop

|

| 116 |

+

|

| 117 |

+

1. **Adversarial Designer** (Claude) creates targeted incidents based on the agent's weak spots — single faults for warmup, multi-fault cascading failures for harder tiers

|

| 118 |

+

2. **Fault Injection** executes real `kubectl` commands against a live GKE cluster (set memory to 4Mi, inject bad images, corrupt env vars, scale to zero)

|

| 119 |

+

3. **Agent** (Qwen3-1.7B + LoRA) receives a PagerDuty-style alert and must diagnose + fix using only kubectl commands — no hints about cluster topology

|

| 120 |

+

4. **LLM Judge** scores each action for SRE workflow correctness (triage → investigate → fix → verify) and verifies resolution by checking actual cluster state

|

| 121 |

+

5. **Curriculum Controller** tracks per-fault-type mastery and escalates difficulty — the agent gets harder scenarios as it improves

|

| 122 |

+

6. **GRPO** computes advantages across 8 parallel rollouts and updates the policy — the agent gets better at fixing incidents it previously failed

|

| 123 |

+

|

| 124 |

+

### What Makes This Different

|

| 125 |

+

|

| 126 |

+

- **Real cluster, not a simulator** — kubectl commands execute against live GKE pods. OOMKills, CrashLoopBackOffs, and ImagePullBackOffs are real Kubernetes events

|

| 127 |

+

- **Self-generating scenarios** — the adversarial designer creates new incident types targeting the agent's weaknesses, so the training distribution adapts as the agent learns

|

| 128 |

+

- **Multi-layer verification** — programmatic health checks (expected pod count, restart tracking, OOM detection) + LLM judge verification prevents false resolution

|

| 129 |

+

- **No hardcoded knowledge** — the agent prompt contains zero information about cluster topology, namespace names, or deployment details. It must discover everything via `kubectl get pods -A`

|

| 130 |

+

- **Environment co-evolution** — training revealed bugs in our own infrastructure, making the platform better alongside the agent

|

| 131 |

+

|

| 132 |

+

---

|

| 133 |

+

|

| 134 |

+

## Architecture

|

| 135 |

+

|

| 136 |

+

```

|

| 137 |

+

H100 GPU (80GB) GKE Cluster (3 namespaces)

|

| 138 |

+

┌──────────────────────────────────┐ ┌─────────────────────────┐

|

| 139 |

+

│ │ │ payments/ │

|

| 140 |

+

│ OpenEnv Server :8000 │ K8s API │ payment-api (Flask) │

|

| 141 |

+

│ ├─ Environment (reset/step) │◄────────►│ payment-gateway │

|

| 142 |

+

│ ├─ Fault Injector │ │ payment-worker │

|

| 143 |

+

│ ├─ Curriculum Controller │ │ │

|

| 144 |

+

│ ├─ Adversarial Designer ──────────►Claude │ frontend/ │

|

| 145 |

+

│ └─ LLM Judge ─────────────────────►Claude │ web-app (nginx) │

|

| 146 |

+

│ │ │ frontend-cache │

|

| 147 |

+

│ GRPO Trainer (TRL 0.29.0) │ │ │

|

| 148 |

+

│ ├─ Qwen3-1.7B + LoRA (BF16) │ │ auth/ │

|

| 149 |

+

│ ├─ vLLM colocate (inference) │ │ auth-service │

|

| 150 |

+

│ └─ 8 rollouts × grad_accum=8 │ └─────────────────────────┘

|

| 151 |

+

│ │

|

| 152 |

+

└──────────────────────────────────┘

|

| 153 |

+

```

|

| 154 |

+

|

| 155 |

+

## Failure Types

|

| 156 |

+

|

| 157 |

+

| Type | What Gets Injected | What Agent Must Do |

|

| 158 |

+

|------|--------------------|---------------------|

|

| 159 |

+

| `oom_kill` | Memory limit set to 4Mi | Increase to 128Mi via `kubectl set resources` |

|

| 160 |

+

| `crashloop` | Container command set to `exit 1` | Remove bad command via `kubectl patch` |

|

| 161 |

+

| `image_pull` | Image set to `nginx:nonexistent-tag-99999` | Fix image tag via `kubectl set image` |

|

| 162 |

+

| `bad_config` | DATABASE_URL pointed to `wrong-host.invalid` | Correct env var via `kubectl set env` |

|

| 163 |

+

| `scale_zero` | Replicas set to 0 | Scale back up via `kubectl scale` |

|

| 164 |

+

| `liveness_probe` | Probe path set to `/nonexistent` | Fix probe via `kubectl patch` |

|

| 165 |

+

| `multi-fault` | 2-3 faults across different namespaces | Find and fix ALL faults |

|

| 166 |

+

|

| 167 |

+

## Training Signal

|

| 168 |

+

|

| 169 |

+

The reward function has multiple layers to ensure clean GRPO signal:

|

| 170 |

+

|

| 171 |

+

- **Per-step LLM judge score** (-1.0 to +1.0) — evaluates SRE workflow quality (phase-aware: triage, investigate, fix, verify)

|

| 172 |

+

- **Repeat penalty** — -0.15 per repeated command (teaches exploration over repetition)

|

| 173 |

+

- **Resolution bonus** — +1.0 to +5.0 for confirmed fixes (efficiency-scaled: faster fixes get higher bonuses)

|

| 174 |

+

- **Timeout penalty** — failed episodes wiped to net -2.0 total reward

|

| 175 |

+

- **Judge verification** — LLM confirms fix is real by reviewing cluster state + action history

|

| 176 |

+

- **Phase-order bonus** — +0.2 for following correct SRE workflow, -0.3 for skipping phases

|

| 177 |

+

|

| 178 |

+

This produces clear separation: successful episodes score +3 to +8, failed episodes score -2.0. GRPO needs this variance to compute meaningful advantages.

|

| 179 |

+

|

| 180 |

+

---

|

| 181 |

+

|

| 182 |

+

## Results

|

| 183 |

+

|

| 184 |

+

### Training Run 1: Qwen2.5-1.5B — The Cold Start

|

| 185 |

+

|

| 186 |

+

|

| 187 |

+

|

| 188 |

+

Our first attempt. 12 episodes, massive variance swinging between -7.5 and +3.7. The upward trend (+0.447/ep) was encouraging — the model *was* learning — but the signal was too noisy. We traced this to **environment bugs**: our command parser rejected valid kubectl syntax, the error penalty override was masking real progress, and the judge was truncating cluster snapshots.

|

| 189 |

+

|

| 190 |

+

The model was fighting two battles: learning Kubernetes AND working around our broken environment.

|

| 191 |

+

|

| 192 |

+

### Training Run 2: Qwen3-1.7B — Too Much Reward, Too Soon

|

| 193 |

+

|

| 194 |

+

|

| 195 |

+

|

| 196 |

+

After fixing the environment bugs, we switched to Qwen3-1.7B. It started strong (avg ~5.0) but the reward signal was *too generous* — the model found a plateau at 3.0-3.5 and stopped improving. The slight downward trend (-0.073/ep) over 29 episodes told us the curriculum wasn't pushing hard enough.

|

| 197 |

+

|

| 198 |

+

This run taught us that **a good environment needs to fight back**. We tightened the reward function, added repeat-command penalties, and activated adversarial mode.

|

| 199 |

+

|

| 200 |

+

### Training Run 3: Qwen3-1.7B — Environment Fights Back (Ongoing)

|

| 201 |

+

|

| 202 |

+

Current run with all fixes applied — adversarial scenarios, tighter rewards, repeat-command circuit breaker:

|

| 203 |

+

|

| 204 |

+

| Episode | Reward | Diagnosis | Fix |

|

| 205 |

+

|---------|--------|-----------|-----|

|

| 206 |

+

| 1 | +1.80 | 0.30 | -0.10 |

|

| 207 |

+

| 2 | +5.38 | 0.30 | +0.10 |

|

| 208 |

+

| 3 | -2.50 | 0.70 | 0.00 |

|

| 209 |

+

| 4 | **+6.58** | 0.70 | -0.60 |

|

| 210 |

+

| 5 | +5.45 | 0.70 | 0.00 |

|

| 211 |

+

| 6 | -2.00 | 0.55 | -0.60 |

|

| 212 |

+

| 7 | **+6.79** | 0.70 | +0.50 |

|

| 213 |

+

| 8 | +6.35 | 0.20 | +0.40 |

|

| 214 |

+

|

| 215 |

+

**Mean: 3.48 | Best: 6.79** — with real adversarial difficulty. The high-variance episodes (ep3, ep6 are negatives; ep4, ep7 are +6.5) show GRPO is getting the signal variance it needs to compute meaningful advantages.

|

| 216 |

+

|

| 217 |

+

### What the agent learned (from reward signal alone)

|

| 218 |

+

|

| 219 |

+

1. Run `kubectl get pods -A` to discover cluster topology

|

| 220 |

+

2. Identify fault types from pod STATUS column (OOMKilled, ImagePullBackOff, CrashLoopBackOff)

|

| 221 |

+

3. Map fault types to correct fix commands (`set resources`, `set image`, `patch`, `scale`)

|

| 222 |

+

4. Check ALL namespaces after each fix — there may be multiple faults

|

| 223 |

+

5. Never repeat a failed command — try a different approach

|

| 224 |

+

|

| 225 |

+

### What we learned (from the agent's failures)

|

| 226 |

+

|

| 227 |

+

1. Our command parser was too strict — valid kubectl syntax was being rejected

|

| 228 |

+

2. Judge snapshot truncation hid pods alphabetically after `payment-*`

|

| 229 |

+

3. Error penalty override was masking real progress with false negatives

|

| 230 |

+

4. Too-generous rewards cause plateaus — the environment must fight back

|

| 231 |

+

5. The environment needs to evolve alongside the agent — static environments miss bugs

|

| 232 |

+

|

| 233 |

+

---

|

| 234 |

+

|

| 235 |

+

## Training with HF TRL (Colab)

|

| 236 |

+

|

| 237 |

+

A complete training notebook is provided at [`kube_sre_gym_colab.ipynb`](kube_sre_gym_colab.ipynb) using **HF TRL's GRPO** implementation. The notebook covers:

|

| 238 |

+

|

| 239 |

+

1. Connect to the OpenEnv server on HF Spaces

|

| 240 |

+

2. Configure GRPO training with TRL (`GRPOConfig`, `GRPOTrainer`)

|

| 241 |

+

3. Run training episodes against the live environment

|

| 242 |

+

4. Save checkpoints to HuggingFace Hub

|

| 243 |

+

|

| 244 |

+

Training uses TRL's experimental OpenEnv integration (`trl.experimental.openenv.generate_rollout_completions`) for seamless environment-trainer communication.

|

| 245 |

+

|

| 246 |

+

## Quick Start

|

| 247 |

+

|

| 248 |

+

```python

|

| 249 |

+

from kube_sre_gym import KubeSreGymAction, KubeSreGymEnv

|

| 250 |

+

|

| 251 |

+

with KubeSreGymEnv(base_url="http://localhost:8000") as client:

|

| 252 |

+

obs = client.reset()

|

| 253 |

+

print(obs.observation.command_output) # PagerDuty alert

|

| 254 |

+

|

| 255 |

+

obs = client.step(KubeSreGymAction(command="kubectl get pods -A"))

|

| 256 |

+

obs = client.step(KubeSreGymAction(command="kubectl describe pod payment-api-xxx -n payments"))

|

| 257 |

+

obs = client.step(KubeSreGymAction(command="fix: kubectl set resources deployment/payment-api --limits=memory=128Mi -n payments"))

|

| 258 |

+

# reward > 0 if fix is correct, episode done

|

| 259 |

+

```

|

| 260 |

+

|

| 261 |

+

## Deployment on HF Spaces

|

| 262 |

+

|

| 263 |

+

The environment is deployed as a Docker-based HF Space using OpenEnv v0.2.1:

|

| 264 |

+

|

| 265 |

+

```bash

|

| 266 |

+

# Dockerfile uses openenv-base image

|

| 267 |

+

FROM ghcr.io/meta-pytorch/openenv-base:latest

|

| 268 |

+

# Serves OpenEnv HTTP/WebSocket API on port 8000

|

| 269 |

+

CMD ["uvicorn", "server.app:app", "--host", "0.0.0.0", "--port", "8000"]

|

| 270 |

+

```

|

| 271 |

+

|

| 272 |

+

Configuration in `openenv.yaml`:

|

| 273 |

+

```yaml

|

| 274 |

+

spec_version: 1

|

| 275 |

+

name: kube_sre_gym

|

| 276 |

+

type: space

|

| 277 |

+

runtime: fastapi

|

| 278 |

+

app: server.app:app

|

| 279 |

+

port: 8000

|

| 280 |

+

```

|

| 281 |

+

|

| 282 |

+

## Training on H100

|

| 283 |

+

|

| 284 |

+

**Install**

|

| 285 |

+

```bash

|

| 286 |

+

git clone https://huggingface.co/spaces/openenv-community/kube-sre-gym && cd kube-sre-gym

|

| 287 |

+

pip install -e ".[train]"

|

| 288 |

+

```

|

| 289 |

+

|

| 290 |

+

**Set credentials**

|

| 291 |

+

```bash

|

| 292 |

+

export K8S_TOKEN=<gke-bearer-token>

|

| 293 |

+

export K8S_ENDPOINT=<gke-api-url>

|

| 294 |

+

export K8S_CA_CERT=<base64-ca-cert>

|

| 295 |

+

export ANTHROPIC_API_KEY=<key> # for adversarial designer + judge

|

| 296 |

+

export HF_TOKEN=<token> # for pushing checkpoints

|

| 297 |

+

```

|

| 298 |

+

|

| 299 |

+

**Launch (2 terminals)**

|

| 300 |

+

```bash

|

| 301 |

+

# Terminal 1: Environment server

|

| 302 |

+

GYM_MODE=adversarial LLM_BACKEND=anthropic uv run server

|

| 303 |

+

|

| 304 |

+

# Terminal 2: GRPO training

|

| 305 |

+

python train.py --vllm-mode colocate --num-generations 8 --max-steps 8 --save-steps 1 \

|

| 306 |

+

--push-to-hub --hub-repo your-name/k8s-sre-agent

|

| 307 |

+

```

|

| 308 |

+

|

| 309 |

+

The curriculum automatically progresses: warmup (single faults) → intermediate (harder faults) → expert (multi-fault adversarial scenarios designed by Claude).

|

| 310 |

+

|

| 311 |

+

## Evaluation

|

| 312 |

+

|

| 313 |

+

```bash

|

| 314 |

+

# Compare base model vs trained checkpoint

|

| 315 |

+

python eval.py

|

| 316 |

+

```

|

| 317 |

+

|

| 318 |

+

Runs both models through random adversarial scenarios and reports resolution rate, average reward, and steps-to-fix.

|

| 319 |

+

|

| 320 |

+

## Configuration

|

| 321 |

+

|

| 322 |

+

| Variable | Description | Default |

|

| 323 |

+

|----------|-------------|---------|

|

| 324 |

+

| `K8S_TOKEN` | Bearer token for GKE | required |

|

| 325 |

+

| `K8S_ENDPOINT` | GKE API endpoint | required |

|

| 326 |

+

| `K8S_CA_CERT` | Base64 CA cert | required |

|

| 327 |

+

| `GYM_MODE` | `standard` or `adversarial` | `standard` |

|

| 328 |

+

| `LLM_BACKEND` | `openai`, `hf`, or `anthropic` | `openai` |

|

| 329 |

+

| `ANTHROPIC_API_KEY` | For adversarial designer + judge | required in adversarial mode |

|

| 330 |

+

| `MAX_STEPS` | Max commands per episode | `16` |

|

| 331 |

+

| `EVAL_MIN_DIFFICULTY` | Override min difficulty for eval | `0.0` |

|

| 332 |

+

|

| 333 |

+

## Project Structure

|

| 334 |

+

|

| 335 |

+

```

|

| 336 |

+

kube-sre-gym/

|

| 337 |

+

├── train.py # GRPO training (TRL 0.29.0 + vLLM colocate)

|

| 338 |

+

├── eval.py # Base vs trained model comparison

|

| 339 |

+

├── kube_sre_gym_colab.ipynb # Google Colab training notebook (HF TRL)

|

| 340 |

+

├── plot_rewards.py # Reward curve visualization

|

| 341 |

+

├── models.py # Action, Observation, State dataclasses

|

| 342 |

+

├── client.py # KubeSreGymEnv sync client

|

| 343 |

+

├── Dockerfile # HF Spaces deployment (OpenEnv base image)

|

| 344 |

+

├── openenv.yaml # OpenEnv v0.2.1 Space config

|

| 345 |

+

├── server/

|

| 346 |

+

│ ├── kube_sre_gym_environment.py # Core env: reset → inject → step → judge → reward

|

| 347 |

+

│ ├── k8s_backend.py # K8s auth, execute, reset, health checks

|

| 348 |

+

│ ├── k8s_commands.py # kubectl command handlers (get/describe/logs/set/patch)

|

| 349 |

+

│ ├── k8s_injectors.py # Real fault injection via K8s API

|

| 350 |

+

│ ├── adversarial_designer.py # LLM designs multi-step incidents

|

| 351 |

+

│ ├── judge.py # LLMJudge + AdversarialJudge (phase-aware SRE scoring)

|

| 352 |

+

│ ├── curriculum.py # Progressive difficulty + mastery tracking

|

| 353 |

+

│ ├── scenario_generator.py # Fault scenario pool

|

| 354 |

+

│ ├─�� llm_client.py # OpenAI/HF/Anthropic wrapper

|

| 355 |

+

│ ├── constants.py # Cluster topology, healthy state definitions

|

| 356 |

+

│ └── app.py # FastAPI + WebSocket server

|

| 357 |

+

└── sample_app/

|

| 358 |

+

├── namespaces.yaml # payments, frontend, auth

|

| 359 |

+

└── base/ # Healthy deployment manifests

|

| 360 |

+

```

|

| 361 |

+

|

| 362 |

+

## Key Design Decisions

|

| 363 |

+

|

| 364 |

+

1. **Real cluster over simulator** — Simulators can't reproduce the timing, state transitions, and failure modes of real Kubernetes. OOM kills happen when the kernel actually runs out of memory, not when a flag is set.

|

| 365 |

+

|

| 366 |

+

2. **Adversarial self-play** — The designer targets the agent's weaknesses (tracked by curriculum), creating an automatic curriculum that gets harder as the agent improves. No manual scenario authoring needed.

|

| 367 |

+

|

| 368 |

+

3. **Multi-layer resolution check** — Programmatic (expected pod count + restart tracking + OOM detection) + LLM judge verification. This prevents false resolution from OOM-flapping pods or partial fixes in multi-fault scenarios.

|

| 369 |

+

|

| 370 |

+

4. **No topology in prompt** — The agent receives zero information about namespaces, deployment names, or images. It must learn to discover the cluster layout via `kubectl get pods -A`, making the learned policy transferable to any cluster.

|

| 371 |

+

|

| 372 |

+

5. **GRPO over PPO** — GRPO compares multiple rollouts of the same prompt, producing stable advantages without a value function. Better suited for sparse, delayed rewards (most reward comes at episode end).

|

| 373 |

+

|

| 374 |

+

6. **Environment co-evolution** — We intentionally treat environment bugs as part of the story. When training exposed issues in our command parser, judge, and health checks, we fixed them — making the environment better alongside the agent. This is recursive self-improvement at the platform level.

|

docs/brainstorm/00-hackathon-readout.md

ADDED

|

@@ -0,0 +1,75 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# OpenEnv Hackathon Readout

|

| 2 |

+

|

| 3 |

+

Date: 2026-04-24

|

| 4 |

+

|

| 5 |

+

## What The Hackathon Wants

|

| 6 |

+

|

| 7 |

+

The winning submission should be an OpenEnv-compliant environment where an LLM acts step by step, receives programmatic feedback, and measurably improves through RL or RL-style training.

|

| 8 |

+

|

| 9 |

+

The most important judging weights are:

|

| 10 |

+

|

| 11 |

+

| Criterion | Weight | Practical meaning |

|

| 12 |

+

|---|---:|---|

|

| 13 |

+

| Environment innovation | 40% | Novel, challenging, meaningful agent behavior, not a clone of common games or toy tasks. |

|

| 14 |

+

| Storytelling | 30% | A judge should understand the world, the agent, what it learned, and why it matters in 3 to 5 minutes. |

|

| 15 |

+

| Showing improvement | 20% | Reward curves, before/after runs, baseline comparison, actual training evidence. |

|

| 16 |

+

| Reward/training pipeline | 10% | Coherent rubrics, TRL or Unsloth script, reproducible pipeline. |

|

| 17 |

+

|

| 18 |

+

Minimum gates:

|

| 19 |

+

|

| 20 |

+

- Use latest OpenEnv.

|

| 21 |

+

- Hosted Hugging Face Space.

|

| 22 |

+

- OpenEnv-compliant `reset`, `step`, `state`, typed models, `openenv.yaml`.

|

| 23 |

+

- Training script using Unsloth or HF TRL, ideally Colab.

|

| 24 |

+

- Evidence of real training, including reward/loss plots.

|

| 25 |

+

- README with problem, environment, actions, observations, tasks, setup, results.

|

| 26 |

+

|

| 27 |

+

## Strategic Lessons From The Docs

|

| 28 |

+

|

| 29 |

+

1. Pick a task where success can be verified programmatically.

|

| 30 |

+

2. Make the environment ambitious but keep the first curriculum levels easy enough for non-zero reward.

|

| 31 |

+

3. Use multiple reward signals, not one monolithic score.

|

| 32 |

+

4. Build the environment and verifier before training.

|

| 33 |

+

5. Show a before/after behavior difference, not only a training script.

|

| 34 |

+

6. Avoid a static benchmark. Adaptive curriculum and self-play read as much more ambitious.

|

| 35 |

+

7. The story matters almost as much as the engineering.

|

| 36 |

+

|

| 37 |

+

## Lessons From The Prior DocEdit Work

|

| 38 |

+

|

| 39 |

+

The old DocEdit environment passed because it was:

|

| 40 |

+

|

| 41 |

+

- Real-world, not a game.

|

| 42 |

+

- OpenEnv compliant.

|

| 43 |

+

- Lightweight enough for the constraints.

|

| 44 |

+

- Deterministically graded.

|

| 45 |

+

- Easy to explain.

|

| 46 |

+

|

| 47 |

+

The later Qwen SFT + GRPO postmortem proved that document repair can improve with training, but it also exposed a strategic limitation: full-document rewrite policies are probably not the best final design. A stronger next step is a planner/executor setup with structured edit actions and verifier feedback.

|

| 48 |

+

|

| 49 |

+

## Lessons From The Winning Kube SRE Example

|

| 50 |

+

|

| 51 |

+

The winning pattern was not just "Kubernetes environment." It was:

|

| 52 |

+

|

| 53 |

+

- A vivid professional world: a tiny model learns to be on-call.

|

| 54 |

+

- Real or realistic tools.

|

| 55 |

+

- Multi-step investigation and repair.

|

| 56 |

+

- Adaptive curriculum.

|

| 57 |

+

- Adversarial scenario generation.

|

| 58 |

+

- Multi-layer rewards.

|

| 59 |

+

- A story where the agent and environment co-evolve.

|

| 60 |

+

|

| 61 |

+

The key insight to borrow:

|

| 62 |

+

|

| 63 |

+

> The environment should fight back as the agent improves.

|

| 64 |

+

|

| 65 |

+

## Our Target Shape

|

| 66 |

+

|

| 67 |

+

To maximize win probability, the idea should combine:

|

| 68 |

+

|

| 69 |

+

- Theme 2: long-horizon planning, ideally up to 300 actions.

|

| 70 |

+

- Theme 3.1: professional world modeling with realistic tools and persistent state.

|

| 71 |

+

- Theme 4: self-improvement through adaptive scenario generation.

|

| 72 |

+

- Existing leverage from DocEdit so we can build fast.

|

| 73 |

+

|

| 74 |

+

The strongest direction is therefore not "another document editor." It is a long-horizon professional control room where document edits are one part of a larger verified workflow.

|

| 75 |

+

|

docs/brainstorm/01-idea-scorecard.md

ADDED

|

@@ -0,0 +1,59 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Idea Scorecard

|

| 2 |

+

|

| 3 |

+

## Scoring Rubric

|

| 4 |

+

|

| 5 |

+

Scores are out of 10 and weighted roughly by the hackathon criteria.

|

| 6 |

+

|

| 7 |

+

| Field | Meaning |

|

| 8 |

+

|---|---|

|

| 9 |

+

| Innovation | Would judges find the environment fresh and research-worthy? |

|

| 10 |

+

| Story | Can the demo be explained clearly and memorably? |

|

| 11 |

+

| Trainability | Can we show reward improvement in the available time? |

|

| 12 |

+

| Verifiability | Can rewards be objective and hard to game? |

|

| 13 |

+

| Build speed | Can we build a credible OpenEnv environment quickly? |

|

| 14 |

+

|

| 15 |

+

## Candidate Ideas

|

| 16 |

+

|

| 17 |

+

| Rank | Idea | Innovation | Story | Trainability | Verifiability | Build speed | Verdict |

|

| 18 |

+

|---:|---|---:|---:|---:|---:|---:|---|

|

| 19 |

+

| 1 | Regulatory Dossier Control Room | 9 | 9 | 8 | 9 | 8 | Best overall. Uses DocEdit leverage but expands into long-horizon professional world modeling. |

|

| 20 |

+

| 2 | Personal Chief of Staff Simulator | 8 | 9 | 7 | 7 | 6 | Excellent theme fit, but personalization and reward design may get fuzzy. |

|

| 21 |

+

| 3 | Codebase Migration Gym | 7 | 7 | 8 | 9 | 6 | Verifiable with tests, but code agents are crowded and less novel. |

|

| 22 |

+

| 4 | Research Reproduction Lab | 9 | 8 | 5 | 7 | 4 | Very ambitious, likely too hard to build and train under time pressure. |

|

| 23 |

+

| 5 | Multi-Agent Procurement Negotiation | 8 | 8 | 6 | 6 | 5 | Good multi-agent story, but objective grading and RL loop are harder. |

|

| 24 |

+

| 6 | Supply Chain Crisis Planner | 7 | 8 | 7 | 8 | 6 | Solid simulator, but can feel like an operations game if not grounded enough. |

|

| 25 |

+

|

| 26 |

+

## Recommended Winner Candidate

|

| 27 |

+

|

| 28 |

+

Build **Regulatory Dossier Control Room**.

|

| 29 |

+

|

| 30 |

+

One-line pitch:

|

| 31 |

+

|

| 32 |

+

> Train an agent to manage a 300-step regulatory document crisis: inspect a simulated pharma submission, discover scattered inconsistencies, apply precise cross-document edits, validate the dossier, and improve through adversarially generated new compliance failures.

|

| 33 |

+

|

| 34 |

+

Why this is the best fit:

|

| 35 |

+

|

| 36 |

+

- It hits long-horizon planning directly.

|

| 37 |

+

- It is professional and high-value.

|

| 38 |

+

- It has crisp verification via hidden canonical facts and compliance rules.

|

| 39 |

+

- It extends prior DocEdit work instead of restarting from zero.

|

| 40 |

+

- It creates a very strong story: "Can a small model learn to behave like a regulatory operations associate?"

|

| 41 |

+

- It can show training improvement without requiring a real external system like Kubernetes.

|

| 42 |

+

- It can scale from easy 10-step tasks to hard 300-step tasks through curriculum.

|

| 43 |

+

|

| 44 |

+

## Why Not Just Continue DocEdit V2?

|

| 45 |

+

|

| 46 |

+

DocEdit V2 is useful but too narrow for this round's themes. It is mostly local edit application. The judging criteria now heavily reward long-horizon behavior, self-improvement, and world modeling.

|

| 47 |

+

|

| 48 |

+

We should reuse DocEdit-style document generation, corruption, chunking, and grading, but wrap it inside a larger workflow:

|

| 49 |

+

|

| 50 |

+

- Multiple documents.

|

| 51 |

+

- Persistent investigation state.

|

| 52 |

+

- Hidden facts.

|

| 53 |

+

- Cross-document dependencies.

|

| 54 |

+

- Validation loops.

|

| 55 |

+

- Audit notes.

|

| 56 |

+

- Adaptive scenario generator.

|

| 57 |

+

|

| 58 |

+

That gives the old strength a much bigger judging surface.

|

| 59 |

+

|

docs/brainstorm/02-recommended-idea-regulatory-dossier-control-room.md

ADDED

|

@@ -0,0 +1,272 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Recommended Idea: Regulatory Dossier Control Room

|

| 2 |

+

|

| 3 |

+

## Short Pitch

|

| 4 |

+

|

| 5 |

+

**Regulatory Dossier Control Room** is an OpenEnv environment where an LLM acts as a regulatory operations agent during a simulated pharma submission crisis.

|

| 6 |

+

|

| 7 |

+

The agent receives a high-level change request, such as a dosage update, safety warning, manufacturing site change, or adverse-event correction. The change is scattered across a dossier of many interlinked documents: drug label, clinical study report, investigator brochure, patient leaflet, quality summary, cover letter, amendment log, and internal review notes.

|

| 8 |

+

|

| 9 |

+

The agent has up to 300 tool steps to inspect, search, edit, validate, and audit the dossier. Rewards come from objective checks against a hidden consistency graph and regulatory rules.

|

| 10 |

+

|

| 11 |

+

## Why Judges Should Care

|

| 12 |

+

|

| 13 |

+

Real regulatory work is long-horizon, high-stakes, and brutally detail-sensitive. A single inconsistent dosage, date, or contraindication across documents can delay a submission.

|

| 14 |

+

|

| 15 |

+

Current LLMs are good at explaining documents, but they struggle with:

|

| 16 |

+

|

| 17 |

+

- Tracking facts across many files.

|

| 18 |

+

- Applying the same change consistently.

|

| 19 |

+

- Avoiding collateral damage.

|

| 20 |

+

- Remembering decisions over long sessions.

|

| 21 |

+

- Recovering from early mistakes.

|

| 22 |

+

- Knowing when to validate and when to stop.

|

| 23 |

+

|

| 24 |

+

This environment trains exactly that behavior.

|

| 25 |

+

|

| 26 |

+

## Theme Fit

|

| 27 |

+

|

| 28 |

+

Primary theme:

|

| 29 |

+

|

| 30 |

+

- Theme 2: Super long-horizon planning and instruction following.

|

| 31 |

+

|

| 32 |

+

Secondary themes:

|

| 33 |

+

|

| 34 |

+

- Theme 3.1: Professional task world modeling.

|

| 35 |

+

- Theme 4: Self-improvement through adaptive curricula.

|

| 36 |

+

- Theme 5: Wild card, because it turns document editing into a realistic compliance control room.

|

| 37 |

+

|

| 38 |

+

## The 300-Step Task

|

| 39 |

+

|

| 40 |

+

Hard episodes have:

|

| 41 |

+

|

| 42 |

+

- 20 to 60 dossier files.

|

| 43 |

+

- 40 to 150 hidden obligations.

|

| 44 |

+

- 100 to 300 possible action steps.

|

| 45 |

+

- Cross-document dependencies.

|

| 46 |

+

- Red herrings and stale memo fragments.

|

| 47 |

+

- Validation reports that reveal partial but not complete truth.

|

| 48 |

+

|

| 49 |

+

Example hard prompt:

|

| 50 |

+

|

| 51 |

+

> A late safety update changes the maximum daily dose from 40 mg to 30 mg for renal impairment patients, adds a contraindication for severe hepatic impairment, removes an outdated trial endpoint from Study RX-204, and requires all patient-facing materials to use plain-language wording. Update the dossier, preserve unrelated content, and leave an audit trail.

|

| 52 |

+

|

| 53 |

+

The agent must discover that this affects:

|

| 54 |

+

|

| 55 |

+

- Drug label dosage section.

|

| 56 |

+

- Contraindications section.

|

| 57 |

+

- Patient leaflet.

|

| 58 |

+

- Clinical study report summary table.

|

| 59 |

+

- Investigator brochure safety section.

|

| 60 |

+

- Cover letter.

|

| 61 |

+

- Amendment log.

|

| 62 |

+

- Cross-reference table.

|

| 63 |

+

- Internal review checklist.

|

| 64 |

+

|

| 65 |

+

## Action Space

|

| 66 |

+

|

| 67 |

+

Potential actions:

|

| 68 |

+

|

| 69 |

+

```json

|

| 70 |

+

{"tool": "search", "query": "renal impairment 40 mg"}

|

| 71 |

+

{"tool": "open_file", "path": "label/section_4_2_dosage.xml"}

|

| 72 |

+

{"tool": "inspect_window", "path": "csr/rx_204_summary.xml", "start": 120, "length": 40}

|

| 73 |

+

{"tool": "replace_text", "path": "label/section_4_2_dosage.xml", "target": "40 mg", "replacement": "30 mg"}

|

| 74 |

+

{"tool": "patch_section", "path": "patient_leaflet.xml", "section_id": "dose_warning", "content": "..."}

|

| 75 |

+

{"tool": "add_audit_note", "document": "amendment_log.xml", "note": "..."}

|

| 76 |

+

{"tool": "run_validator", "validator": "dose_consistency"}

|

| 77 |

+

{"tool": "commit_episode"}

|

| 78 |

+

```

|

| 79 |

+

|

| 80 |

+

Optional later actions:

|

| 81 |

+

|

| 82 |

+

```json

|

| 83 |

+

{"tool": "assign_subtask", "agent": "safety_reviewer", "objective": "..."}

|

| 84 |

+

{"tool": "resolve_conflict", "fact": "max_daily_dose", "value": "30 mg", "evidence": ["..."]}

|

| 85 |

+

```

|

| 86 |

+

|

| 87 |

+

## Observation Space

|

| 88 |

+

|

| 89 |

+

The agent sees:

|

| 90 |

+

|

| 91 |

+

- Current task brief.

|

| 92 |

+

- Current file/window content.

|

| 93 |

+

- Search results.

|

| 94 |

+

- Known facts discovered so far.

|

| 95 |

+

- Validation warnings.

|

| 96 |

+

- Edit history.

|

| 97 |

+

- Remaining step budget.

|

| 98 |

+

- Reward components from the last action.

|

| 99 |

+

|

| 100 |

+

The agent does not see:

|

| 101 |

+

|

| 102 |

+

- The full hidden canonical answer.

|

| 103 |

+

- All affected files upfront.

|

| 104 |

+

- The complete dependency graph.

|

| 105 |

+

|

| 106 |

+

## Reward Design

|

| 107 |

+

|

| 108 |

+

Use multiple independent reward components:

|

| 109 |

+

|

| 110 |

+

| Reward component | Purpose |

|

| 111 |

+

|---|---|

|

| 112 |

+

| Fact correction reward | Correctly updates canonical facts like dosage, dates, safety claims, study endpoints. |

|

| 113 |

+

| Cross-document consistency reward | Same fact is consistent across all required files. |

|

| 114 |

+

| Coverage reward | Agent discovers and touches all impacted nodes in the hidden dependency graph. |

|

| 115 |

+

| Collateral damage penalty | Penalize changing unrelated text or breaking valid facts. |

|

| 116 |

+

| Audit reward | Correctly records what changed and why. |

|

| 117 |

+

| Validation reward | Reward using validators and resolving their warnings. |

|

| 118 |

+

| Efficiency reward | Encourage completion before 300 steps. |

|

| 119 |

+

| Anti-hacking penalty | Penalize invalid paths, repeated no-ops, format-breaking edits, or validator spam. |

|

| 120 |

+

|

| 121 |

+

Suggested total:

|

| 122 |

+

|

| 123 |

+

```text

|

| 124 |

+

reward =

|

| 125 |

+

delta_fact_score

|

| 126 |

+

+ delta_consistency_score

|

| 127 |

+

+ 0.2 * delta_coverage

|

| 128 |

+

+ 0.1 * audit_score_delta

|

| 129 |

+

+ validator_resolution_bonus

|

| 130 |

+

- collateral_damage_penalty

|

| 131 |

+

- repeat_action_penalty

|

| 132 |

+

- invalid_action_penalty

|

| 133 |

+

```

|

| 134 |

+

|

| 135 |

+

Final success score:

|

| 136 |

+

|

| 137 |

+

```text

|

| 138 |

+

final_score =

|

| 139 |

+

0.35 * fact_accuracy

|

| 140 |

+

+ 0.25 * cross_doc_consistency

|

| 141 |

+

+ 0.15 * affected_file_coverage

|

| 142 |

+

+ 0.10 * audit_quality

|

| 143 |

+

+ 0.10 * structural_validity

|

| 144 |

+

+ 0.05 * efficiency

|

| 145 |

+

- collateral_damage

|

| 146 |

+

```

|

| 147 |

+

|

| 148 |

+

## Self-Improvement Loop

|

| 149 |

+

|

| 150 |

+

The environment includes an **Adversarial Compliance Designer**.

|

| 151 |

+

|

| 152 |

+

It tracks the agent's weaknesses:

|

| 153 |

+

|

| 154 |

+

- Misses patient-facing documents.

|

| 155 |

+

- Fixes label but forgets clinical study report tables.

|

| 156 |

+

- Over-edits unrelated sections.

|

| 157 |

+

- Fails to write audit notes.

|

| 158 |

+

- Repeats search actions.

|

| 159 |

+

- Stops before running validators.

|

| 160 |

+

|

| 161 |

+

Then it generates harder future episodes:

|

| 162 |

+

|

| 163 |

+

- More files.

|

| 164 |

+

- More cross-references.

|

| 165 |

+

- More red herrings.

|

| 166 |

+

- More subtle wording differences.

|

| 167 |

+

- Compound changes.

|

| 168 |

+

- Longer dependency chains.

|

| 169 |

+

|

| 170 |

+

Curriculum levels:

|

| 171 |

+

|

| 172 |

+

| Level | Episode shape | Expected horizon |

|

| 173 |

+

|---|---|---:|

|

| 174 |

+

| 1 | One file, one fact | 5 to 15 steps |

|

| 175 |

+

| 2 | Three files, one fact | 15 to 35 steps |

|

| 176 |

+

| 3 | Ten files, two facts | 35 to 80 steps |

|

| 177 |

+

| 4 | Twenty files, compound update | 80 to 160 steps |

|

| 178 |

+

| 5 | Full dossier crisis with red herrings | 160 to 300 steps |

|

| 179 |

+

|

| 180 |

+

This gives us non-zero reward early, then a path to the 300-step headline.

|

| 181 |

+

|

| 182 |

+

## What We Train

|

| 183 |

+

|

| 184 |

+

Start with a small instruct model and train it to:

|

| 185 |

+

|

| 186 |

+

- Search before editing.

|

| 187 |

+

- Build a working memory of discovered facts.

|

| 188 |

+

- Use validators.

|

| 189 |

+