| code | kind | parsed_code | quality_prob | learning_prob |
|---|---|---|---|---|

# Visualizing Logistic Regression

```

import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('data/', one_hot=True)

trainimg = mnist.train.images

trainlabel = mnist.train.labels

testimg = mnist.te... | github_jupyter | import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('data/', one_hot=True)

trainimg = mnist.train.images

trainlabel = mnist.train.labels

testimg = mnist.test.images

testlabel = mnist.test.label... | 0.676086 | 0.913252 |

# Final Project Submission

* Student name: `Reno Vieira Neto`

* Student pace: `self paced`

* Scheduled project review date/time: `Fri Oct 15, 2021 3pm – 3:45pm (PDT)`

* Instructor name: `James Irving`

* Blog post URL: https://renoneto.github.io/using_streamlit

#### This project originated the [following app](https://... | github_jupyter | import pandas as pd

import numpy as np

import seaborn as sns

import matplotlib.pyplot as plt

import re

import time

from surprise import Reader, Dataset, dump

from surprise.model_selection import cross_validate, GridSearchCV

from surprise.prediction_algorithms import KNNBasic, KNNBaseline, SVD, SVDpp

from surprise.accur... | 0.662906 | 0.885829 |

```

#all_slow

#export

from fastai.basics import *

#hide

from nbdev.showdoc import *

#default_exp callback.tensorboard

```

# Tensorboard

> Integration with [tensorboard](https://www.tensorflow.org/tensorboard)

First things first: you need to install tensorboard with

```

pip install tensorboard

```

Then launch tensorbo... | github_jupyter | #all_slow

#export

from fastai.basics import *

#hide

from nbdev.showdoc import *

#default_exp callback.tensorboard

pip install tensorboard

in your terminal. You can change the logdir as long as it matches the `log_dir` you pass to `TensorBoardCallback` (default is `runs` in the working directory).

## Tensorboard Embe... | 0.718496 | 0.86511 |

<a href="https://colab.research.google.com/github/Victoooooor/SimpleJobs/blob/main/movenet.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

```

#@title

!pip install -q imageio

!pip install -q opencv-python

!pip install -q git+https://github.com/tenso... | github_jupyter | #@title

!pip install -q imageio

!pip install -q opencv-python

!pip install -q git+https://github.com/tensorflow/docs

#@title

import tensorflow as tf

import tensorflow_hub as hub

from tensorflow_docs.vis import embed

import numpy as np

import cv2

import os

# Import matplotlib libraries

from matplotlib import pyplot as p... | 0.760651 | 0.820001 |

# Getting started with Captum Insights: a simple model on CIFAR10 dataset

Demonstrates how to use Captum Insights embedded in a notebook to debug a CIFAR model and test samples. This is a slight modification of the CIFAR_TorchVision_Interpret notebook.

More details about the model can be found here: https://pytorch.o... | github_jupyter | import os

import torch

import torch.nn as nn

import torchvision

import torchvision.transforms as transforms

from captum.insights import AttributionVisualizer, Batch

from captum.insights.features import ImageFeature

def get_classes():

classes = [

"Plane",

"Car",

"Bird",

"Cat",

... | 0.905044 | 0.978935 |

# Loading Image Data

So far we've been working with fairly artificial datasets that you wouldn't typically be using in real projects. Instead, you'll likely be dealing with full-sized images like you'd get from smart phone cameras. In this notebook, we'll look at how to load images and use them to train neural network... | github_jupyter | %matplotlib inline

%config InlineBackend.figure_format = 'retina'

import matplotlib.pyplot as plt

import torch

from torchvision import datasets, transforms

import helper

dataset = datasets.ImageFolder('path/to/data', transform=transform)

root/dog/xxx.png

root/dog/xxy.png

root/dog/xxz.png

root/cat/123.png

root/cat... | 0.829561 | 0.991161 |
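The `root/<class>/<file>` layout shown above is what lets a folder-based dataset infer labels from directory names. The following is a minimal standard-library sketch of that idea, not torchvision's implementation. The helper `scan_image_folder` and the temporary file names are hypothetical and introduced only for illustration.

```python
import os
import tempfile


def scan_image_folder(root):
    """Return (class_to_idx, samples) for a root/<class>/<file> layout.

    Hypothetical helper: class names are the sorted subdirectory names,
    and each file is paired with the index of its parent directory.
    """
    classes = sorted(
        d for d in os.listdir(root) if os.path.isdir(os.path.join(root, d))
    )
    class_to_idx = {name: i for i, name in enumerate(classes)}
    samples = []
    for name in classes:
        class_dir = os.path.join(root, name)
        for fname in sorted(os.listdir(class_dir)):
            samples.append((os.path.join(class_dir, fname), class_to_idx[name]))
    return class_to_idx, samples


# Build a throwaway directory tree that mirrors the layout shown above.
with tempfile.TemporaryDirectory() as root:
    for rel in ["dog/xxx.png", "dog/xxy.png", "cat/123.png"]:
        path = os.path.join(root, *rel.split("/"))
        os.makedirs(os.path.dirname(path), exist_ok=True)
        open(path, "wb").close()  # empty placeholder file, not a real image
    class_to_idx, samples = scan_image_folder(root)

labels = [label for _, label in samples]
```

Because the class names are sorted, `cat` maps to index 0 and `dog` to index 1 regardless of the order in which the folders were created.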

```

# Visualization of the KO+ChIP Gold Standard from:

# Miraldi et al. (2018) "Leveraging chromatin accessibility for transcriptional regulatory network inference in Th17 Cells"

# TO START: In the menu above, choose "Cell" --> "Run All", and network + heatmap will load

# Change "canvas" to "SVG" (drop-down menu in ce... | github_jupyter | # Visualization of the KO+ChIP Gold Standard from:

# Miraldi et al. (2018) "Leveraging chromatin accessibility for transcriptional regulatory network inference in Th17 Cells"

# TO START: In the menu above, choose "Cell" --> "Run All", and network + heatmap will load

# Change "canvas" to "SVG" (drop-down menu in cell b... | 0.609757 | 0.747455 |

# Bagging

This notebook introduces a very natural strategy to build ensembles of

machine learning models named "bagging".

"Bagging" stands for Bootstrap AGGregatING. It uses bootstrap resampling

(random sampling with replacement) to learn several models on random

variations of the training set. At predict time, the p... | github_jupyter | import pandas as pd

import numpy as np

# create a random number generator that will be used to set the randomness

rng = np.random.RandomState(1)

def generate_data(n_samples=30):

"""Generate synthetic dataset. Returns `data_train`, `data_test`,

`target_train`."""

x_min, x_max = -3, 3

x = rng.uniform(x... | 0.856317 | 0.963057 |
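The bootstrap-then-aggregate idea described above can be sketched with the standard library alone. This is an illustrative sketch under stated assumptions, not the notebook's code: each "model" is deliberately trivial (it predicts the mean of its bootstrap sample), and the names `bootstrap_sample`, `bagged_mean`, and `targets` are hypothetical.

```python
import random
import statistics


def bootstrap_sample(data, rng):
    """Draw len(data) items from data uniformly at random, with replacement."""
    return rng.choices(data, k=len(data))


def bagged_mean(data, n_estimators, seed=0):
    """Average the predictions of models fit on bootstrap samples.

    Each "model" simply predicts the mean of its own bootstrap sample, so
    only the resample-then-aggregate logic is on display here.
    """
    rng = random.Random(seed)
    predictions = [
        statistics.mean(bootstrap_sample(data, rng)) for _ in range(n_estimators)
    ]
    return statistics.mean(predictions), predictions


targets = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ensemble_prediction, member_predictions = bagged_mean(targets, n_estimators=200)
```

Individual members disagree because each sees a different resample, while the averaged prediction is much more stable than any single member.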

### Dependencies for the interactive plots apart from rdkit, oechem and other qc* packages

!conda install -c conda-forge plotly -y

!conda install -c plotly jupyter-dash -y

!conda install -c plotly plotly-orca -y

```

#imports

import numpy as np

from scipy import stats

import fragmenter

from openeye import oechem... | github_jupyter | #imports

import numpy as np

from scipy import stats

import fragmenter

from openeye import oechem

TD_datasets = [

'Fragment Stability Benchmark',

# 'Fragmenter paper',

# 'OpenFF DANCE 1 eMolecules t142 v1.0',

'OpenFF Fragmenter Validation 1.0',

'OpenFF Full TorsionDrive Benchmark 1',

'OpenFF Gen 2 Torsion Set ... | 0.668015 | 0.692207 |

# Noisy Convolutional Neural Network Example

Build a noisy convolutional neural network with TensorFlow v2.

- Author: Gagandeep Singh

- Project: https://github.com/czgdp1807/noisy_weights

Experimental Details

- Datasets: The MNIST database of handwritten digits has been used for training and testing.

Observations

... | github_jupyter | from __future__ import absolute_import, division, print_function

import tensorflow as tf

from tensorflow.keras import Model, layers

import numpy as np

# MNIST dataset parameters.

num_classes = 10 # total classes (0-9 digits).

# Training parameters.

learning_rate = 0.001

training_steps = 200

batch_size = 128

display_s... | 0.939519 | 0.974893 |

This is a "Neural Network" toy example which implements the basic logical gates.

Here we don't use any method to train the NN model; we simply guess the correct weights.

It is meant to show how, in principle, a NN works.

```

import math

def sigmoid(x):

return 1./(1+ math.exp(-x))

def neuron(inputs, weights):

return sigmo... | github_jupyter | import math

def sigmoid(x):

return 1./(1+ math.exp(-x))

def neuron(inputs, weights):

return sigmoid(sum([x*y for x,y in zip(inputs,weights)]))

def almost_equal(x,y,epsilon=0.001):

return abs(x-y) < epsilon

def NN_OR(x1,x2):

weights =[-10, 20, 20]

inputs = [1, x1, x2]

return neuron(weights,input... | 0.609292 | 0.978073 |
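To make the "guess the weights" idea concrete, here is a small self-contained sketch of two gates built from a single sigmoid neuron. The OR weights are the ones used above. The AND weights `[-30, 20, 20]` and the lowercase function names are assumptions added for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def neuron(inputs, weights):
    """Weighted sum of the inputs passed through a sigmoid."""
    return sigmoid(sum(x * w for x, w in zip(inputs, weights)))


def nn_or(x1, x2):
    # Bias weight -10 and input weights 20, as in the OR gate above.
    return neuron([1, x1, x2], [-10, 20, 20])


def nn_and(x1, x2):
    # Assumed weights: the bias of -30 is only overcome when both inputs are 1.
    return neuron([1, x1, x2], [-30, 20, 20])


# Round the (nearly saturated) sigmoid outputs to read them as 0 or 1.
truth_table = [
    (a, b, round(nn_or(a, b)), round(nn_and(a, b)))
    for a in (0, 1)
    for b in (0, 1)
]
```

The constant first input of 1 plays the role of a bias term, so the first weight shifts the threshold at which the neuron switches on.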

```

# Dependencies and Setup

import pandas as pd

# File to Load (Remember to change the path if needed.)

school_data_to_load = "Resources/schools_complete.csv"

student_data_to_load = "Resources/students_complete.csv"

# Read the School Data and Student Data and store into a Pandas DataFrame

school_data_df = pd.read_cs... | github_jupyter | # Dependencies and Setup

import pandas as pd

# File to Load (Remember to change the path if needed.)

school_data_to_load = "Resources/schools_complete.csv"

student_data_to_load = "Resources/students_complete.csv"

# Read the School Data and Student Data and store into a Pandas DataFrame

school_data_df = pd.read_csv(sc... | 0.641198 | 0.861538 |

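# Exploring Overfitting and Underfitting

In the previous two examples (classifying movie reviews and predicting fuel efficiency), we saw that after training for many epochs, our model's accuracy on the validation data peaks and then starts to decline.

In other words, our model overfits the training data. Learning how to deal with overfitting is important: although it is often possible to achieve high accuracy on the training set, what we really want is to develop models that generalize well to test data (or data they have not seen before).

The opposite of overfitting is underfitting. Underfitting occurs when there is still room for improvement on the test data. This can happen for several reasons: the model is not powerful enough, it is over-regularized, or it has simply not been trained long enough. This means the network has not yet learned the relevant patterns in the training data.

If you train for too long, the model will start to overfit and learn patterns from the training data that may not apply to the test data. We need to strike a... | github_jupyter | from __future__ import absolute_import, division, print_function, unicode_literals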

try:

# %tensorflow_version only exists in Colab.

%tensorflow_version 2.x

except Exception:

pass

import tensorflow as tf

from tensorflow import keras

import numpy as np

import matplotlib.pyplot as plt

print(tf.__version__)

NUM_W... | 0.894657 | 0.938745 |

# Ex2 - Getting and Knowing your Data

Check out [Chipotle Exercises Video Tutorial](https://www.youtube.com/watch?v=lpuYZ5EUyS8&list=PLgJhDSE2ZLxaY_DigHeiIDC1cD09rXgJv&index=2) to watch a data scientist go through the exercises

This time we are going to pull data directly from the internet.

Special thanks to: https:/... | github_jupyter | import pandas as pd

import numpy as np

url = 'https://raw.githubusercontent.com/justmarkham/DAT8/master/data/chipotle.tsv'

chipo = pd.read_csv(url, sep = '\t')

chipo.head(10)

# Solution 1

chipo.shape[0] # entries <= 4622 observations

# Solution 2

chipo.info() # entries <= 4622 observations

chipo.shape[1]

c... | 0.628179 | 0.988199 |

# Simulation of Ball drop and Spring mass damper system

"Simulation of dynamic systems for dummies".

<img src="for_dummies.jpg" width="200" align="right">

This is a very simple description of how to do time simulations of a dynamic system using the SciPy ODE (Ordinary Differential Equation) solver.

```

from scipy.integra... | github_jupyter | from scipy.integrate import odeint

import numpy as np

import matplotlib.pyplot as plt

V_start = 150*10**3/3600 # [m/s] Train velocity at start

def train(states,t):

# states:

# [x]

x = states[0] # Position of train

dxdt = V_start # The position state will change by the speed of the train

... | 0.782372 | 0.981382 |
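As a hedged illustration of the same `odeint` pattern with two states, the sketch below simulates a ball dropped under gravity. The initial height, the value of `g`, the absence of drag and ground contact, and the function name `ball` are all assumptions made for this example and are not taken from the notebook.

```python
import numpy as np
from scipy.integrate import odeint

g = 9.81  # [m/s^2] gravitational acceleration (assumed value)


def ball(states, t):
    """Right-hand side of the ODE: states = [height, vertical velocity]."""
    z, v = states
    dzdt = v    # the height changes with the velocity
    dvdt = -g   # the velocity changes with gravity only (no drag assumed)
    return [dzdt, dvdt]


t = np.linspace(0.0, 2.0, 201)   # 2 s of simulated time
initial_states = [10.0, 0.0]     # released from 10 m at rest (assumed)
solution = odeint(ball, initial_states, t)

# Each row of `solution` holds [height, velocity] at the matching time in `t`.
z_final, v_final = solution[-1]
```

Since no ground is modelled, the height simply keeps decreasing. The result can be checked against the closed-form solution `z = z0 - g*t**2/2`.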

# Heart Rate Variability (HRV)

NeuroKit2 is the most comprehensive software for computing HRV indices, and the list of features is available below:

| Domains | Indices | NeuroKit | heartpy | HRV | pyHRV | |

|-------------------|:-------:|:---------------:|:-------:|:---:|:-----:|---|

| Time Domain ... | github_jupyter | # Load the NeuroKit package and other useful packages

import neurokit2 as nk

import matplotlib.pyplot as plt

%matplotlib inline

plt.rcParams['figure.figsize'] = [15, 9] # Bigger images

data = nk.data("bio_resting_5min_100hz")

data.head() # Print first 5 rows

# Find peaks

peaks, info = nk.ecg_peaks(data["ECG"], samp... | 0.673514 | 0.92054 |

```

import numpy as np

import os

import torch

import torchvision

import torchvision.transforms as transforms

### Load dataset - Preprocessing

DATA_PATH = '/tmp/data'

BATCH_SIZE = 64

def load_mnist(path, batch_size):

if not os.path.exists(path): os.mkdir(path)

trans = transforms.Compose([transforms.ToTensor()... | github_jupyter | import numpy as np

import os

import torch

import torchvision

import torchvision.transforms as transforms

### Load dataset - Preprocessing

DATA_PATH = '/tmp/data'

BATCH_SIZE = 64

def load_mnist(path, batch_size):

if not os.path.exists(path): os.mkdir(path)

trans = transforms.Compose([transforms.ToTensor(),

... | 0.807537 | 0.641029 |

# Numpy

" NumPy is the fundamental package for scientific computing with Python. It contains among other things:

* a powerful N-dimensional array object

* sophisticated (broadcasting) functions

* useful linear algebra, Fourier transform, and random number capabilities "

-- From the [NumPy](http://www.numpy.org/) l... | github_jupyter | from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

import numpy as np

np.random.random((3, 2)) # Array of shape (3, 2), entries uniform in [0, 1).

np.random.seed(0)

print(np.random.random(2))

# Reset the global random state to the same state.

np.random.seed(... | 0.719581 | 0.988165 |

```

import numpy as np

import pandas as pd

import mxnet as mx

import matplotlib.pyplot as plt

import plotly.plotly as py

import logging

logging.basicConfig(level=logging.DEBUG)

train1=pd.read_csv('../data/train.csv')

train1.shape

train1.iloc[0:4, 0:15]

train=np.asarray(train1.iloc[0:33600,:])

cv=np.asarray(train1.ilo... | github_jupyter | import numpy as np

import pandas as pd

import mxnet as mx

import matplotlib.pyplot as plt

import plotly.plotly as py

import logging

logging.basicConfig(level=logging.DEBUG)

train1=pd.read_csv('../data/train.csv')

train1.shape

train1.iloc[0:4, 0:15]

train=np.asarray(train1.iloc[0:33600,:])

cv=np.asarray(train1.iloc[33... | 0.610686 | 0.707922 |

```

# Hidden code cell for setup

# Imports and setup

import astropixie

import astropixie_widgets

import enum

import ipywidgets

import numpy

astropixie_widgets.config.setup_notebook()

from astropixie.data import pprint as show_data_in_table

from numpy import intersect1d as stars_in_both

class SortOrder(enum.Enum):

... | github_jupyter | # Hidden code cell for setup

# Imports and setup

import astropixie

import astropixie_widgets

import enum

import ipywidgets

import numpy

astropixie_widgets.config.setup_notebook()

from astropixie.data import pprint as show_data_in_table

from numpy import intersect1d as stars_in_both

class SortOrder(enum.Enum):

B... | 0.615781 | 0.910704 |

# Compare Robustness

## Set up the Environment

```

# Import everything that's needed to run the notebook

import os

import pickle

import dill

import pathlib

import datetime

import random

import time

from IPython.display import display, Markdown, Latex

import pandas as pd

import numpy as np

from sklearn.pipeline impor... | github_jupyter | # Import everything that's needed to run the notebook

import os

import pickle

import dill

import pathlib

import datetime

import random

import time

from IPython.display import display, Markdown, Latex

import pandas as pd

import numpy as np

from sklearn.pipeline import Pipeline

from sklearn.base import BaseEstimator, Tr... | 0.652906 | 0.773216 |

## Example. Probability of a girl birth given placenta previa

**Analysis using a uniform prior distribution**

```

%matplotlib inline

import arviz as az

import matplotlib.pyplot as plt

import numpy as np

import pymc as pm

from scipy.special import expit

az.style.use('arviz-darkgrid')

%config Inline.figure_formats = ... | github_jupyter | %matplotlib inline

import arviz as az

import matplotlib.pyplot as plt

import numpy as np

import pymc as pm

from scipy.special import expit

az.style.use('arviz-darkgrid')

%config Inline.figure_formats = ['retina']

%load_ext watermark

births = 987

fem_births = 437

with pm.Model() as model_1:

theta = pm.Uniform('the... | 0.709623 | 0.987436 |
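For a uniform prior, the posterior can also be written down analytically, which gives a useful cross-check on any sampled result. The sketch below assumes a binomial likelihood for the number of female births. With that assumption, a uniform prior (Beta(1, 1)) yields a Beta posterior. The variable names `alpha`, `beta`, `posterior_mean`, and `posterior_sd` are introduced here for illustration.

```python
import math

births = 987
fem_births = 437

# A uniform prior on theta is Beta(1, 1); a binomial likelihood keeps the
# posterior in the Beta family: Beta(1 + successes, 1 + failures).
alpha = 1 + fem_births
beta = 1 + (births - fem_births)

# Mean and standard deviation of a Beta(alpha, beta) distribution.
posterior_mean = alpha / (alpha + beta)
posterior_sd = math.sqrt(
    alpha * beta / ((alpha + beta) ** 2 * (alpha + beta + 1))
)
```

The posterior mean lands slightly below 0.45, and the small standard deviation reflects the fairly large number of observed births.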

# Classifiers

### Packages

```

import pandas as pd

import numpy as np

import category_encoders as ce

import matplotlib.pyplot as plt

from sklearn.ensemble import RandomForestClassifier

from sklearn.model_selection import GridSearchCV, train_test_split

from sklearn.metrics import recall_score

from sklearn.pipeline i... | github_jupyter | import pandas as pd

import numpy as np

import category_encoders as ce

import matplotlib.pyplot as plt

from sklearn.ensemble import RandomForestClassifier

from sklearn.model_selection import GridSearchCV, train_test_split

from sklearn.metrics import recall_score

from sklearn.pipeline import Pipeline

from sklearn.metric... | 0.605799 | 0.797596 |

# Statistics

:label:`sec_statistics`

Undoubtedly, to be a top deep learning practitioner, the ability to train state-of-the-art, highly accurate models is crucial. However, it is often unclear when improvements are significant, or only the result of random fluctuations in the training process. To be able to dis... | github_jupyter | import random

from mxnet import np, npx

from d2l import mxnet as d2l

npx.set_np()

# Sample datapoints and create y coordinate

epsilon = 0.1

random.seed(8675309)

xs = np.random.normal(loc=0, scale=1, size=(300,))

ys = [

np.sum(

np.exp(-(xs[:i] - xs[i])**2 /

(2 * epsilon**2)) / np.sqrt(2 * n... | 0.76533 | 0.994396 |

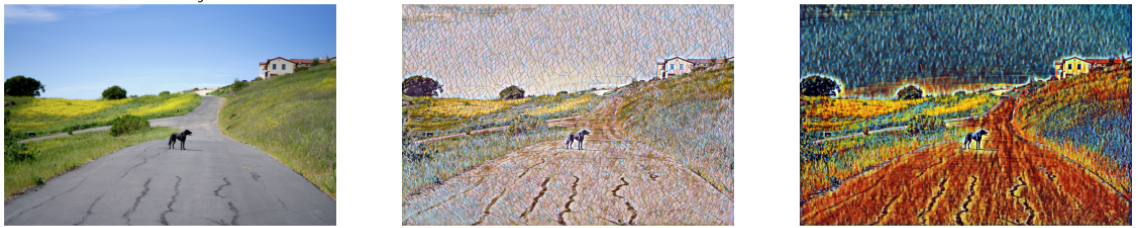

# Style Transfer on ONNX Models with OpenVINO

This notebook demonstrates [Fast Neural Style Transfer](https://github.com/onnx/models/tree/master/vision/style_transfer/fast_neu... | github_jupyter | import sys

from enum import Enum

from pathlib import Path

import cv2

import matplotlib.pyplot as plt

import numpy as np

from IPython.display import HTML, FileLink, clear_output, display

from openvino.runtime import Core, PartialShape

from yaspin import yaspin

sys.path.append("../utils")

from notebook_utils import dow... | 0.610453 | 0.951051 |

```

!wget https://download.pytorch.org/tutorial/hymenoptera_data.zip -P data/

!unzip -d data data/hymenoptera_data.zip

import torch

import torch.nn as nn

import torch.optim as optim

from torch.optim import lr_scheduler

import torchvision

from torchvision import datasets, models

from torchvision import transforms as T

... | github_jupyter | !wget https://download.pytorch.org/tutorial/hymenoptera_data.zip -P data/

!unzip -d data data/hymenoptera_data.zip

import torch

import torch.nn as nn

import torch.optim as optim

from torch.optim import lr_scheduler

import torchvision

from torchvision import datasets, models

from torchvision import transforms as T

impo... | 0.836688 | 0.817319 |

```

!pip install coremltools

# Initialise packages

from u2net import U2NETP

import coremltools as ct

from coremltools.proto import FeatureTypes_pb2 as ft

import torch

import torch.nn as nn

from torch.autograd import Variable

import os

import numpy as np

from PIL import Image

from torchvision import transforms

from ... | github_jupyter | !pip install coremltools

# Initialise packages

from u2net import U2NETP

import coremltools as ct

from coremltools.proto import FeatureTypes_pb2 as ft

import torch

import torch.nn as nn

from torch.autograd import Variable

import os

import numpy as np

from PIL import Image

from torchvision import transforms

from skim... | 0.821617 | 0.38523 |

# Basics of Deep Learning

In this notebook, we will cover the basics behind Deep Learning. I'm talking about building a brain....

Only kidding. Deep learning is a fascinating new field that has exploded over the last few... | github_jupyter | import numpy as np

# We will be using a sigmoid activation function

def sigmoid(x):

return 1/(1+np.exp(-x))

# derivation of sigmoid(x) - will be used for backpropagating errors through the network

def sigmoid_prime(x):

return sigmoid(x)*(1-sigmoid(x))

x = np.array([1,5])

y = 0.4

weights = np.array([-0.2,0.... | 0.678753 | 0.989712 |
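Since `sigmoid_prime` is what carries errors backwards through the network, it is worth confirming that it really is the derivative of `sigmoid`. The following sketch compares it against a central finite difference using only the standard library. The helper `numerical_derivative` and the chosen test points are assumptions added for illustration.

```python
import math


def sigmoid(x):
    return 1 / (1 + math.exp(-x))


def sigmoid_prime(x):
    return sigmoid(x) * (1 - sigmoid(x))


def numerical_derivative(f, x, h=1e-5):
    """Central finite-difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2 * h)


# Compare the analytic and the numerical derivative at a few points.
checks = [
    (x, sigmoid_prime(x), numerical_derivative(sigmoid, x))
    for x in (-2.0, 0.0, 0.5, 3.0)
]
max_error = max(abs(analytic - numeric) for _, analytic, numeric in checks)
```

The derivative peaks at `x = 0` and shrinks towards zero for large positive or negative inputs, which is one reason gradients can become very small in saturated sigmoid units.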

# Convolutional Neural Network Example

Build a convolutional neural network with TensorFlow.

This example is using TensorFlow layers API, see 'convolutional_network_raw' example

for a raw TensorFlow implementation with variables.

- Author: Aymeric Damien

- Project: https://github.com/aymericdamien/TensorFlow-Example... | github_jupyter | from __future__ import division, print_function, absolute_import

# Import MNIST data

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("/tmp/data/", one_hot=False)

import tensorflow as tf

import matplotlib.pyplot as plt

import numpy as np

# Training Parameters

learning_rate ... | 0.828973 | 0.987017 |

```

%load_ext autoreload

%autoreload 2

import gust # library for loading graph data

import matplotlib.pyplot as plt

import numpy as np

import seaborn as sns

import scipy.sparse as sp

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.distributions as dist

import time

import random

from sc... | github_jupyter | %load_ext autoreload

%autoreload 2

import gust # library for loading graph data

import matplotlib.pyplot as plt

import numpy as np

import seaborn as sns

import scipy.sparse as sp

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.distributions as dist

import time

import random

from scipy.... | 0.79158 | 0.604487 |

# Analyzing Portfolio Risk and Return

In this Challenge, you'll assume the role of a quantitative analyst for a FinTech investing platform. This platform aims to offer clients a one-stop online investment solution for their retirement portfolios that’s both inexpensive and high quality. (Think about [Wealthfront](http... | github_jupyter | # Import the required libraries and dependencies

import pandas as pd

from pathlib import Path

%matplotlib inline

import numpy as np

import os

#understanding where we are in the dir in order to have Path work correctly

os.getcwd()

# Import the data by reading in the CSV file and setting the DatetimeIndex

# Review ... | 0.851089 | 0.995805 |

```

# install: tqdm (progress bars)

!pip install tqdm

import torch

import torch.nn as nn

import numpy as np

from tqdm.auto import tqdm

from torch.utils.data import DataLoader, Dataset, TensorDataset

import torchvision.datasets as ds

```

## Load the data (CIFAR-10)

```

def load_cifar(datadir='./data_cache'): # will d... | github_jupyter | # install: tqdm (progress bars)

!pip install tqdm

import torch

import torch.nn as nn

import numpy as np

from tqdm.auto import tqdm

from torch.utils.data import DataLoader, Dataset, TensorDataset

import torchvision.datasets as ds

def load_cifar(datadir='./data_cache'): # will download ~400MB of data into this dir. Cha... | 0.879858 | 0.832475 |

# Introduction to Deep Learning with PyTorch

In this notebook, you will get an introduction to [PyTorch](http://pytorch.org/), which is a framework for building and training neural networks (NN). ``PyTorch`` in a lot of ways behaves like the arrays you know and love from Numpy. These Numpy arrays, after all, are just ... | github_jupyter | # First, import PyTorch

!pip install torch==1.10.1

!pip install matplotlib==3.5.0

!pip install numpy==1.21.4

!pip install omegaconf==2.1.1

!pip install optuna==2.10.0

!pip install Pillow==9.0.0

!pip install scikit_learn==1.0.2

!pip install torchvision==0.11.2

!pip install transformers==4.15.0

# First, import PyTorch

im... | 0.708616 | 0.988949 |

<h1>PCA Training with BotNet (02-03-2018)</h1>

```

import os

import tensorflow as tf

import numpy as np

import itertools

import matplotlib.pyplot as plt

import gc

from datetime import datetime

from sklearn.utils import shuffle

from sklearn.preprocessing import StandardScaler

from sklearn.preprocessing import MinMaxSca... | github_jupyter | import os

import tensorflow as tf

import numpy as np

import itertools

import matplotlib.pyplot as plt

import gc

from datetime import datetime

from sklearn.utils import shuffle

from sklearn.preprocessing import StandardScaler

from sklearn.preprocessing import MinMaxScaler

from sklearn.model_selection import train_test_s... | 0.623377 | 0.711042 |

<a href="https://colab.research.google.com/github/awikner/CHyPP/blob/master/TREND_Logistic_Regression.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

## Import libraries and sklearn and skimage modules.

```

import matplotlib.pyplot as plt

import nu... | github_jupyter | import matplotlib.pyplot as plt

import numpy as np

from sklearn.datasets import fetch_openml

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import train_test_split

from skimage.util import invert

X, y = fetch_openml('mnist_784', version=1, return_X_y=True)

plt.imshow(invert(X[0].res... | 0.611034 | 0.99066 |

<table class="ee-notebook-buttons" align="left">

<td><a target="_blank" href="https://github.com/giswqs/earthengine-py-notebooks/tree/master/Algorithms/Segmentation/segmentation_snic.ipynb"><img width=32px src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" /> View source on GitHub</a></td>

<td><a tar... | github_jupyter | # Installs geemap package

import subprocess

try:

import geemap

except ImportError:

print('geemap package not installed. Installing ...')

subprocess.check_call(["python", '-m', 'pip', 'install', 'geemap'])

# Checks whether this notebook is running on Google Colab

try:

import google.colab

import gee... | 0.665845 | 0.958654 |

# Introduction to XGBoost Spark with GPU

Taxi is an example of an XGBoost regressor. In this notebook, we will show you how to load data, train the XGBoost model, and use this model to predict the "fare_amount" of your taxi trip.

A few libraries are required:

1. NumPy

2. cudf jar

3. xgboost4j jar

4. xgboost4j-spark j... | github_jupyter | from ml.dmlc.xgboost4j.scala.spark import XGBoostRegressionModel, XGBoostRegressor

from ml.dmlc.xgboost4j.scala.spark.rapids import GpuDataReader

from pyspark.ml.evaluation import RegressionEvaluator

from pyspark.sql import SparkSession

from pyspark.sql.types import FloatType, IntegerType, StructField, StructType

from ... | 0.730001 | 0.960731 |

<!--BOOK_INFORMATION-->

<img align="left" style="padding-right:10px;" src="figures/PDSH-cover-small.png">

*This notebook contains an excerpt from the [Python Data Science Handbook](http://shop.oreilly.com/product/0636920034919.do) by Jake VanderPlas; the content is available [on GitHub](https://github.com/jakevdp/Pytho... | github_jupyter | import matplotlib.pyplot as plt

plt.style.use('classic')

%matplotlib inline

import numpy as np

import pandas as pd

# Create some data

rng = np.random.RandomState(0)

x = np.linspace(0, 10, 500)

y = np.cumsum(rng.randn(500, 6), 0)

# Plot the data with Matplotlib defaults

plt.plot(x, y)

plt.legend('ABCDEF', ncol=2, loc=... | 0.669096 | 0.988906 |

# SMARTS selection and depiction

## Depict molecular components selected by a particular SMARTS

This notebook focuses on selecting molecules containing fragments matching a particular SMARTS query, and then depicting the components (i.e. bonds, angles, torsions) matching that particular query.

```

import openeye.oech... | github_jupyter | import openeye.oechem as oechem

import openeye.oedepict as oedepict

from IPython.display import display

import os

from __future__ import print_function

def depictMatch(mol, match, width=500, height=200):

"""Take in an OpenEye molecule and a substructure match and display the results

with (optionally) specified ... | 0.676086 | 0.783119 |

# <span style="color:red">Seaborn | Part-14: FacetGrid:</span>

Welcome to another lecture on *Seaborn*! Our journey began with assigning *style* and *color* to our plots as per our requirement. Then we moved on to *visualize distribution of a dataset*, and *Linear relationships*, and further we dived into topics cover... | github_jupyter | # Importing intrinsic libraries:

import numpy as np

import pandas as pd

np.random.seed(101)

import matplotlib.pyplot as plt

import seaborn as sns

%matplotlib inline

sns.set(style="whitegrid", palette="rocket")

import warnings

warnings.filterwarnings("ignore")

# Let us also get tableau colors we defined earlier:

tablea... | 0.677581 | 0.986071 |

# MIST101 Practical 1: Introduction to Tensorflow (Basics of Tensorflow)

## What is Tensor

The central unit of data in TensorFlow is the tensor. A tensor consists of a set of primitive values shaped into an array of any number of dimensions. A tensor's rank is its number of dimensions. Here are some examples of tensor... | github_jupyter | 3 # a rank 0 tensor; this is a scalar with shape []

[1., 2., 3.] # a rank 1 tensor; this is a vector with shape [3]

[[1., 2., 3.], [4., 5., 6.]] # a rank 2 tensor; a matrix with shape [2, 3]

[[[1., 2., 3.]], [[7., 8., 9.]]] # a rank 3 tensor with shape [2, 1, 3]

import tensorflow as tf

node1 = tf.constant(3.0, dtype=... | 0.872836 | 0.996264 |
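The rank and shape annotations in the examples above can be reproduced with a few lines of plain Python, without TensorFlow. This is only a sketch: `shape_of` and `rank_of` are hypothetical helpers, and they assume regular, non-empty nested lists.

```python
def shape_of(value):
    """Return the shape of a regular, non-empty nested list as a list of ints."""
    shape = []
    while isinstance(value, list):
        shape.append(len(value))
        value = value[0]  # descend one level; assumes all siblings match
    return shape


def rank_of(value):
    """A tensor's rank is its number of dimensions."""
    return len(shape_of(value))


examples = [
    3,
    [1., 2., 3.],
    [[1., 2., 3.], [4., 5., 6.]],
    [[[1., 2., 3.]], [[7., 8., 9.]]],
]
shapes = [shape_of(e) for e in examples]
ranks = [rank_of(e) for e in examples]
```

A scalar has an empty shape and rank 0. Each additional level of nesting adds one entry to the shape and increases the rank by one.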

<!--NOTEBOOK_HEADER-->

*This notebook contains material from [cbe61622](https://jckantor.github.io/cbe61622);

content is available [on Github](https://github.com/jckantor/cbe61622.git).*

<!--NAVIGATION-->

< [4.0 Chemical Instrumentation](https://jckantor.github.io/cbe61622/04.00-Chemical_Instrumentation.html) | [Conte... | github_jupyter | <!--NOTEBOOK_HEADER-->

*This notebook contains material from [cbe61622](https://jckantor.github.io/cbe61622);

content is available [on Github](https://github.com/jckantor/cbe61622.git).*

<!--NAVIGATION-->

< [4.0 Chemical Instrumentation](https://jckantor.github.io/cbe61622/04.00-Chemical_Instrumentation.html) | [Conte... | 0.672762 | 0.703193 |

<a href="https://colab.research.google.com/github/linked0/deep-learning/blob/master/AAMY/cifar10_cnn_my.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

```

'''

#Train a simple deep CNN on the CIFAR10 small images dataset.

It gets to 75% validation ... | github_jupyter | '''

#Train a simple deep CNN on the CIFAR10 small images dataset.

It gets to 75% validation accuracy in 25 epochs, and 79% after 50 epochs.

(It's still underfitting at that point, though).

'''

from __future__ import print_function

import keras

from keras.datasets import cifar10

from keras.preprocessing.image import I... | 0.873701 | 0.925634 |

# Hello Image Segmentation

A very basic introduction to using segmentation models with OpenVINO.

We use the pre-trained [road-segmentation-adas-0001](https://docs.openvinotoolkit.org/latest/omz_models_model_road_segmentation_adas_0001.html) model from the [Open Model Zoo](https://github.com/openvinotoolkit/open_model... | github_jupyter | import cv2

import matplotlib.pyplot as plt

import numpy as np

import sys

from openvino.runtime import Core

sys.path.append("../utils")

from notebook_utils import segmentation_map_to_image

ie = Core()

model = ie.read_model(model="model/road-segmentation-adas-0001.xml")

compiled_model = ie.compile_model(model=model, d... | 0.636466 | 0.988414 |

[Table of Contents](http://nbviewer.ipython.org/github/rlabbe/Kalman-and-Bayesian-Filters-in-Python/blob/master/table_of_contents.ipynb)

# Gaussian Probabilities

```

#format the book

%matplotlib notebook

from __future__ import division, print_function

from book_format import load_style

load_style()

```

## Introducti... | github_jupyter | #format the book

%matplotlib notebook

from __future__ import division, print_function

from book_format import load_style

load_style()

import numpy as np

x = [1.85, 2.0, 1.7, 1.9, 1.6]

print(np.mean(x))

print(np.median(x))

X = [1.8, 2.0, 1.7, 1.9, 1.6]

Y = [2.2, 1.5, 2.3, 1.7, 1.3]

Z = [1.8, 1.8, 1.8, 1.8, 1.8]

prin... | 0.68056 | 0.992386 |

```

import numpy as np

import logging

import torch

import torch.nn.functional as F

import numpy as np

from tqdm import trange

from pytorch_pretrained_bert import GPT2Tokenizer, GPT2Model, GPT2LMHeadModel

# Load pre-trained model tokenizer (vocabulary)

tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

text_1 = "It wa... | github_jupyter | import numpy as np

import logging

import torch

import torch.nn.functional as F

import numpy as np

from tqdm import trange

from pytorch_pretrained_bert import GPT2Tokenizer, GPT2Model, GPT2LMHeadModel

# Load pre-trained model tokenizer (vocabulary)

tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

text_1 = "It was ne... | 0.871297 | 0.707859 |

```

from typing import Tuple, Dict, Callable, Iterator, Union, Optional, List

import os

import sys

import yaml

import numpy as np

import torch

from torch import Tensor

import gym

# To import module code.

module_path = os.path.abspath(os.path.join('..'))

if module_path not in sys.path:

sys.path.append(module_pat... | github_jupyter | from typing import Tuple, Dict, Callable, Iterator, Union, Optional, List

import os

import sys

import yaml

import numpy as np

import torch

from torch import Tensor

import gym

# To import module code.

module_path = os.path.abspath(os.path.join('..'))

if module_path not in sys.path:

sys.path.append(module_path)

f... | 0.727782 | 0.433382 |

Universidade Federal do Rio Grande do Sul (UFRGS)

Programa de Pós-Graduação em Engenharia Civil (PPGEC)

# Project PETROBRAS (2018/00147-5):

## Attenuation of dynamic loading along mooring lines embedded in clay

---

_Prof. Marcelo M. Rocha, Dr.techn._ [(ORCID)](https://orcid.org/0000-0001-5640-1020)

Porto Ale... | github_jupyter | # Importing Python modules required for this notebook

# (this cell must be executed with "shift+enter" before any other Python cell)

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# Importing "pandas dataframe" with dimension exponents for scales calculation

DimData = pd.read_excel('resources/D... | 0.626238 | 0.941007 |

<center>

<img src="https://raw.githubusercontent.com/Yorko/mlcourse.ai/master/img/ods_stickers.jpg" />

## [mlcourse.ai](https://mlcourse.ai) - Open Machine Learning Course

<center>

Author: [Yury Kashnitskiy](https://yorko.github.io). Translated by Anna Larionova and [Ousmane Cissé](https://fr.linkedin.com/in/ousmane... | github_jupyter | import numpy as np

import pandas as pd

from matplotlib import pyplot as plt

%config InlineBackend.figure_format = 'retina'

import seaborn as sns

sns.set() # just to use the seaborn theme

from sklearn.datasets import load_boston

from sklearn.linear_model import Lasso, LassoCV, Ridge, RidgeCV

from sklearn.model_sele... | 0.764716 | 0.984094 |

```

!pip install gluoncv # -i https://opentuna.cn/pypi/web/simple

%matplotlib inline

```

4. Transfer Learning with Your Own Image Dataset

=======================================================

Dataset size is a big factor in the performance of deep learning models.

``ImageNet`` has over one million labeled images, b... | github_jupyter | !pip install gluoncv # -i https://opentuna.cn/pypi/web/simple

%matplotlib inline

import mxnet as mx

import numpy as np

import os, time, shutil

from mxnet import gluon, image, init, nd

from mxnet import autograd as ag

from mxnet.gluon import nn

from mxnet.gluon.data.vision import transforms

from gluoncv.utils import m... | 0.679179 | 0.925769 |

## Diffusion Tensor Imaging (DTI)

Diffusion tensor imaging or "DTI" refers to images describing diffusion with a tensor model. DTI is derived from preprocessed diffusion weighted imaging (DWI) data. First proposed by Basser and colleagues ([Basser, 1994](https://www.ncbi.nlm.nih.gov/pubmed/8130344)), the diffusion ten... | github_jupyter | import bids

from bids.layout import BIDSLayout

from dipy.io.gradients import read_bvals_bvecs

from dipy.core.gradients import gradient_table

from nilearn import image as img

import nibabel as nib

bids.config.set_option('extension_initial_dot', True)

deriv_layout = BIDSLayout("../../../data/ds000221/derivatives", vali... | 0.68616 | 0.99153 |

```

import pandas as pd

import numpy as np

import statsmodels.api as sm

from scipy.stats import norm

# Getting the database

df_data = pd.read_excel('proshares_analysis_data.xlsx', header=0, index_col=0, sheet_name='merrill_factors')

df_data.head()

```

# Section 1 - Short answer

1.1 Mean-variance optimization goes lon... | github_jupyter | import pandas as pd

import numpy as np

import statsmodels.api as sm

from scipy.stats import norm

# Getting the database

df_data = pd.read_excel('proshares_analysis_data.xlsx', header=0, index_col=0, sheet_name='merrill_factors')

df_data.head()

# 2.1 What are the weights of the tangency portfolio, wtan?

rf_lab = 'USGG3... | 0.777638 | 0.904777 |

```

try:

import openmdao.api as om

except ImportError:

!python -m pip install openmdao[notebooks]

import openmdao.api as om

```

# BoundsEnforceLS

The BoundsEnforceLS only backtracks far enough that variables no longer violate their upper and lower bounds.
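To make the idea of a bounds-enforcing step concrete, here is a minimal pure-Python sketch. It is not OpenMDAO's implementation: the helper name `enforce_bounds_scalar` and its arguments are hypothetical, and it assumes the starting point already lies within the bounds. It shrinks the whole step by a single scalar factor, just far enough that no variable ends up outside its limits.

```python
def enforce_bounds_scalar(x, dx, lower, upper):
    """Return (x_new, alpha) with x_new = x + alpha * dx inside the bounds.

    Hypothetical helper for illustration only; assumes lower <= x <= upper.
    """
    alpha = 1.0  # start from the full step
    for xi, di, lo, hi in zip(x, dx, lower, upper):
        target = xi + di
        if target > hi and di > 0:
            # Largest fraction of the step that stops exactly at the upper bound.
            alpha = min(alpha, (hi - xi) / di)
        elif target < lo and di < 0:
            # Largest fraction of the step that stops exactly at the lower bound.
            alpha = min(alpha, (lo - xi) / di)
    x_new = [xi + alpha * di for xi, di in zip(x, dx)]
    return x_new, alpha


x_new, alpha = enforce_bounds_scalar(
    x=[1.0, 1.0], dx=[2.0, -0.5], lower=[0.0, 0.0], upper=[2.0, 2.0]
)
print(alpha, x_new)
```

In this example only the first variable would overshoot its upper bound, so the step is halved for both variables. Scaling every variable by the same factor keeps the search direction unchanged, which is one possible design choice; clipping each variable independently is another.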

Here is a simple example where BoundsEnforceLS is used to backtrack d... | github_jupyter | try:

import openmdao.api as om

except ImportError:

!python -m pip install openmdao[notebooks]

import openmdao.api as om

import numpy as np

import openmdao.api as om

from openmdao.test_suite.components.implicit_newton_linesearch import ImplCompTwoStatesArrays

top = om.Problem()

top.model.add_subsystem('co... | 0.825379 | 0.8059 |

# Classroom exercise: energy calculation

## Diffusion model in 1D

Description: A one-dimensional diffusion model. (Could be a gas of particles, or a bunch of crowded people in a corridor, or animals in a valley habitat...)

- Agents are on a 1d axis

- Agents do not want to be where there are other agents

- This is re... | github_jupyter | %matplotlib inline

import numpy as np

from matplotlib import pyplot as plt

density = np.array([0, 0, 3, 5, 8, 4, 2, 1])

fig, ax = plt.subplots()

ax.bar(np.arange(len(density)) - 0.5, density)

ax.set_xlim(-0.5, len(density) - 0.5)

ax.set_ylabel("Particle count $n_i$")

ax.set_xlabel("Position $i$")

%%bash

rm -rf diffu... | 0.724188 | 0.982305 |

<a href="https://colab.research.google.com/github/krakowiakpawel9/convnet-course/blob/master/02_mnist_cnn.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

## Training a simple neural network on the MNIST dataset

```

import keras

from keras.datasets i... | github_jupyter | import keras

from keras.datasets import mnist

from keras.models import Sequential

from keras.layers import Dense, Dropout, Flatten

from keras.layers import Conv2D, MaxPooling2D

from keras import backend as K

import warnings

warnings.filterwarnings('ignore')

# define the input image dimensions

img_rows, img_co... | 0.728941 | 0.950915 |

# Think Bayes: Chapter 7

This notebook presents code and exercises from Think Bayes, second edition.

Copyright 2016 Allen B. Downey

MIT License: https://opensource.org/licenses/MIT

```

from __future__ import print_function, division

import matplotlib.pyplot as plt

%matplotlib inline

import warnings

warnings.filter... | github_jupyter | from __future__ import print_function, division

import matplotlib.pyplot as plt

%matplotlib inline

import warnings

warnings.filterwarnings('ignore')

import math

import numpy as np

from thinkbayes2 import Pmf, Cdf, Suite, Joint

import thinkbayes2

import thinkplot

### Solution

### Solution

### Solution

### Solutio... | 0.772788 | 0.983738 |

### **Heavy Machinery Image Recognition**

We are going to build a machine learning model that can recognize heavy machinery images and tell whether each one shows a truck or an excavator.

```

from IPython.display import display

import os

import requests

from PIL import Image

from io import BytesIO

import numpy as np

import pandas as pd

... | github_jupyter | from IPython.display import display

import os

import requests

from PIL import Image

from io import BytesIO

import numpy as np

import pandas as pd

import tensorflow as tf

from tensorflow.keras.preprocessing.image import load_img, img_to_array

from keras.layers.convolutional import Conv2D, MaxPooling2D

from keras.layer... | 0.652795 | 0.90261 |

<small><i>This notebook was put together by [Jake Vanderplas](http://www.vanderplas.com). Source and license info is on [GitHub](https://github.com/jakevdp/sklearn_tutorial/).</i></small>

```

! git clone https://github.com/data-psl/lectures2021

import sys

sys.path.append('lectures2021/notebooks/02_sklearn')

%cd 'lectu... | github_jupyter | ! git clone https://github.com/data-psl/lectures2021

import sys

sys.path.append('lectures2021/notebooks/02_sklearn')

%cd 'lectures2021/notebooks/02_sklearn'

%matplotlib inline

import numpy as np

import matplotlib.pyplot as plt

from scipy import stats

plt.style.use('seaborn')

np.random.seed(2)

x = np.concatenate([np.... | 0.694199 | 0.984679 |

While taking the **Intro to Deep Learning with PyTorch** course by Udacity, I really liked the exercise on building a character-level language model using LSTMs. I was unable to complete it all on my own since NLP is still a very new field to me. I decided to give the exercise a try with `tensorflow 2.0` and b... | github_jupyter | !pip install tensorflow-gpu==2.0.0-beta1

import tensorflow as tf

from tensorflow.keras.optimizers import Adam

import numpy as np

from tensorflow.keras.preprocessing.sequence import pad_sequences

print(tf.__version__)

# Open text file and read in data as `text`

with open('anna.txt', 'r') as f:

text = f.read()

# Fi... | 0.701713 | 0.947381 |

## Example. Estimating the speed of light

Simon Newcomb's measurements of the speed of light, from

> Stigler, S. M. (1977). Do robust estimators work with real data? (with discussion). *Annals of

Statistics* **5**, 1055–1098.

The data are recorded as deviations from $24\ 800$

nanoseconds. Table 3.1 of Bayesian Dat... | github_jupyter | %matplotlib inline

import arviz as az

import matplotlib.pyplot as plt

import numpy as np

import pymc as pm

import seaborn as sns

from scipy.optimize import brentq

plt.style.use('seaborn-darkgrid')

plt.rc('font', size=12)

%config InlineBackend.figure_formats = ['retina']

numbs = "28 26 33 24 34 -44 27 16 40 -2 29 22 \

24 21... | 0.627495 | 0.955693 |

```

%matplotlib inline

```

# Net file

This is the Net file for the clique problem: state and output transition function definition

```

import tensorflow as tf

import numpy as np

def weight_variable(shape, nm):

'''function to initialize weights'''

initial = tf.truncated_normal(shape, stddev=0.1)

tf.sum... | github_jupyter | %matplotlib inline

import tensorflow as tf

import numpy as np

def weight_variable(shape, nm):

'''function to initialize weights'''

initial = tf.truncated_normal(shape, stddev=0.1)

tf.summary.histogram(nm, initial, collections=['always'])

return tf.Variable(initial, name=nm)

class Net:

'''class ... | 0.738575 | 0.843186 |

<a href="https://colab.research.google.com/github/seyrankhademi/introduction2AI/blob/main/linear_vs_mlp.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# Computer Programming vs Machine Learning

This notebook is written by Dr. Seyran Khademi to f... | github_jupyter | # The weighted-sum function takes as input the feature values for the applicant

# and outputs the final score.

import numpy as np

def weighted_sum(GPA,QP,Age,Loan):

#check that the points for GPA and QP are in range between 0 and 10

x=GPA

y=QP

points = np.array([x,y])

if (points < 0).all() and (points > ... | 0.651022 | 0.989501 |

# The Fourier Transform

*This Jupyter notebook is part of a [collection of notebooks](../index.ipynb) in the bachelors module Signals and Systems, Communications Engineering, Universität Rostock. Please direct questions and suggestions to [Sascha.Spors@uni-rostock.de](mailto:Sascha.Spors@uni-rostock.de).*

## Properti... | github_jupyter | %matplotlib inline

import sympy as sym

sym.init_printing()

def fourier_transform(x):

return sym.transforms._fourier_transform(x, t, w, 1, -1, 'Fourier')

def inverse_fourier_transform(X):

return sym.transforms._fourier_transform(X, w, t, 1/(2*sym.pi), 1, 'Inverse Fourier')

t, w = sym.symbols('t omega')

X = sy... | 0.706089 | 0.99311 |

# Pyspark & Astrophysical data: IMAGE

Let's play with images. In this example, we load image data from a FITS file (CFHTLens), and identify sources with a simple astropy algorithm. The workflow is described below. For simplicity, we only focus on one CCD in this notebook. For full scale, see the pyspark [im2cat.py](... | github_jupyter | ## Import SparkSession from Spark

from pyspark.sql import SparkSession

## Create a DataFrame from the HDU data of a FITS file

fn = "../../src/test/resources/image.fits"

hdu = 1

df = spark.read.format("fits").option("hdu", hdu).load(fn)

## By default, spark-fits distributes the rows of the image

df.printSchema()

df.show... | 0.880045 | 0.986442 |

# Data Manipulation

It is impossible to get anything done if we cannot manipulate data. Generally, there are two important things we need to do with data: (i) acquire it and (ii) process it once it is inside the computer. There is no point in acquiring data if we do not even know how to store it, so let's get our hand... | github_jupyter | import torch

x = torch.arange(12, dtype=torch.float64)

x

# We can get the tensor shape through the shape attribute.

x.shape

# .shape is an alias for .size(), and was added to more closely match numpy

x.size()

x = x.reshape((3, 4))

x

torch.FloatTensor(2, 3)

torch.Tensor(2, 3)

torch.empty(2, 3)

torch.zeros((2, 3, 4))... | 0.644113 | 0.994129 |

## GANs

Credits: \

https://pytorch.org/tutorials/beginner/dcgan_faces_tutorial.html \

https://jovian.ai/aakashns/06-mnist-gan

```

from __future__ import print_function

#%matplotlib inline

import argparse

import os

import random

import torch

import torch.nn as nn

import torch.nn.parallel

import torch.backends.cudnn as... | github_jupyter | from __future__ import print_function

#%matplotlib inline

import argparse

import os

import random

import torch

import torch.nn as nn

import torch.nn.parallel

import torch.backends.cudnn as cudnn

import torch.optim as optim

import torch.utils.data

import torchvision.datasets as dset

import torchvision.transforms as tran... | 0.838548 | 0.843638 |

<table> <tr>

<td style="background-color:#ffffff;">

<a href="http://qworld.lu.lv" target="_blank"><img src="../images/qworld.jpg" width="25%" align="left"> </a></td>

<td style="background-color:#ffffff;vertical-align:bottom;text-align:right;">

prepared by <a href="http://abu.lu.... | github_jupyter | <table> <tr>

<td style="background-color:#ffffff;">

<a href="http://qworld.lu.lv" target="_blank"><img src="../images/qworld.jpg" width="25%" align="left"> </a></td>

<td style="background-color:#ffffff;vertical-align:bottom;text-align:right;">

prepared by <a href="http://abu.lu.... | 0.712932 | 0.969985 |

```

from google.colab import drive

drive.mount('/content/drive')

import os

os.chdir('/content/drive/My Drive/Colab Notebooks/Udacity/deep-learning-v2-pytorch/convolutional-neural-networks/conv-visualization')

```

# Maxpooling Layer

In this notebook, we add and visualize the output of a maxpooling layer in a CNN.

A ... | github_jupyter | from google.colab import drive

drive.mount('/content/drive')

import os

os.chdir('/content/drive/My Drive/Colab Notebooks/Udacity/deep-learning-v2-pytorch/convolutional-neural-networks/conv-visualization')

import cv2

import matplotlib.pyplot as plt

%matplotlib inline

# TODO: Feel free to try out your own images here b... | 0.6488 | 0.862757 |

To start this Jupyter Dash app, please run all the cells below. Then, click on the **temporary** URL at the end of the last cell to open the app.

```

!pip install -q jupyter-dash==0.3.0rc1 dash-bootstrap-components transformers

import time

import dash

import dash_html_components as html

import dash_core_components as... | github_jupyter | !pip install -q jupyter-dash==0.3.0rc1 dash-bootstrap-components transformers

import time

import dash

import dash_html_components as html

import dash_core_components as dcc

import dash_bootstrap_components as dbc

from dash.dependencies import Input, Output, State

from jupyter_dash import JupyterDash

from transformers ... | 0.727395 | 0.54468 |

```

from google.colab import drive

drive.mount('/content/drive')

```

Importing all the dependencies

```

from tensorflow.keras.preprocessing.image import ImageDataGenerator

from tensorflow.keras.applications import MobileNetV2, ResNet50, VGG19

from tensorflow.keras.layers import AveragePooling2D

from tensorflow.keras.... | github_jupyter | from google.colab import drive

drive.mount('/content/drive')

from tensorflow.keras.preprocessing.image import ImageDataGenerator

from tensorflow.keras.applications import MobileNetV2, ResNet50, VGG19

from tensorflow.keras.layers import AveragePooling2D

from tensorflow.keras.layers import Dropout

from tensorflow.keras.... | 0.673621 | 0.855489 |

```

import numpy as np

import pandas as pd

import tensorflow as tf

from tensorflow.keras import layers, optimizers, models

import matplotlib.pyplot as plt

import seaborn as sns

from sklearn.preprocessing import MinMaxScaler

from pandas.plotting import register_matplotlib_converters

%matplotlib inline

register_matplot... | github_jupyter | import numpy as np

import pandas as pd

import tensorflow as tf

from tensorflow.keras import layers, optimizers, models

import matplotlib.pyplot as plt

import seaborn as sns

from sklearn.preprocessing import MinMaxScaler

from pandas.plotting import register_matplotlib_converters

%matplotlib inline

register_matplotlib_... | 0.699254 | 0.822688 |

<a href="https://colab.research.google.com/github/paulowe/ml-lambda/blob/main/colab-train1.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

## Import packages

```

import sklearn

import pandas as pd

import numpy as np

import csv as csv

from sklearn.m... | github_jupyter | import sklearn

import pandas as pd

import numpy as np

import csv as csv

from sklearn.model_selection import train_test_split

from sklearn.neural_network import MLPClassifier

from sklearn.metrics import classification_report

from sklearn import metrics

from sklearn.externals import joblib

from sklearn.preprocessing impo... | 0.615897 | 0.972753 |

# Periodic Motion: Kinematic Exploration of Pendulum

Working with observations to develop a conceptual representation of periodic motion in the context of a pendulum.

### Dependencies

This is my usual spectrum of dependencies that seem to be generally useful. We'll see if I need additional ones. When needed I will u... | github_jupyter | %matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

from numpy.random import default_rng

rng = default_rng()

conceptX = [-5., 0., 5., 0., -5., 0]

conceptY = [-.6, 0.,0.6,0.,-0.6,0.]

conceptTheta = [- 15. , 0., 15., 0., -15.,0.]

conceptTime = [0., 1.,2.,3.,4.,5.]

fig, ax = plt.subplots()

ax.scatter(c... | 0.611614 | 0.978426 |

```

%matplotlib inline

```

A Gentle Introduction to ``torch.autograd``

---------------------------------

``torch.autograd`` is PyTorch’s automatic differentiation engine that powers

neural network training. In this section, you will get a conceptual

understanding of how autograd helps a neural network train.
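As a rough mental model of what an automatic differentiation engine does, the following pure-Python sketch implements reverse-mode differentiation for scalars. It is not how `torch.autograd` is implemented; the `Value` class and its attributes are hypothetical names chosen for illustration. Each operation records its inputs and a small function that passes gradients back to them, and `backward()` replays those functions in reverse order.

```python
class Value:
    """A scalar that remembers how it was computed so gradients can flow back."""

    def __init__(self, data, parents=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents
        self._backward_fn = None  # pushes self.grad to the parents

    def __add__(self, other):
        out = Value(self.data + other.data, (self, other))

        def backward_fn():
            # d(a + b)/da = 1 and d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad

        out._backward_fn = backward_fn
        return out

    def __mul__(self, other):
        out = Value(self.data * other.data, (self, other))

        def backward_fn():
            # d(a * b)/da = b and d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward_fn = backward_fn
        return out

    def backward(self):
        """Run reverse-mode differentiation starting from this value."""
        order, seen = [], set()

        def visit(node):
            # Depth-first topological sort of the computation graph.
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            if node._backward_fn is not None:
                node._backward_fn()


w = Value(2.0)
x = Value(3.0)
loss = w * x + w  # d(loss)/dw = x + 1, d(loss)/dx = w
loss.backward()
print(w.grad, x.grad)
```

Processing nodes in reverse topological order ensures that a node's gradient is complete before it is passed on to its inputs, which matters when a value such as `w` is used more than once.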

Backgr... | github_jupyter | %matplotlib inline

import torch, torchvision

model = torchvision.models.resnet18(pretrained=True)

data = torch.rand(1, 3, 64, 64)

labels = torch.rand(1, 1000)

prediction = model(data) # forward pass

loss = (prediction - labels).sum()

loss.backward() # backward pass

optim = torch.optim.SGD(model.parameters(), lr=1e-... | 0.861101 | 0.992393 |

# Financial Planning with APIs and Simulations

In this Challenge, you’ll create two financial analysis tools by using a single Jupyter notebook:

Part 1: A financial planner for emergencies. The members will be able to use this tool to visualize their current savings. The members can then determine if they have enough... | github_jupyter | # Import the required libraries and dependencies

import os

import requests

import json

import pandas as pd

from dotenv import load_dotenv

import alpaca_trade_api as tradeapi

from MCForecastTools import MCSimulation

%matplotlib inline

# Load the environment variables from the .env file

#by calling the load_dotenv func... | 0.729327 | 0.989254 |

<a href="https://colab.research.google.com/github/chadeowen/DS-Sprint-03-Creating-Professional-Portfolios/blob/master/ChadOwen_DS_Unit_1_Sprint_Challenge_3.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# Data Science Unit 1 Sprint Challenge 3

# C... | github_jupyter | <a href="https://colab.research.google.com/github/chadeowen/DS-Sprint-03-Creating-Professional-Portfolios/blob/master/ChadOwen_DS_Unit_1_Sprint_Challenge_3.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# Data Science Unit 1 Sprint Challenge 3

# C... | 0.63273 | 0.747017 |

```

%matplotlib inline

```

Tensors

--------------------------------------------

Tensors are a specialized data structure that are very similar to arrays

and matrices. In PyTorch, we use tensors to encode the inputs and

outputs of a model, as well as the model’s parameters.

Tensors are similar to NumPy’s ndarrays, e... | github_jupyter | %matplotlib inline

import torch

import numpy as np

data = [[1, 2], [3, 4]]

x_data = torch.tensor(data)

x_data.dtype

x_data

np_array = np.array(data)

x_np = torch.from_numpy(np_array)

np_array

x_np

x_ones = torch.ones_like(x_data) # retains the properties of x_data

print(f"Ones Tensor: \n {x_ones} \n")

x_rand = tor... | 0.671255 | 0.983518 |

```

%matplotlib inline

from mpl_toolkits.basemap import Basemap

import matplotlib.pyplot as plt

fig = plt.figure(figsize=(8, 4.5))

plt.subplots_adjust(left=0.02, right=0.98, top=0.98, bottom=0.00)

m = Basemap(projection='robin',lon_0=0,resolution='c')

m.fillcontinents(color='gray',lake_color='white')

m.drawcoastlines(... | github_jupyter | %matplotlib inline

from mpl_toolkits.basemap import Basemap

import matplotlib.pyplot as plt

fig = plt.figure(figsize=(8, 4.5))

plt.subplots_adjust(left=0.02, right=0.98, top=0.98, bottom=0.00)

m = Basemap(projection='robin',lon_0=0,resolution='c')

m.fillcontinents(color='gray',lake_color='white')

m.drawcoastlines()

pl... | 0.633977 | 0.774157 |

# 250-D Multivariate Normal

Let's go for broke here.

## Setup

First, let's set up some environmental dependencies. These just make the numerics easier and adjust some of the plotting defaults to make things more legible.

```

# Python 3 compatibility

from __future__ import division, print_function

from builtins impo... | github_jupyter | # Python 3 compatibility

from __future__ import division, print_function

from builtins import range

# system functions that are always useful to have

import time, sys, os

# basic numeric setup

import numpy as np

import math

from numpy import linalg

# inline plotting

%matplotlib inline

# plotting

import matplotlib

f... | 0.685423 | 0.874023 |

# Transfer Learning

In this notebook, you'll learn how to use pre-trained networks to solve challenging problems in computer vision. Specifically, you'll use networks trained on [ImageNet](http://www.image-net.org/) [available from torchvision](http://pytorch.org/docs/0.3.0/torchvision/models.html).

ImageNet is a m... | github_jupyter | %matplotlib inline

%config InlineBackend.figure_format = 'retina'

import matplotlib.pyplot as plt

import torch

from torch import nn

from torch import optim

import torch.nn.functional as F

from torchvision import datasets, transforms, models

data_dir = 'Cat_Dog_data'

# TODO: Define transforms for the training data a... | 0.67694 | 0.989582 |

```

import gym

import math

import numpy as np

from collections import deque

import matplotlib.pyplot as plt

%matplotlib inline

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch.autograd import Variable

import time as t

from gym import envs

envids = [spec.id for spec in envs.registry.all()]... | github_jupyter | import gym

import math

import numpy as np

from collections import deque

import matplotlib.pyplot as plt

%matplotlib inline

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch.autograd import Variable

import time as t

from gym import envs

envids = [spec.id for spec in envs.registry.all()]

'''... | 0.805058 | 0.615897 |

(IN)=

# 1.7 Numerical Integration

```{admonition} Notes for the docker container:

Docker command to run this note locally:

note: replace `<ruta a mi directorio>` with the path of the directory you want to map to `/datos` inside the docker container.

`docker run --rm -v <ruta a mi directorio>:/... | github_jupyter |

---

Note generated from [link1](https://www.dropbox.com/s/jfrxanjls8kndjp/Diferenciacion_e_Integracion.pdf?dl=0) and [link2](https://www.dropbox.com/s/k3y7h9yn5d3yf3t/Integracion_por_Monte_Carlo.pdf?dl=0).

In what follows we assume that the integrand functions are in $\mathcal{C}^2$ on the set... | 0.742795 | 0.898009 |

# Use Case 1: Kögur

In this example we will subsample a dataset stored on SciServer using methods resembling field-work procedures.

Specifically, we will estimate volume fluxes through the [Kögur section](http://kogur.whoi.edu) using (i) mooring arrays, and (ii) ship surveys.

```

# Import oceanspy

import oceanspy as o... | github_jupyter | # Import oceanspy

import oceanspy as ospy

# Import additional packages used in this notebook

import numpy as np

import matplotlib.pyplot as plt

import cartopy.crs as ccrs

# Start client

from dask.distributed import Client

client = Client()

client

# Open dataset stored on SciServer.

od = ospy.open_oceandataset.from_c... | 0.639961 | 0.982691 |

# CS229: Problem Set 4

## Problem 4: Independent Component Analysis

**C. Combier**

This iPython Notebook provides solutions to Stanford's CS229 (Machine Learning, Fall 2017) graduate course problem set 4, taught by Andrew Ng.

The problem set can be found here: [./ps4.pdf](ps4.pdf)

I chose to write the solutions to... | github_jupyter |

First, let's set up the environment and write helper functions:

- ```normalize``` ensures all mixes have the same volume

- ```load_data``` loads the mix

- ```play``` plays the audio using ```sounddevice```

Next we write a numerically stable sigmoid function, to avoid overflows:
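The notebook's own implementation is not shown here, so the following is only a sketch of one common way to make the sigmoid numerically stable. It assumes scalar inputs and uses the hypothetical name `stable_sigmoid`; the idea is to only ever exponentiate a non-positive number, so `exp` cannot overflow.

```python
import math


def stable_sigmoid(x):
    """Logistic function 1 / (1 + exp(-x)) without overflow for large |x|."""
    if x >= 0:
        # exp(-x) <= 1 here, so it cannot overflow.
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, exp(x) <= 1, so use the equivalent form exp(x) / (1 + exp(x)).
    z = math.exp(x)
    return z / (1.0 + z)


for value in (-1000.0, 0.0, 1000.0):
    print(value, stable_sigmoid(value))
```

A naive `1 / (1 + math.exp(-x))` raises `OverflowError` for `x = -1000.0`, whereas the branch above avoids it. An array version would apply the same two branches element-wise.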

The following functions calculate... | 0.80147 | 0.98752 |

Lorenz equations as a model of atmospheric convection:

This is one of the classic systems in non-linear differential equations. It exhibits a range of different behaviors as the parameters (σ, β, ρ) are varied.

$$\dot{x} = \sigma (y - x)$$

$$\dot{y} = \rho x - y - xz$$

$$\dot{z} = -\beta z + xy$$
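As a minimal sketch of how these three equations can be integrated numerically, the snippet below uses a hand-written fourth-order Runge-Kutta step in pure Python. The parameter values σ = 10, β = 8/3, ρ = 28, the step size, and the function names are assumptions chosen for illustration; they are the values commonly used to show chaotic behavior, not necessarily the ones used in this notebook.

```python
def lorenz_rhs(state, sigma=10.0, beta=8.0 / 3.0, rho=28.0):
    """Right-hand side of the Lorenz system for state = (x, y, z)."""
    x, y, z = state
    return (sigma * (y - x), rho * x - y - x * z, -beta * z + x * y)


def rk4_step(f, state, dt):
    """Advance `state` by one classical fourth-order Runge-Kutta step."""
    k1 = f(state)
    k2 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = f(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def integrate(state, dt=0.01, n_steps=1000):
    """Return the list of states visited, including the initial one."""
    trajectory = [state]
    for _ in range(n_steps):
        state = rk4_step(lorenz_rhs, state, dt)
        trajectory.append(state)
    return trajectory


trajectory = integrate((1.0, 1.0, 1.0))
print(len(trajectory), trajectory[-1])
```

Plotting the `x`, `y`, `z` columns of `trajectory` in 3D gives the familiar butterfly-shaped attractor for these parameter values. A library integrator such as `scipy.integrate.solve_ivp` would normally be preferred; the explicit step is shown only to keep the sketch dependency-free.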

The Lorenz equations also arise in simplified models for lasers, d... | github_jupyter | %matplotlib inline

from ipywidgets import interact, interactive

from IPython.display import clear_output, display, HTML

import numpy as np

from scipy import integrate

from matplotlib import pyplot as plt

from mpl_toolkits.mplot3d import Axes3D

from matplotlib.colors import cnames

from matplotlib import animation

#Compu... | 0.702836 | 0.981875 |

## Neural Networks

- This was adopted from the PyTorch Tutorials.

- http://pytorch.org/tutorials/beginner/pytorch_with_examples.html

## Neural Networks

- Neural networks are the foundation of deep learning, which has revolutionized the

```In the mathematical theory of artificial neural networks, the universal app... | github_jupyter |

### Generate Fake Data

- `D_in` is the number of dimensions of an input variable.

- `D_out` is the number of dimensions of an output variable.

- Here we are learning some special "fake" data that represents the xor problem.

- Here, the dv is 1 if either the first or second variable is

### A Simple Neural Network

... | 0.802981 | 0.989899 |

# Saving and Loading Models

In this notebook, I'll show you how to save and load models with PyTorch. This is important because you'll often want to load previously trained models to use in making predictions or to continue training on new data.

```

%matplotlib inline

%config InlineBackend.figure_format = 'retina'

i... | github_jupyter | %matplotlib inline

%config InlineBackend.figure_format = 'retina'

import matplotlib.pyplot as plt

import torch

from torch import nn

from torch import optim

import torch.nn.functional as F

from torchvision import datasets, transforms

import helper

import fc_model

# Define a transform to normalize the data

transform =... | 0.799011 | 0.989791 |

```

import time

import os

import pandas as pd

import numpy as np

np.set_printoptions(precision=6, suppress=True)

from sklearn.utils import shuffle

from sklearn.metrics import mean_squared_error

import tensorflow as tf

from tensorflow.keras import *

tf.__version__

gpus = tf.config.experimental.list_physical_devices('... | github_jupyter | import time

import os

import pandas as pd

import numpy as np

np.set_printoptions(precision=6, suppress=True)

from sklearn.utils import shuffle

from sklearn.metrics import mean_squared_error

import tensorflow as tf

from tensorflow.keras import *

tf.__version__

gpus = tf.config.experimental.list_physical_devices('GPU'... | 0.825414 | 0.674855 |

<a href="https://colab.research.google.com/github/prachi-lad17/Python-Case-Studies/blob/main/Case_Study_2%3A%20Figuring_out_which_customer_may_leave.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# **Figuring out which customer may leave**

```

```... | github_jupyter | ```

# Figuring Out Which Customers May Leave - Churn Analysis

### About our Dataset

Source - https://www.kaggle.com/blastchar/telco-customer-churn

1. We have customer information for a Telecommunications company

2. We've got customer IDs, general customer info, the services they've subscribed to, type of contrac... | 0.719285 | 0.934634 |

# 04 - Full waveform inversion with Devito and Dask

## Introduction

In this tutorial we show how [Devito](http://www.devitoproject.org/devito-public) and [scipy.optimize.minimize](https://docs.scipy.org/doc/scipy/reference/generated/scipy.optimize.minimize.html) are used with [Dask](https://dask.pydata.org/en/latest/... | github_jupyter | scipy.optimize.minimize(fun, x0, args=(), method=None, jac=None, hess=None, hessp=None, bounds=None, constraints=(), tol=None, callback=None, options=None)

scipy.optimize.minimize(fun, x0, args=(), method='L-BFGS-B', jac=None, bounds=None, tol=None, callback=None, options={'disp': None, 'maxls': 20, 'iprint': -1, 'gto... | 0.857231 | 0.968081 |

# Code

**Date: February, 2017**

```

%matplotlib inline

import numpy as np

import scipy as sp

import scipy.stats as stats

import matplotlib.pyplot as plt

import seaborn as sns

import pandas as pd

# For linear regression

from scipy.stats import multivariate_normal

from scipy.integrate import dblquad

# Shut down warn... | github_jupyter | %matplotlib inline

import numpy as np

import scipy as sp

import scipy.stats as stats

import matplotlib.pyplot as plt

import seaborn as sns

import pandas as pd

# For linear regression

from scipy.stats import multivariate_normal

from scipy.integrate import dblquad

# Shut down warnings for nicer output

import warnings

... | 0.713232 | 0.832849 |

## Classes

```

from abc import ABC, abstractmethod

class Account(ABC):

def __init__(self, account_number, balance):

self._account_number = account_number

self._balance = balance

def deposit(self, value):

if value > 0:

self._balance += value

else:

... | github_jupyter | from abc import ABC, abstractmethod

class Account(ABC):

def __init__(self, account_number, balance):

self._account_number = account_number

self._balance = balance

def deposit(self, value):

if value > 0:

self._balance += value

else:

print("Invalid... | 0.6488 | 0.646446 |

```

import pandas as pd

docs = pd.read_table('SMSSpamCollection', header=None, names=['Class', 'sms'])

docs.head()

# df.column_name.value_counts() returns the number of occurrences of each unique value in that column

docs.Class.value_counts()

ham_spam=docs.Class.value_counts()

ham_spam

print("Spam % is ",(ham_spam[1]/float(ham_spam[0]+ham_spam[... | github_jupyter | import pandas as pd

docs = pd.read_table('SMSSpamCollection', header=None, names=['Class', 'sms'])

docs.head()

# df.column_name.value_counts() returns the number of occurrences of each unique value in that column

docs.Class.value_counts()

ham_spam=docs.Class.value_counts()

ham_spam

print("Spam % is ",(ham_spam[1]/float(ham_spam[0]+ham_spam[1]))... | 0.616936 | 0.544378 |

Straightforward translation of https://github.com/rmeinl/apricot-julia/blob/5f130f846f8b7f93bb4429e2b182f0765a61035c/notebooks/python_reimpl.ipynb

See also https://github.com/genkuroki/public/blob/main/0016/apricot/python_reimpl.ipynb

```

using Seaborn

using ScikitLearn: @sk_import

@sk_import datasets: fetch_covtype

... | github_jupyter | using Seaborn

using ScikitLearn: @sk_import

@sk_import datasets: fetch_covtype

using Random

using StatsBase: sample

digits_data = fetch_covtype()

X_digits = permutedims(abs.(digits_data["data"]))

summary(X_digits)

"""`calculate_gains!(X, gains, current_values, idxs, current_concave_values_sum)` mutates `gains` only"""

... | 0.66454 | 0.868882 |

**Pandas Exercises - With the NY Times Covid data**

Run the cell below to pull the data from the NY Times GitHub repository

```

!git clone https://github.com/nytimes/covid-19-data.git

```

**1. Import Pandas and Check your Version of Pandas**

```

import pandas as pd

pd.__version__

```

**2. Read the *us-counties.csv* data i... | github_jupyter | !git clone https://github.com/nytimes/covid-19-data.git

import pandas as pd

pd.__version__

covid_data = pd.read_csv('/content/covid-19-data/us-counties.csv')

covid_data.head(5)

covid_data = covid_data.drop('fips', axis=1)

covid_data.dtypes

covid_data.date = pd.to_datetime(covid_data.date)

covid_data = covid_data... | 0.65202 | 0.966505 |

## Welcome to Coding Exercise 5.

We'll only have 2 questions, and both of them will be difficult. You may import other libraries to help you here. Clue: find out more about the ```itertools``` and ```math``` libraries.

### Question 1.


### "Greatest Possible Combination"

We have a functio... | github_jupyter |

Given three lists (or arrays/vectors) containing the possible values of x1, x2, and x3, find the maximum possible output.

#### Explanation:

If x1 = 2, x2 = 5, x3 = 3, then...

The function's output is: (2^2 + 5 * 3) modulo 20 = (4 + 15) modulo 20 = 19 modulo 20 = 19.
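As a rough sketch of the brute-force idea hinted at by the `itertools` clue, the snippet below assumes the function has the form (x1^2 + x2 * x3) modulo 20. That form is inferred only from the worked example above, since the full statement is cut off here. The names `f` and `greatest_combination` are hypothetical and chosen purely for illustration.

```python
from itertools import product


def f(x1, x2, x3):
    # Assumed form, inferred from the worked example: (x1^2 + x2 * x3) mod 20
    return (x1 ** 2 + x2 * x3) % 20


def greatest_combination(xs1, xs2, xs3):
    # Try every (x1, x2, x3) triple drawn from the three candidate lists
    # and keep the largest output of f.
    return max(f(a, b, c) for a, b, c in product(xs1, xs2, xs3))


# The first worked example: x1 = 2, x2 = 5, x3 = 3
print(f(2, 5, 3))  # 19
```

Enumerating the full Cartesian product is the simplest approach and stays practical as long as the three lists are short; the number of evaluations is the product of their lengths.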

If x1 = 3, x2 = 5, x3 = 3, then...

The function's output is:... | 0.883324 | 0.989977 |

```

"""Bond Breaking"""

__authors__ = "Victor H. Chavez", "Lyudmila Slipchenko"

__credits__ = ["Victor H. Chavez", "Lyudmila Slipchenko"]

__email__ = ["gonza445@purdue.edu", "lslipchenko@purdue.edu"]

__copyright__ = "(c) 2008-2019, The Psi4Education Developers"

__license__ = "BSD-3-Clause"

__date__ = "2019-1... | github_jupyter | """Bond Breaking"""

__authors__ = "Victor H. Chavez", "Lyudmila Slipchenko"

__credits__ = ["Victor H. Chavez", "Lyudmila Slipchenko"]

__email__ = ["gonza445@purdue.edu", "lslipchenko@purdue.edu"]

__copyright__ = "(c) 2008-2019, The Psi4Education Developers"

__license__ = "BSD-3-Clause"

__date__ = "2019-11-18... | 0.79657 | 0.925095 |

# Named Entity Recognition using Transformers

**Author:** [Varun Singh](https://www.linkedin.com/in/varunsingh2/)<br>

**Date created:** Jun 23, 2021<br>

**Last modified:** Jun 24, 2021<br>

**Description:** NER using Transformers and data from the CoNLL 2003 shared task.

## Introduction

Named Entity Recognition (NER)... | github_jupyter | !pip3 install datasets

!wget https://raw.githubusercontent.com/sighsmile/conlleval/master/conlleval.py

import os

import numpy as np

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers

from datasets import load_dataset

from collections import Counter

from conlleval import evaluate

... | 0.889966 | 0.936807 |

# Machine Learning

> A Summary of lecture "Introduction to Computational Thinking and Data Science", via MITx-6.00.2x (edX)

- toc: true

- badges: true

- comments: true

- author: Chanseok Kang

- categories: [Python, edX, Machine_Learning]

- image: images/ml_block.png

- What is Machine Learning

- Many useful progr... | github_jupyter | from lecture12_segment2 import *

cobra = Animal('cobra', [1,1,1,1,0])

rattlesnake = Animal('rattlesnake', [1,1,1,1,0])

boa = Animal('boa\nconstrictor', [0,1,0,1,0])

chicken = Animal('chicken', [1,1,0,1,2])

alligator = Animal('alligator', [1,1,0,1,4])

dartFrog = Animal('dart frog', [1,0,1,0,4])

zebra = Animal('zebra', [... | 0.641759 | 0.984246 |

# Workshop 12: Introduction to Numerical ODE Solutions

*Source: Eric Ayars, PHYS 312 @ CSU Chico*

**Submit this notebook to bCourses to receive a grade for this Workshop.**

Please complete workshop activities in code cells in this iPython notebook. The activities titled **Practice** are purely for you to explore Pyt... | github_jupyter | # Run this cell before proceeding

%matplotlib inline

import numpy as np

import matplotlib.pyplot as plt

# Initial condition

t0 = 0.0

x0 = 0.75

# Make a grid of x,t values

t_values = np.linspace(t0, t0+3, 20)

x_values = np.linspace(-np.abs(x0)*1.2, np.abs(x0)*1.2, 20)

t, x = np.meshgrid(t_values, x_values)

# Evaluate ... | 0.828766 | 0.989928 |
