Published on July 10, 2024

Preference Optimization for Vision Language Models with TRL

Published on June 12, 2024

Putting RL back in RLHF

Published on September 29, 2023

Finetune Stable Diffusion Models with DDPO via TRL

Published on August 8, 2023

Fine-tune Llama 2 with DPO

Published on April 5, 2023

StackLLaMA: A hands-on guide to train LLaMA with RLHF

Published on March 9, 2023

Fine-tuning 20B LLMs with RLHF on a 24GB consumer GPU

Published on December 9, 2022

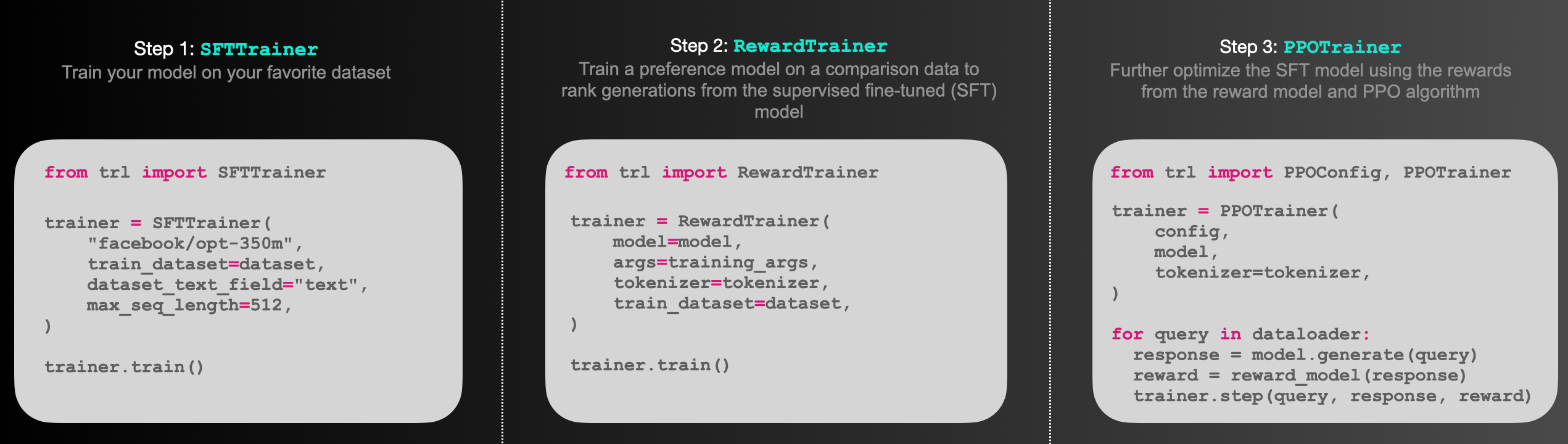

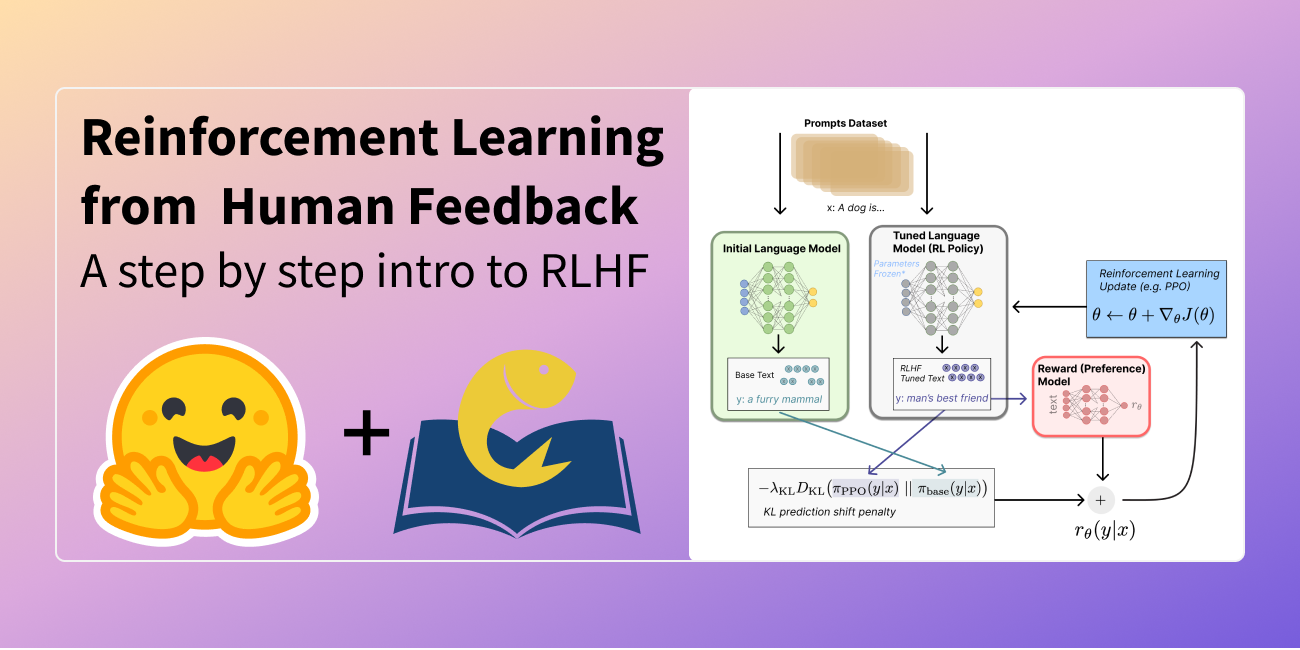

Illustrating Reinforcement Learning from Human Feedback