---

base_model:

- Qwen/Qwen3-1.7B

datasets:

- HuggingFaceFW/fineweb-edu

license: apache-2.0

model_name: Qwen3_1.7B_LoopUS_SFT

pipeline_tag: text-generation

tags:

- LoopUS

- LoopedTransformers

---

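# LoopUS: Recasting Pretrained LLMs into Looped Latent Refinement Models

<sup>1</sup> BAELAB, Pusan National University, Busan, Korea

<sup>2</sup> DOLAB, Changwon National University, Changwon, Korea

Taekhyun Park<sup>1</sup>, Yongjae Lee<sup>1</sup>, Dohee Kim<sup>2</sup>, Hyerim Bae<sup>1,†</sup>

🌟 [GitHub](https://github.com/Thrillcrazyer/LoopUS) | 🌐 Project Page | 📄 [Paper](https://arxiv.org/abs/2605.11011)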

# Overview

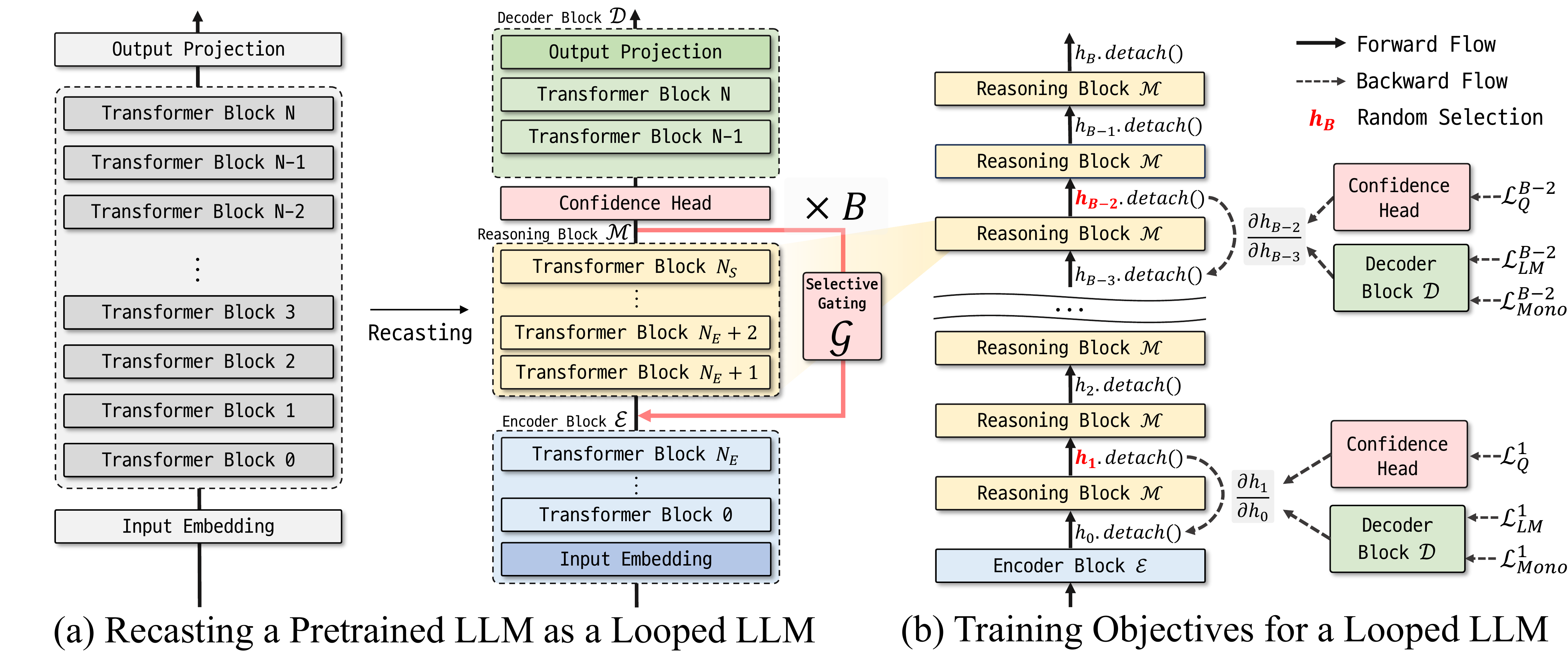

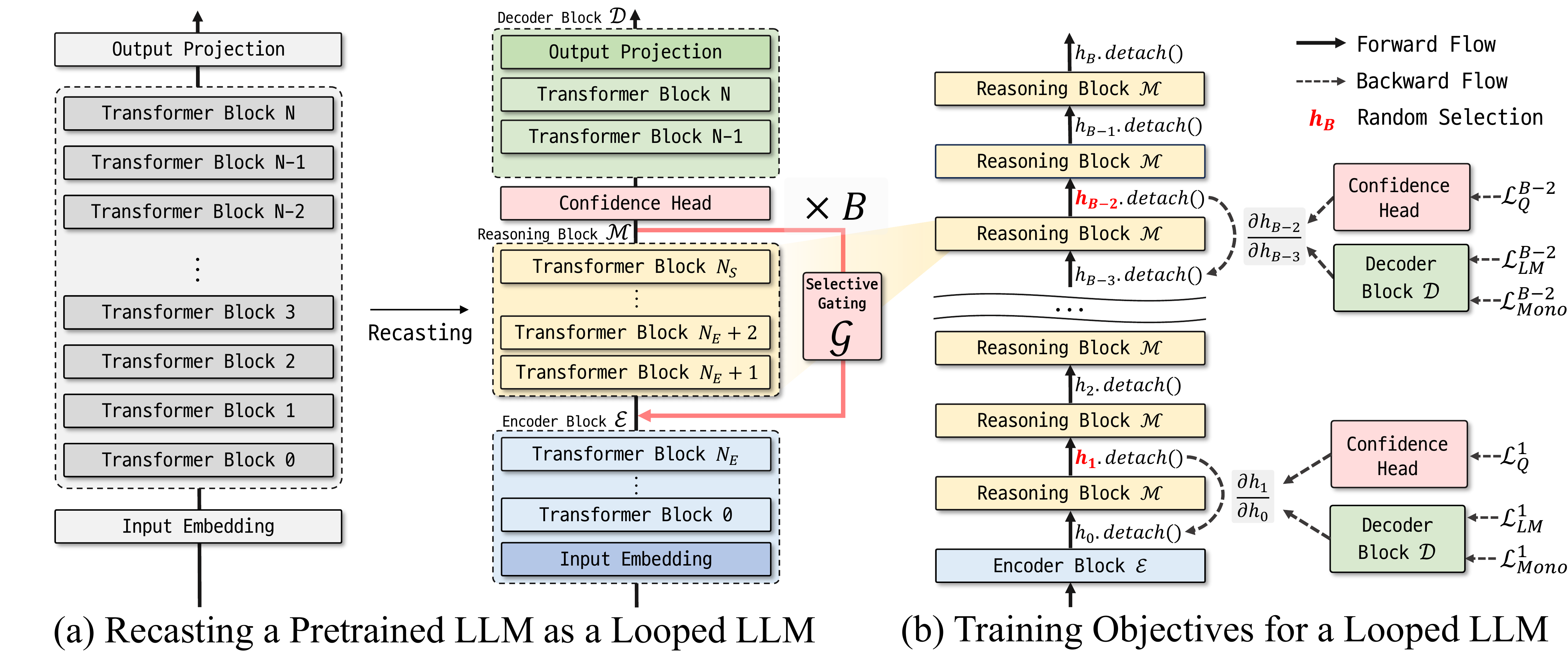

**Looped Depth Up-Scaling** (LoopUS) is a post-training framework that converts a standard pretrained LLM into a looped architecture. LoopUS recasts the pretrained LLM into an encoder, a looped reasoning block, and a decoder. It operationalizes this latent-refinement architecture through:

1. **Block Decomposition:** Recasts a pretrained transformer into a reusable latent-refinement architecture.

2. **Input-Dependent Selective Gate:** Adaptively controls hidden state propagation to mitigate drift.

3. **Random Deep Supervision:** Enables memory-efficient learning over long recursive horizons.

4. **Confidence Head:** Allows for adaptive early exiting during inference.
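The inference-time interplay of components 1, 2, and 4 can be pictured with a toy sketch in plain Python. It is an illustration under stated assumptions, not the repository's implementation: the split sizes `n_encoder` and `n_decoder`, the gate form `g = sigmoid(w_h·h + w_x·x + b)`, the exit `threshold`, and every function name here are hypothetical, and layers are stand-in functions on lists of floats rather than transformer blocks.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Layer = Callable[[Vector], Vector]


def decompose(layers: Sequence[Layer], n_encoder: int, n_decoder: int
              ) -> Tuple[List[Layer], List[Layer], List[Layer]]:
    """Split a layer stack into encoder, looped block, and decoder.

    The split sizes are hypothetical knobs for this sketch.
    """
    if n_encoder < 0 or n_decoder < 0 or n_encoder + n_decoder >= len(layers):
        raise ValueError("the looped block needs at least one layer")
    end = len(layers) - n_decoder
    return list(layers[:n_encoder]), list(layers[n_encoder:end]), list(layers[end:])


def run(layers: Sequence[Layer], h: Vector) -> Vector:
    """Apply the layers in order."""
    for layer in layers:
        h = layer(h)
    return h


def selective_gate(h_prev: Vector, h_cand: Vector, x: Vector,
                   w_h: Vector, w_x: Vector, bias: float) -> Vector:
    """Blend the previous state with the candidate state.

    Assumed form: g = sigmoid(w_h.h_prev + w_x.x + bias), so the gate depends
    on the encoded input x; the output is g * h_cand + (1 - g) * h_prev.
    """
    z = bias
    z += sum(w * v for w, v in zip(w_h, h_prev))
    z += sum(w * v for w, v in zip(w_x, x))
    g = 1.0 / (1.0 + math.exp(-z))
    return [g * c + (1.0 - g) * p for c, p in zip(h_cand, h_prev)]


def looped_forward(layers: Sequence[Layer], x: Vector,
                   n_encoder: int, n_decoder: int, n_recursion: int,
                   gate_params: Tuple[Vector, Vector, float],
                   confidence: Callable[[Vector], float],
                   threshold: float = 0.9) -> Tuple[Vector, int]:
    """Encode once, refine the latent state in a loop, then decode.

    Returns the decoded state and the number of loop iterations actually run.
    """
    encoder, loop_block, decoder = decompose(layers, n_encoder, n_decoder)
    x_enc = run(encoder, x)
    h = x_enc
    steps = 0
    for _ in range(n_recursion):
        h = selective_gate(h, run(loop_block, h), x_enc, *gate_params)
        steps += 1
        # Early exit once the (hypothetical) confidence head is satisfied.
        if confidence(h) >= threshold:
            break
    return run(decoder, h), steps
```

Because the gate interpolates between the previous and the candidate state, a small `g` keeps the hidden state close to where it was, which is one way to read "mitigate drift"; the confidence callback only decides how many of the `n_recursion` iterations are spent.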

Through stable latent looping, LoopUS improves reasoning-oriented performance without extending the generated traces or requiring recurrent training from scratch.
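Random Deep Supervision, listed above, is not specified in detail in this card. One plausible reading, sketched here purely as an assumption, is that only a small random subset of recursion steps receives a loss (and keeps gradients), so activation memory grows with the number of supervised steps rather than with the full recursion depth. The function names, the choice to always supervise the final step, and the `"loss"` / `"no_grad"` labels are all hypothetical.

```python
import random
from typing import List, Optional, Sequence


def sample_supervised_steps(n_recursion: int, n_supervised: int,
                            rng: Optional[random.Random] = None) -> List[int]:
    """Pick the recursion steps that receive a loss.

    Assumption: the final step is always supervised; the remaining
    n_supervised - 1 steps are drawn uniformly without replacement.
    """
    if not 1 <= n_supervised <= n_recursion:
        raise ValueError("need 1 <= n_supervised <= n_recursion")
    rng = rng or random.Random()
    last = n_recursion - 1
    others = rng.sample(range(last), n_supervised - 1)
    return sorted(others + [last])


def training_plan(n_recursion: int, supervised: Sequence[int]) -> List[str]:
    """Label each step: 'loss' where gradients are kept, 'no_grad' elsewhere."""
    chosen = set(supervised)
    return ["loss" if t in chosen else "no_grad" for t in range(n_recursion)]
```

In a real training loop, the `"no_grad"` steps would run without storing activations (for example under a no-gradient context in the chosen framework), while the `"loss"` steps would contribute to the objective.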

# Illustration of LoopUS

# Quick Start

To use this model, please follow the installation instructions in the [official repository](https://github.com/Thrillcrazyer/LoopUS):

```bash
git clone https://github.com/Thrillcrazyer/LoopUS.git
cd LoopUS
uv sync
```

### Chatting Mode

```bash
uv run chat.py --model-name Thrillcrazyer/Qwen3_1.7B_LoopUS_SFT
```

### Qualitative Generation

```bash
uv run LoopUS-generate \
  --model-name Qwen/Qwen3-1.7B \
  --decomposed-model Thrillcrazyer/Qwen3_1.7B_LoopUS_SFT \
  --prompt "The meaning of life is" \
  --n-recursion 8
```

# Citation

```bibtex
@misc{park2026loopus,
  title={LoopUS: Recasting Pretrained LLMs into Looped Latent Refinement Models},
  author={Taekhyun Park and Yongjae Lee and Dohee Kim and Hyerim Bae},
  year={2026},
  eprint={2605.11011},
  archivePrefix={arXiv},
  primaryClass={cs.CL},
  url={https://arxiv.org/abs/2605.11011},
}
```