Update README.md

---
language:
- en
license: cc-by-nc-sa-4.0
pipeline_tag: other
tags:
- motion-generation
- trajectory-prediction
- robotics
- computer-vision
- pytorch
- torch-hub
---

# ZipMo (Learning Long-term Motion Embeddings for Efficient Kinematics Generation)

[Project page](https://compvis.github.io/long-term-motion)
[arXiv](https://arxiv.org/)
[GitHub](https://github.com/CompVis/long-term-motion)

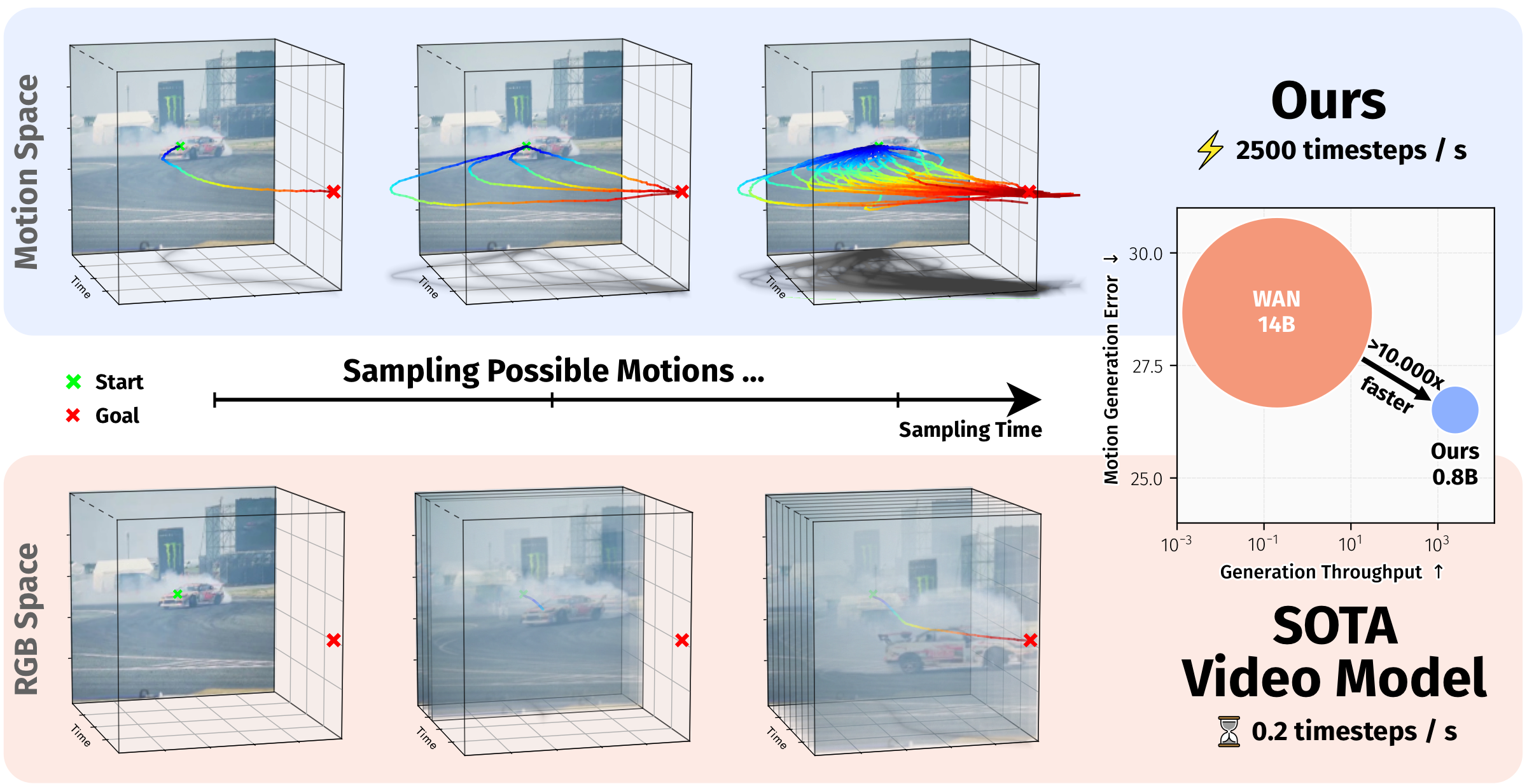

ZipMo is a motion-space model for efficient long-horizon kinematics generation. It learns compact long-term motion embeddings from large-scale tracker-derived trajectories and generates plausible future motion directly in this learned motion space. The model supports spatial-poke conditioning for open-domain videos and task/text-embedding conditioning for LIBERO robotics evaluation.

## Paper and Abstract

ZipMo was introduced in the CVPR 2026 paper **Learning Long-term Motion Embeddings for Efficient Kinematics Generation**.

Understanding and predicting motion is a fundamental component of visual intelligence. Although video models can synthesize scene dynamics, exploring many possible futures through full video generation is expensive. ZipMo instead operates directly on long-term motion embeddings learned from tracker trajectories, enabling efficient generation of long, realistic motions while preserving dense reconstruction at arbitrary spatial query points.

*ZipMo generates long-horizon motion in a compact learned motion space, supporting spatial-poke conditioning for open-domain videos and task-conditioned action prediction on LIBERO.*

## Usage

The simplest way to use ZipMo programmatically is via `torch.hub`:

```python
import torch

repo = "CompVis/long-term-motion"

# Open-domain motion prediction
planner_sparse = torch.hub.load(repo, "zipmo_planner_sparse")
planner_dense = torch.hub.load(repo, "zipmo_planner_dense")

# Motion autoencoder
vae = torch.hub.load(repo, "zipmo_vae")
```

LIBERO planning and policy components can be loaded in the same way:

```python
import torch

repo = "CompVis/long-term-motion"

# LIBERO planners
libero_atm_planner = torch.hub.load(repo, "zipmo_planner_libero", "atm")
libero_tramoe_planner = torch.hub.load(repo, "zipmo_planner_libero", "tramoe")

# LIBERO policy heads
policy_head_atm = torch.hub.load(repo, "zipmo_policy_head", "atm")
policy_head_tramoe_goal = torch.hub.load(repo, "zipmo_policy_head", "tramoe", "goal")
```

Available Torch Hub entries:

- `zipmo_planner_sparse`: sparse-poke planner for open-domain motion prediction.
- `zipmo_planner_dense`: dense-conditioning planner for open-domain motion prediction.
- `zipmo_vae`: long-term motion autoencoder.
- `zipmo_planner_libero`: LIBERO planner with mode `atm` or `tramoe`.
- `zipmo_policy_head`: LIBERO policy head with mode `atm` or `tramoe`. For `tramoe`, pass one of `10`, `goal`, `object`, or `spatial`.
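
The entry names and modes in this section can be checked before any download is attempted. The following is a minimal sketch, not part of the ZipMo repository: `hub_args`, the constant names, and the parameter name `suite` are hypothetical, and it assumes that each entry is called exactly as in the usage examples (no arguments for the open-domain entries, a mode for the LIBERO entries, and one extra string argument for the `tramoe` policy head).

```python
# Hypothetical helper: validates an entry/mode combination and returns the
# positional arguments to pass on to torch.hub.load(REPO, *args).
REPO = "CompVis/long-term-motion"

# Entries that the usage examples load without further arguments.
ENTRIES_WITHOUT_MODE = ("zipmo_planner_sparse", "zipmo_planner_dense", "zipmo_vae")
# Entries that the usage examples load with a mode argument.
LIBERO_ENTRIES = ("zipmo_planner_libero", "zipmo_policy_head")
LIBERO_MODES = ("atm", "tramoe")
# Extra argument for the tramoe policy head; treating these values as strings
# is an assumption of this sketch.
TRAMOE_SUITES = ("10", "goal", "object", "spatial")


def hub_args(entry, mode=None, suite=None):
    """Return a tuple of positional arguments for torch.hub.load(REPO, *args)."""
    if entry in ENTRIES_WITHOUT_MODE:
        if mode is not None or suite is not None:
            raise ValueError(f"{entry} takes no mode or suite in this sketch")
        return (entry,)
    if entry not in LIBERO_ENTRIES:
        raise ValueError(f"unknown entry: {entry!r}")
    if mode not in LIBERO_MODES:
        raise ValueError(f"{entry} needs a mode from {LIBERO_MODES}, got {mode!r}")
    if entry == "zipmo_policy_head" and mode == "tramoe":
        if suite not in TRAMOE_SUITES:
            raise ValueError(f"tramoe policy head needs one of {TRAMOE_SUITES}")
        return (entry, mode, suite)
    if suite is not None:
        raise ValueError(f"{entry} with mode {mode!r} takes no suite in this sketch")
    return (entry, mode)
```

With `torch` imported, a model would then be loaded as `torch.hub.load(REPO, *hub_args("zipmo_policy_head", "tramoe", "goal"))`, which expands to the same call as in the LIBERO example.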

For the interactive demo, standard track prediction evaluation, LIBERO rollout evaluation, and training instructions, see the [GitHub repository](https://github.com/CompVis/long-term-motion).

## Citation

If you find our model or code useful, please cite our paper:

```bibtex
@inproceedings{stracke2026motionembeddings,
  title = {Learning Long-term Motion Embeddings for Efficient Kinematics Generation},
  author = {Stracke, Nick and Bauer, Kolja and Baumann, Stefan Andreas and Bautista, Miguel Angel and Susskind, Josh and Ommer, Bj{\"o}rn},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year = {2026}
}
```