diff --git a/OpenOOD/.gitignore b/OpenOOD/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..216d8d0a58840c3c1dd294e6fb76c24bd776b80e

--- /dev/null

+++ b/OpenOOD/.gitignore

@@ -0,0 +1,169 @@

+# ignore some temp/test files

+_test_*

+*-backup*

+

+# ignore data and output directory

+data

+data/

+results/

+checkpoints/

+ipynb_checkpoints/

+

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+.vs/

+wheels/

+pip-wheel-metadata/

+share/python-wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/

+OpenOOD.wiki/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+.python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# PEP 582; used by e.g. github.com/David-OConnor/pyflow

+__pypackages__/

+

+# Celery stuff

+celerybeat-schedule

+celerybeat.pid

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

+

+# vscode debug

+.vscode/

+

+# macos files

+.DS_Store

+

+# check format

+.isort.cfg

+

+# no jupyter notebook

+*.ipynb_checkpoints

+*.ipynb

+

+# ignore custom config and scripts

+config/*/_*/

+scripts/_*/

+tools/mytools/

+

+# ignore pretrained bit model

+bit_pretrained_models/

+group_config/

+

+# local dev

+local/

+*legacy*
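A few of the `.gitignore` patterns above can be sanity-checked with a short Python sketch. It assumes that, for patterns without a slash, gitignore matching is close enough to shell-style globbing on the file's basename, which `fnmatch` approximates; it is not Git's own matcher, and the file names are made up for illustration. It also shows that the bare `.ipynb` entry only matches an entry literally named `.ipynb`, so notebooks are covered by the later `*.ipynb` pattern.

```python
from fnmatch import fnmatchcase

# Patterns copied from the .gitignore above; file names are hypothetical examples.
CASES = [
    ("*.py[cod]", "model.pyc"),
    ("*.py[cod]", "model.py"),
    (".ipynb", "demo.ipynb"),
    ("*.ipynb", "demo.ipynb"),
    ("_test_*", "_test_loader.py"),
    ("*legacy*", "train_legacy_v1.py"),
]


def matches(pattern: str, basename: str) -> bool:
    """Approximate a slash-free gitignore pattern with a case-sensitive glob on the basename."""
    return fnmatchcase(basename, pattern)


for pattern, name in CASES:
    print(f"{pattern!r:12} {name!r:22} -> {matches(pattern, name)}")
```

For an authoritative answer on a real checkout, `git check-ignore -v <path>` reports which pattern, if any, ignores a given path.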

diff --git a/OpenOOD/.pre-commit-config.yaml b/OpenOOD/.pre-commit-config.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..e7ed56abe4363b10cc4a3e64fc20b877a6472940

--- /dev/null

+++ b/OpenOOD/.pre-commit-config.yaml

@@ -0,0 +1,32 @@

+exclude: ^tests/data/

+repos:

+  - repo: https://github.com/PyCQA/flake8.git

+    rev: 3.8.3

+    hooks:

+      - id: flake8

+  - repo: https://github.com/pre-commit/mirrors-yapf

+    rev: v0.30.0

+    hooks:

+      - id: yapf

+  - repo: https://github.com/pre-commit/pre-commit-hooks

+    rev: v3.1.0

+    hooks:

+      - id: trailing-whitespace

+      - id: check-yaml

+      - id: end-of-file-fixer

+      - id: double-quote-string-fixer

+      - id: check-merge-conflict

+      - id: fix-encoding-pragma

+        args: ["--remove"]

+      - id: mixed-line-ending

+        args: ["--fix=lf"]

+  - repo: https://github.com/codespell-project/codespell

+    rev: v2.1.0

+    hooks:

+      - id: codespell

+        args: ["--ignore-words=codespell_ignored.txt"]

+  - repo: https://github.com/myint/docformatter

+    rev: v1.3.1

+    hooks:

+      - id: docformatter

+        args: ["--in-place", "--wrap-descriptions", "79"]
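To make the whitespace-related hooks in this config more concrete, here is a rough Python sketch of their combined effect on one file's text. It only approximates what `mixed-line-ending` (with `--fix=lf`), `trailing-whitespace`, and `end-of-file-fixer` do; the function name `normalize_text` is hypothetical and this is not the hooks' actual implementation.

```python
def normalize_text(text: str) -> str:
    """Approximate the combined effect of three whitespace hooks on one file's text."""
    # mixed-line-ending --fix=lf: convert CRLF and lone CR line endings to LF.
    text = text.replace("\r\n", "\n").replace("\r", "\n")
    # trailing-whitespace: drop spaces and tabs at the end of every line.
    lines = [line.rstrip(" \t") for line in text.split("\n")]
    # end-of-file-fixer: a non-empty file ends with exactly one newline.
    body = "\n".join(lines).rstrip("\n")
    return body + "\n" if body else ""


print(repr(normalize_text("a  \r\nb\t\r\n\r\n\r\n")))
```

In practice, `pre-commit run --all-files` applies the real hooks to the whole repository.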

diff --git a/OpenOOD/CODE_OF_CONDUCT.md b/OpenOOD/CODE_OF_CONDUCT.md

new file mode 100644

index 0000000000000000000000000000000000000000..6784a8d16675b5010cdf149ae632c3ee6b2e791b

--- /dev/null

+++ b/OpenOOD/CODE_OF_CONDUCT.md

@@ -0,0 +1,128 @@

+# Contributor Covenant Code of Conduct

+

+## Our Pledge

+

+We as members, contributors, and leaders pledge to make participation in our

+community a harassment-free experience for everyone, regardless of age, body

+size, visible or invisible disability, ethnicity, sex characteristics, gender

+identity and expression, level of experience, education, socio-economic status,

+nationality, personal appearance, race, religion, or sexual identity

+and orientation.

+

+We pledge to act and interact in ways that contribute to an open, welcoming,

+diverse, inclusive, and healthy community.

+

+## Our Standards

+

+Examples of behavior that contributes to a positive environment for our

+community include:

+

+* Demonstrating empathy and kindness toward other people

+* Being respectful of differing opinions, viewpoints, and experiences

+* Giving and gracefully accepting constructive feedback

+* Accepting responsibility and apologizing to those affected by our mistakes,

+ and learning from the experience

+* Focusing on what is best not just for us as individuals, but for the

+ overall community

+

+Examples of unacceptable behavior include:

+

+* The use of sexualized language or imagery, and sexual attention or

+ advances of any kind

+* Trolling, insulting or derogatory comments, and personal or political attacks

+* Public or private harassment

+* Publishing others' private information, such as a physical or email

+ address, without their explicit permission

+* Other conduct which could reasonably be considered inappropriate in a

+ professional setting

+

+## Enforcement Responsibilities

+

+Community leaders are responsible for clarifying and enforcing our standards of

+acceptable behavior and will take appropriate and fair corrective action in

+response to any behavior that they deem inappropriate, threatening, offensive,

+or harmful.

+

+Community leaders have the right and responsibility to remove, edit, or reject

+comments, commits, code, wiki edits, issues, and other contributions that are

+not aligned to this Code of Conduct, and will communicate reasons for moderation

+decisions when appropriate.

+

+## Scope

+

+This Code of Conduct applies within all community spaces, and also applies when

+an individual is officially representing the community in public spaces.

+Examples of representing our community include using an official e-mail address,

+posting via an official social media account, or acting as an appointed

+representative at an online or offline event.

+

+## Enforcement

+

+Instances of abusive, harassing, or otherwise unacceptable behavior may be

+reported to the community leaders responsible for enforcement at

+yangjingkang001@gmail.com.

+All complaints will be reviewed and investigated promptly and fairly.

+

+All community leaders are obligated to respect the privacy and security of the

+reporter of any incident.

+

+## Enforcement Guidelines

+

+Community leaders will follow these Community Impact Guidelines in determining

+the consequences for any action they deem in violation of this Code of Conduct:

+

+### 1. Correction

+

+**Community Impact**: Use of inappropriate language or other behavior deemed

+unprofessional or unwelcome in the community.

+

+**Consequence**: A private, written warning from community leaders, providing

+clarity around the nature of the violation and an explanation of why the

+behavior was inappropriate. A public apology may be requested.

+

+### 2. Warning

+

+**Community Impact**: A violation through a single incident or series

+of actions.

+

+**Consequence**: A warning with consequences for continued behavior. No

+interaction with the people involved, including unsolicited interaction with

+those enforcing the Code of Conduct, for a specified period of time. This

+includes avoiding interactions in community spaces as well as external channels

+like social media. Violating these terms may lead to a temporary or

+permanent ban.

+

+### 3. Temporary Ban

+

+**Community Impact**: A serious violation of community standards, including

+sustained inappropriate behavior.

+

+**Consequence**: A temporary ban from any sort of interaction or public

+communication with the community for a specified period of time. No public or

+private interaction with the people involved, including unsolicited interaction

+with those enforcing the Code of Conduct, is allowed during this period.

+Violating these terms may lead to a permanent ban.

+

+### 4. Permanent Ban

+

+**Community Impact**: Demonstrating a pattern of violation of community

+standards, including sustained inappropriate behavior, harassment of an

+individual, or aggression toward or disparagement of classes of individuals.

+

+**Consequence**: A permanent ban from any sort of public interaction within

+the community.

+

+## Attribution

+

+This Code of Conduct is adapted from the [Contributor Covenant][homepage],

+version 2.0, available at

+https://www.contributor-covenant.org/version/2/0/code_of_conduct.html.

+

+Community Impact Guidelines were inspired by [Mozilla's code of conduct

+enforcement ladder](https://github.com/mozilla/diversity).

+

+[homepage]: https://www.contributor-covenant.org

+

+For answers to common questions about this code of conduct, see the FAQ at

+https://www.contributor-covenant.org/faq. Translations are available at

+https://www.contributor-covenant.org/translations.

diff --git a/OpenOOD/CONTRIBUTING.md b/OpenOOD/CONTRIBUTING.md

new file mode 100644

index 0000000000000000000000000000000000000000..1568a09285409b22052e428741e315520b8f91bc

--- /dev/null

+++ b/OpenOOD/CONTRIBUTING.md

@@ -0,0 +1,68 @@

+## Contributing to OpenOOD

+

+All kinds of contributions are welcome, including but not limited to the following.

+

+- Integrate more methods under generalized OOD detection

+- Fix typos or bugs

+- Add new features and components

+

+### Workflow

+

+1. Fork and pull the latest OpenOOD repository.

+2. Check out a new branch (do not use the master branch for PRs).

+3. Commit your changes.

+4. Create a PR.

+

+```{note}

+If you plan to add new features that involve large changes, we encourage you to open an issue for discussion first.

+```

+### Code style

+

+#### Python

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style.

+

+We use the following tools for linting and formatting:

+

+- [flake8](http://flake8.pycqa.org/en/latest/): A wrapper around some linter tools.

+- [yapf](https://github.com/google/yapf): A formatter for Python files.

+- [isort](https://github.com/timothycrosley/isort): A Python utility to sort imports.

+- [markdownlint](https://github.com/markdownlint/markdownlint): A linter to check markdown files and flag style issues.

+- [docformatter](https://github.com/myint/docformatter): A formatter for docstrings.

+

+Style configurations of yapf and isort can be found in [setup.cfg](./setup.cfg).

+

+We use a [pre-commit hook](https://pre-commit.com/) that runs automatically on every commit. It checks and formats code with `flake8`, `yapf`, and `isort`, checks `trailing whitespaces` and `markdown files`,

+fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, and `mixed-line-ending`, and sorts `requirements.txt`.

+The configuration for the pre-commit hook is stored in [.pre-commit-config](./.pre-commit-config.yaml).

+

+After you clone the repository, you will need to install and initialize the pre-commit hook.

+

+```shell

+pip install -U pre-commit

+```

+

+Then, from the repository folder, run:

+

+```shell

+pre-commit install

+```

+

+## Contributing to OpenOOD leaderboard

+

+We welcome new entries submitted to the leaderboard. Please follow the instructions below to submit your results.

+

+1. Evaluate your model/method with OpenOOD's benchmark and evaluator such that the comparison is fair.

+

+2. Report your new results by opening an issue. Remember to specify the following information:

+

+- **`Training`**: The training method of your model, e.g., `CrossEntropy`.

+- **`Postprocessor`**: The postprocessor of your model, e.g., `MSP`, `ReAct`, etc.

+- **`Near-OOD AUROC`**: The AUROC score of your model on the near-OOD split.

+- **`Far-OOD AUROC`**: The AUROC score of your model on the far-OOD split.

+- **`ID Accuracy`**: The accuracy of your model on the ID test data.

+- **`Outlier Data`**: Whether your model uses the outlier data for training.

+- **`Model Arch.`**: The architecture of your base classifier, e.g., `ResNet18`.

+- **`Additional Description`**: Any additional description of your model, e.g., `100 epochs`, `torchvision pretrained`, etc.

+

+3. Ideally, send us a copy of your model checkpoint so that we can verify your results on our end. You can either upload the checkpoint to a cloud storage and share the link in the issue, or send us an email at [jz288@duke.edu](mailto:jz288@duke.edu).
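For readers unfamiliar with the `Near-OOD AUROC` and `Far-OOD AUROC` fields requested above, the following is a minimal pure-Python sketch of how an AUROC can be computed from per-sample confidence scores. It assumes that a higher score means "more in-distribution" and uses made-up scores; it is not OpenOOD's evaluator, which should still be used for actual leaderboard submissions.

```python
from typing import Sequence


def auroc(id_scores: Sequence[float], ood_scores: Sequence[float]) -> float:
    """Probability that a random ID sample scores higher than a random OOD sample.

    Ties count as half a win, which matches the usual rank-based AUROC definition.
    """
    if not id_scores or not ood_scores:
        raise ValueError("need at least one ID score and one OOD score")
    wins = 0.0
    for s_id in id_scores:
        for s_ood in ood_scores:
            if s_id > s_ood:
                wins += 1.0
            elif s_id == s_ood:
                wins += 0.5
    return wins / (len(id_scores) * len(ood_scores))


# Hypothetical confidence scores (e.g. max softmax probability); not real results.
id_conf = [0.95, 0.90, 0.80, 0.60]
near_ood_conf = [0.85, 0.70, 0.55]
far_ood_conf = [0.40, 0.30, 0.20]

print(f"Near-OOD AUROC: {auroc(id_conf, near_ood_conf):.3f}")
print(f"Far-OOD AUROC:  {auroc(id_conf, far_ood_conf):.3f}")
```

The pairwise loop is quadratic in the number of samples, which is fine for illustration; a sorting-based implementation would be the natural choice for full benchmark sizes.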

diff --git a/OpenOOD/LICENSE b/OpenOOD/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..606b40619e6b44313bb1c40d4e6c9c9f96623bd8

--- /dev/null

+++ b/OpenOOD/LICENSE

@@ -0,0 +1,21 @@

+MIT License

+

+Copyright (c) 2021 Jingkang Yang

+

+Permission is hereby granted, free of charge, to any person obtaining a copy

+of this software and associated documentation files (the "Software"), to deal

+in the Software without restriction, including without limitation the rights

+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

+copies of the Software, and to permit persons to whom the Software is

+furnished to do so, subject to the following conditions:

+

+The above copyright notice and this permission notice shall be included in all

+copies or substantial portions of the Software.

+

+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

+SOFTWARE.

diff --git a/OpenOOD/README.md b/OpenOOD/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..8751acf24f928a737cd5c829b85dd0ab33469fd8

--- /dev/null

+++ b/OpenOOD/README.md

@@ -0,0 +1,367 @@

+# OpenOOD: Benchmarking Generalized OOD Detection

+

+

+

+

+| :exclamation: When using OpenOOD in your research, it is vital to cite both the OpenOOD benchmark (versions 1 and 1.5) and the individual works that have contributed to your research. Accurate citation acknowledges the efforts and contributions of all researchers involved. For example, if your work involves the NINCO benchmark within OpenOOD, please cite NINCO in addition to OpenOOD.|

+|-----------------------------------------|

+

+

+[OpenOOD paper (OpenReview)](https://openreview.net/pdf?id=gT6j4_tskUt)

+

+[OpenOOD v1.5 report (arXiv)](https://arxiv.org/abs/2306.09301)

+

+[Leaderboard](https://zjysteven.github.io/OpenOOD/)

+

+[Colab tutorial](https://colab.research.google.com/drive/1tvTpCM1_ju82Yygu40fy7Lc0L1YrlkQF?usp=sharing)

+

+[Slack workspace](https://openood.slack.com/)

+

+

+ +

+

+This repository reproduces representative methods within the [`Generalized Out-of-Distribution Detection Framework`](https://arxiv.org/abs/2110.11334),

+aiming to make a fair comparison across methods that were initially developed for anomaly detection, novelty detection, open set recognition, and out-of-distribution detection.

+This codebase is still under construction.

+Comments, issues, contributions, and collaborations are all welcome!

+

+|  |

+|:--:|

+| Timeline of the methods that OpenOOD supports. More methods are included as OpenOOD iterates.|

+

+

+## Updates

+- **27 Oct, 2023**: A short version of OpenOOD `v1.5` is accepted to [NeurIPS 2023 Workshop on Distribution Shifts](https://sites.google.com/view/distshift2023/home?authuser=0) as an oral presentation. You may want to check out our [presentation slides](https://drive.google.com/file/d/1qlLQxWpYqFMwjgAHayV_ly2MSGbQ8b18/view?usp=drive_link) and [video recording](https://youtu.be/l58qYmY9NVw).

+- **25 Sept, 2023**: OpenOOD now supports OOD detection with foundation models including zero-shot CLIP and DINOv2 linear probe. Check out the example evaluation script [here](https://github.com/Jingkang50/OpenOOD/blob/main/scripts/eval_ood_imagenet_foundation_models.py).

+- **16 June, 2023**: :boom::boom: We are releasing OpenOOD `v1.5`, which includes the following exciting updates. A detailed changelog is provided in the [Wiki](https://github.com/Jingkang50/OpenOOD/wiki/OpenOOD-v1.5-change-log). An overview of the supported methods and benchmarks (with paper links) is available [here](https://github.com/Jingkang50/OpenOOD/wiki/OpenOOD-v1.5-methods-&-benchmarks-overview).

+ - A new [report](https://arxiv.org/abs/2306.09301) which provides benchmarking results on ImageNet and for full-spectrum detection.

+ - A unified, easy-to-use evaluator that allows evaluation by simply creating an evaluator instance and calling its functions. Check out this [colab tutorial](https://colab.research.google.com/drive/1tvTpCM1_ju82Yygu40fy7Lc0L1YrlkQF?usp=sharing)!

+ - A live [leaderboard](https://zjysteven.github.io/OpenOOD/) that tracks the state-of-the-art of this field.

+- **14 October, 2022**: OpenOOD `v1.0` is accepted to NeurIPS 2022. Check the report [here](https://arxiv.org/abs/2210.07242).

+- **14 June, 2022**: We release `v0.5`.

+- **12 April, 2022**: Primary release to support [Full-Spectrum OOD Detection](https://arxiv.org/abs/2204.05306).

+

+## FAQ

+- `APS_mode` stands for Automatic (hyper)Parameter Searching mode. When enabled, every hyperparameter setting in the sweep list is validated on the validation ID/OOD set. The default value is False. See [this config](https://github.com/Jingkang50/OpenOOD/blob/main/configs/postprocessors/dice.yml) for an example.

+

+

+## Get Started

+

+### v1.5 (up-to-date)

+#### Installation

+OpenOOD now supports installation via pip.

+```

+pip install git+https://github.com/Jingkang50/OpenOOD

+# optional, if you want to use CLIP

+# pip install git+https://github.com/openai/CLIP.git

+```

+

+#### Data

+If you only use our evaluator, the benchmarks for evaluation will be automatically downloaded by the evaluator (again check out this [tutorial](https://colab.research.google.com/drive/1tvTpCM1_ju82Yygu40fy7Lc0L1YrlkQF?usp=sharing)). If you would like to also use OpenOOD for training, you can get all data with our [downloading script](https://github.com/Jingkang50/OpenOOD/tree/main/scripts/download). Note that ImageNet-1K training images should be downloaded from its official website.

+

+#### Pre-trained checkpoints

+OpenOOD v1.5 focuses on 4 ID datasets, and we release pre-trained models accordingly.

+- CIFAR-10 [[Google Drive]](https://drive.google.com/file/d/1byGeYxM_PlLjT72wZsMQvP6popJeWBgt/view?usp=drive_link): ResNet-18 classifiers trained with cross-entropy loss from 3 training runs.

+- CIFAR-100 [[Google Drive]](https://drive.google.com/file/d/1s-1oNrRtmA0pGefxXJOUVRYpaoAML0C-/view?usp=drive_link): ResNet-18 classifiers trained with cross-entropy loss from 3 training runs.

+- ImageNet-200 [[Google Drive]](https://drive.google.com/file/d/1ddVmwc8zmzSjdLUO84EuV4Gz1c7vhIAs/view?usp=drive_link): ResNet-18 classifiers trained with cross-entropy loss from 3 training runs.

+- ImageNet-1K [[Google Drive]](https://drive.google.com/file/d/15PdDMNRfnJ7f2oxW6lI-Ge4QJJH3Z0Fy/view?usp=drive_link): ResNet-50 classifiers including 1) the one from torchvision, 2) the ones that are trained by us with specific methods such as MOS, CIDER, and 3) the official checkpoints of data augmentation methods such as AugMix, PixMix.

+

+Again, these checkpoints can be downloaded with the downloading script [here](https://github.com/Jingkang50/OpenOOD/tree/main/scripts/download).

+

+

+Our codebase accesses the datasets from `./data/` and pretrained models from `./results/checkpoints/` by default.

+```

+├── ...

+├── data

+│ ├── benchmark_imglist

+│ ├── images_classic

+│ └── images_largescale

+├── openood

+├── results

+│ ├── checkpoints

+│ └── ...

+├── scripts

+├── main.py

+├── ...

+```

+

+#### Training and evaluation scripts

+We provide training and evaluation scripts for all the methods we support in the [scripts folder](https://github.com/Jingkang50/OpenOOD/tree/main/scripts).

+

+---

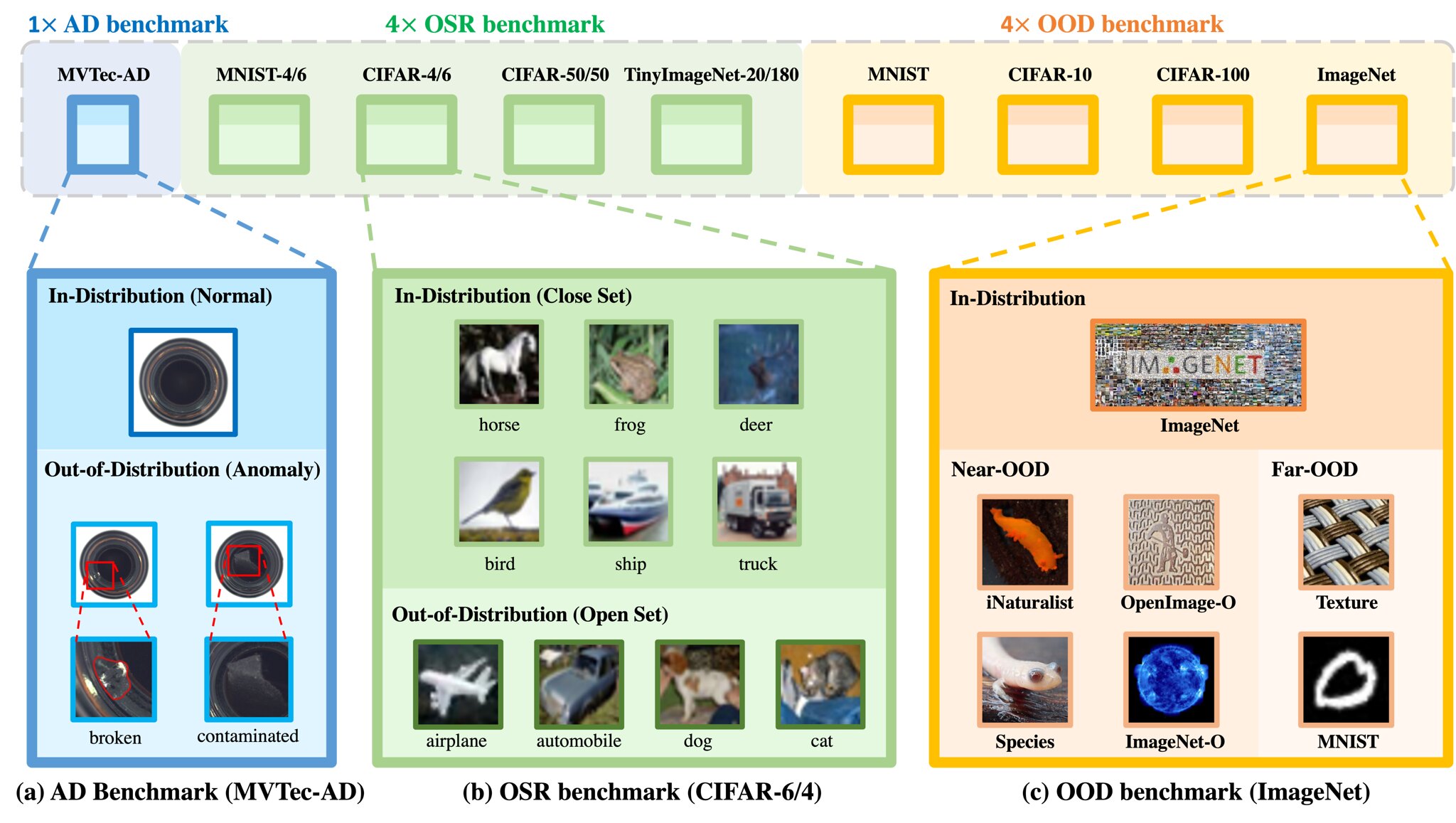

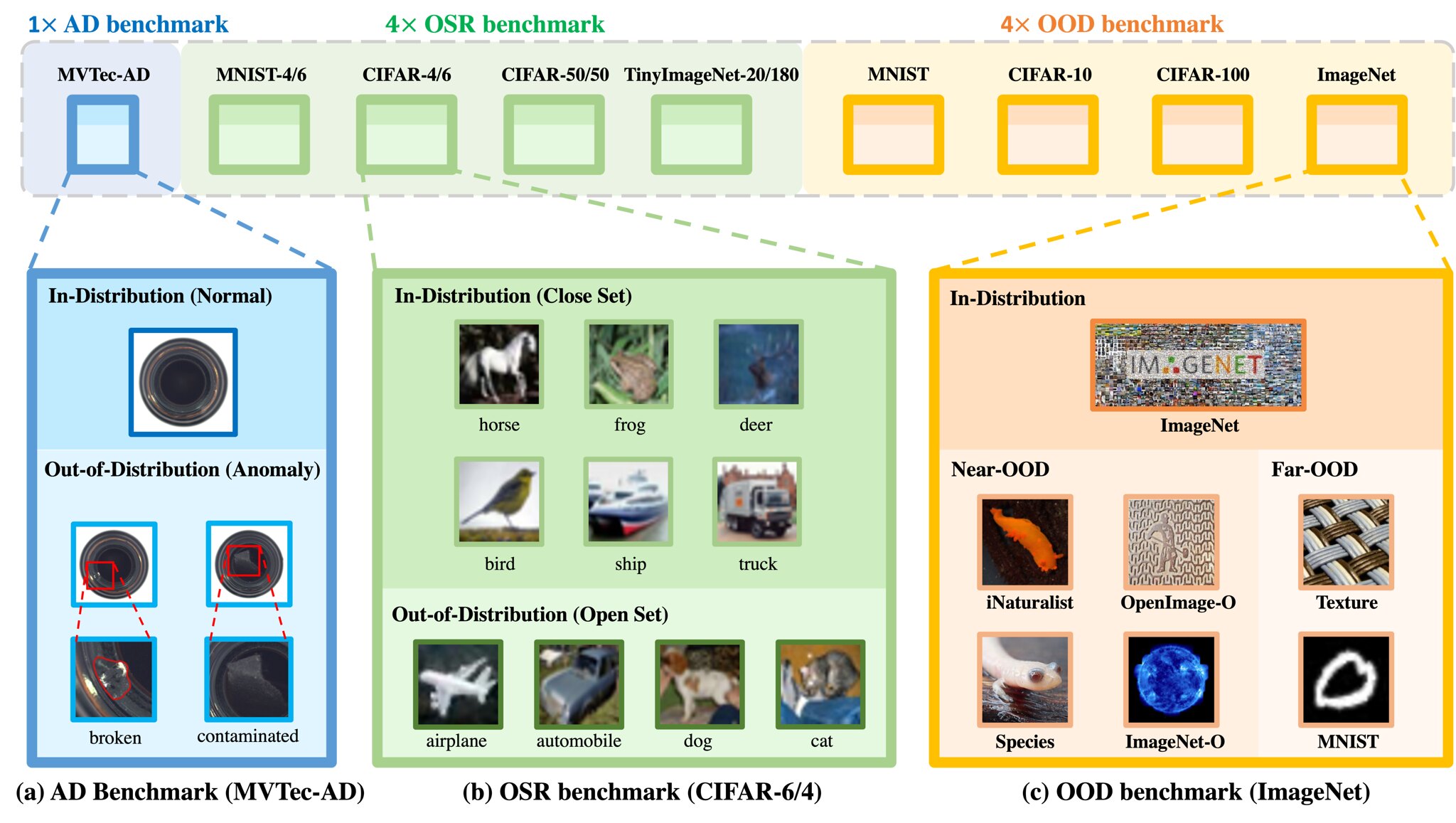

+## Supported Benchmarks (11)

+This part lists all the benchmarks we support. Feel free to include more.

+

+

+

+


+

+ +

+

+

+

+Anomaly Detection (1)

+

+> - [x] [MVTec-AD](https://www.mvtec.com/company/research/datasets/mvtec-ad)

+

+

+

+Open Set Recognition (4)

+

+> - [x] [MNIST-4/6]()

+> - [x] [CIFAR-4/6]()

+> - [x] [CIFAR-40/60]()

+> - [x] [TinyImageNet-20/180]()

+

+

+

+Out-of-Distribution Detection (6)

+

+> - [x] [BIMCV (A COVID X-Ray Dataset)]()

+> > Near-OOD: `CT-SCAN`, `X-Ray-Bone`;

+> > Far-OOD: `MNIST`, `CIFAR-10`, `Texture`, `Tiny-ImageNet`;

+> - [x] [MNIST]()

+> > Near-OOD: `NotMNIST`, `FashionMNIST`;

+> > Far-OOD: `Texture`, `CIFAR-10`, `TinyImageNet`, `Places365`;

+> - [x] [CIFAR-10]()

+> > Near-OOD: `CIFAR-100`, `TinyImageNet`;

+> > Far-OOD: `MNIST`, `SVHN`, `Texture`, `Places365`;

+> - [x] [CIFAR-100]()

+> > Near-OOD: `CIFAR-10`, `TinyImageNet`;

+> > Far-OOD: `MNIST`, `SVHN`, `Texture`, `Places365`;

+> - [x] [ImageNet-200]()

+> > Near-OOD: `SSB-hard`, `NINCO`;

+> > Far-OOD: `iNaturalist`, `Texture`, `OpenImage-O`;

+> > Covariate-Shifted ID: `ImageNet-C`, `ImageNet-R`, `ImageNet-v2`;

+> - [x] [ImageNet-1K]()

+> > Near-OOD: `SSB-hard`, `NINCO`;

+> > Far-OOD: `iNaturalist`, `Texture`, `OpenImage-O`;

+> > Covariate-Shifted ID: `ImageNet-C`, `ImageNet-R`, `ImageNet-v2`;

+

+

+Note that OpenOOD v1.5 emphasizes and focuses on the last 4 benchmarks for OOD detection.

+

+---

+## Supported Backbones (6)

+This part lists all the backbones we will support in our codebase, including CNN-based and Transformer-based models. Backbones like ResNet-50 and Transformer have ImageNet-1K/22K pretrained models.

+

+

+CNN-based Backbones (4)

+

+> - [x] [LeNet-5](http://yann.lecun.com/exdb/lenet/)

+> - [x] [ResNet-18](https://openaccess.thecvf.com/content_cvpr_2016/html/He_Deep_Residual_Learning_CVPR_2016_paper.html)

+> - [x] [WideResNet-28](https://arxiv.org/abs/1605.07146)

+> - [x] [ResNet-50](https://openaccess.thecvf.com/content_cvpr_2016/html/He_Deep_Residual_Learning_CVPR_2016_paper.html) ([BiT](https://github.com/google-research/big_transfer))

+

+

+

+

+Transformer-based Architectures (2)

+

+> - [x] [ViT](https://github.com/google-research/vision_transformer) ([DeiT](https://github.com/facebookresearch/deit))

+> - [x] [Swin Transformer](https://openaccess.thecvf.com/content/ICCV2021/html/Liu_Swin_Transformer_Hierarchical_Vision_Transformer_Using_Shifted_Windows_ICCV_2021_paper.html)

+

+

+---

+## Supported Methods (50+)

+This part lists all the methods we include in this codebase. As of `v1.5`, we support **more than 50 popular methods** for generalized OOD detection in total.

+

+All the supported methodologies can be placed in the following four categories.

+

+![density] ![reconstruction] ![classification] ![distance]

+

+We also mark the supported methodologies with the following tags if they have special designs in the corresponding steps, compared to the standard classifier training process.

+

+![preprocess] ![extradata] ![training] ![postprocess]

+

+

+

+

+Anomaly Detection (5)

+

+> - [x] [](https://github.com/lukasruff/Deep-SVDD-PyTorch) ![training] ![postprocess]

+> - [x] []()

+![training] ![postprocess]

+> - [x] [](https://github.com/lukasruff/Deep-SVDD-PyTorch)

+![training] ![postprocess]

+> - [x] [](https://github.com/lukasruff/Deep-SVDD-PyTorch) ![training] ![postprocess]

+> - [x] [](https://github.com/lukasruff/Deep-SVDD-PyTorch) ![training] ![postprocess]

+

+

+

+

+Open Set Recognition (3)

+

+> Post-Hoc Methods (2):

+> - [x] [](https://github.com/13952522076/Open-Set-Recognition) ![postprocess]

+> - [x] [](https://github.com/aimerykong/OpenGAN/tree/main/utils) ![postprocess]

+

+> Training Methods (1):

+> - [x] [](https://github.com/iCGY96/ARPL) ![training] ![postprocess]

+

+

+

+

+Out-of-Distribution Detection (38)

+

+

+

+> Post-Hoc Methods (22):

+> - [x] [](https://openreview.net/forum?id=Hkg4TI9xl)

+> - [x] [](https://openreview.net/forum?id=H1VGkIxRZ) ![postprocess]

+> - [x] [](https://papers.nips.cc/paper/2018/hash/abdeb6f575ac5c6676b747bca8d09cc2-Abstract.html) ![postprocess]

+> - [x] [](https://papers.nips.cc/paper/2018/hash/abdeb6f575ac5c6676b747bca8d09cc2-Abstract.html) ![postprocess]

+> - [x] [](https://github.com/VectorInstitute/gram-ood-detection) ![postprocess]

+> - [x] [](https://github.com/wetliu/energy_ood) ![postprocess]

+> - [x] [](https://arxiv.org/abs/2106.09022) ![postprocess]

+> - [x] [](https://github.com/deeplearning-wisc/gradnorm_ood) ![postprocess]

+> - [x] [](https://github.com/deeplearning-wisc/react) ![postprocess]

+> - [x] [](https://github.com/hendrycks/anomaly-seg) ![postprocess]

+> - [x] [](https://github.com/hendrycks/anomaly-seg) ![postprocess]

+> - [x] []() ![postprocess]

+> - [x] [](https://ooddetection.github.io/) ![postprocess]

+> - [x] [](https://github.com/deeplearning-wisc/knn-ood) ![postprocess]

+> - [x] [](https://github.com/deeplearning-wisc/dice) ![postprocess]

+> - [x] [](https://github.com/KingJamesSong/RankFeat) ![postprocess]

+> - [x] [](https://andrijazz.github.io/ash) ![postprocess]

+> - [x] [](https://github.com/zjs975584714/SHE) ![postprocess]

+> - [x] [](https://openaccess.thecvf.com/content/CVPR2023/papers/Liu_GEN_Pushing_the_Limits_of_Softmax-Based_Out-of-Distribution_Detection_CVPR_2023_paper.pdf) ![postprocess]

+> - [x] [](https://arxiv.org/abs/2309.14888) ![postprocess]

+> - [x] [](https://arxiv.org/abs/2301.12321) ![postprocess]

+> - [x] [](https://github.com/kai422/SCALE) ![postprocess]

+

+> Training Methods (12):

+> - [x] [](https://github.com/uoguelph-mlrg/confidence_estimation) ![preprocess] ![training]

+> - [x] [](https://github.com/hendrycks/ss-ood) ![preprocess] ![training]

+> - [x] [](https://github.com/guyera/Generalized-ODIN-Implementation) ![training] ![postprocess]

+> - [x] [](https://github.com/alinlab/CSI) ![preprocess] ![training] ![postprocess]

+> - [x] [](https://github.com/inspire-group/SSD) ![training] ![postprocess]

+> - [x] [](https://github.com/deeplearning-wisc/large_scale_ood) ![training]

+> - [x] [](https://github.com/deeplearning-wisc/vos) ![training] ![postprocess]

+> - [x] [](https://github.com/hongxin001/logitnorm_ood) ![training] ![preprocess]

+> - [x] [](https://github.com/deeplearning-wisc/cider) ![training] ![postprocess]

+> - [x] [](https://github.com/deeplearning-wisc/npos) ![training] ![postprocess]

+> - [x] [](https://arxiv.org/abs/2305.17797) ![training]

+> - [x] [](https://github.com/kai422/SCALE) ![training]

+

+

+> Training With Extra Data (4):

+> - [x] [](https://openreview.net/forum?id=HyxCxhRcY7) ![extradata] ![training]

+> - [x] [](https://openaccess.thecvf.com/content_ICCV_2019/papers/Yu_Unsupervised_Out-of-Distribution_Detection_by_Maximum_Classifier_Discrepancy_ICCV_2019_paper.pdf) ![extradata] ![training]

+> - [x] [](https://openaccess.thecvf.com/content/ICCV2021/html/Yang_Semantically_Coherent_Out-of-Distribution_Detection_ICCV_2021_paper.html) ![extradata] ![training]

+> - [x] [](https://openaccess.thecvf.com/content/WACV2023/html/Zhang_Mixture_Outlier_Exposure_Towards_Out-of-Distribution_Detection_in_Fine-Grained_Environments_WACV_2023_paper.html) ![extradata] ![training]

+

+

+

+

+Method Uncertainty (4)

+

+> - [x] []() ![training] ![postprocess]

+> - [x] []() ![training]

+> - [x] [](https://proceedings.mlr.press/v70/guo17a.html) ![postprocess]

+> - [x] []() ![training] ![postprocess]

+

+

+

+

+Data Augmentation (8)

+

+> - [x] []() ![preprocess]

+> - [x] []() ![preprocess]

+> - [x] [](https://openreview.net/forum?id=Bygh9j09KX) ![preprocess]

+> - [x] [](https://openaccess.thecvf.com/content_CVPRW_2020/html/w40/Cubuk_Randaugment_Practical_Automated_Data_Augmentation_With_a_Reduced_Search_Space_CVPRW_2020_paper.html) ![preprocess]

+> - [x] [](https://github.com/google-research/augmix) ![preprocess]

+> - [x] [](https://github.com/hendrycks/imagenet-r) ![preprocess]

+> - [x] [](https://openaccess.thecvf.com/content/CVPR2022/html/Hendrycks_PixMix_Dreamlike_Pictures_Comprehensively_Improve_Safety_Measures_CVPR_2022_paper.html) ![preprocess]

+> - [x] [](https://github.com/FrancescoPinto/RegMixup) ![preprocess]

+

+

+---

+## Contributing

+We appreciate all contributions to improve OpenOOD.

+We sincerely welcome community users to participate in these projects. Please refer to [CONTRIBUTING.md](https://github.com/Jingkang50/OpenOOD/blob/main/CONTRIBUTING.md) for the contributing guidelines.

+

+## Contributors

+

+  +

+

+

+## Citation

+If you find our repository useful for your research, please consider citing our paper:

+```bibtex

+# v1.5 report

+@article{zhang2023openood,

+ title={OpenOOD v1.5: Enhanced Benchmark for Out-of-Distribution Detection},

+ author={Zhang, Jingyang and Yang, Jingkang and Wang, Pengyun and Wang, Haoqi and Lin, Yueqian and Zhang, Haoran and Sun, Yiyou and Du, Xuefeng and Zhou, Kaiyang and Zhang, Wayne and Li, Yixuan and Liu, Ziwei and Chen, Yiran and Li, Hai},

+ journal={arXiv preprint arXiv:2306.09301},

+ year={2023}

+}

+

+# v1.0 report

+@article{yang2022openood,

+ author = {Yang, Jingkang and Wang, Pengyun and Zou, Dejian and Zhou, Zitang and Ding, Kunyuan and Peng, Wenxuan and Wang, Haoqi and Chen, Guangyao and Li, Bo and Sun, Yiyou and Du, Xuefeng and Zhou, Kaiyang and Zhang, Wayne and Hendrycks, Dan and Li, Yixuan and Liu, Ziwei},

+ title = {OpenOOD: Benchmarking Generalized Out-of-Distribution Detection},

+ year = {2022}

+}

+

+@article{yang2022fsood,

+ title = {Full-Spectrum Out-of-Distribution Detection},

+ author = {Yang, Jingkang and Zhou, Kaiyang and Liu, Ziwei},

+ journal={arXiv preprint arXiv:2204.05306},

+ year = {2022}

+}

+

+@article{yang2021oodsurvey,

+ title={Generalized Out-of-Distribution Detection: A Survey},

+ author={Yang, Jingkang and Zhou, Kaiyang and Li, Yixuan and Liu, Ziwei},

+ journal={arXiv preprint arXiv:2110.11334},

+ year={2021}

+}

+

+@inproceedings{bitterwolf2023ninco,

+ title={In or Out? Fixing ImageNet Out-of-Distribution Detection Evaluation},

+ author={Julian Bitterwolf and Maximilian Mueller and Matthias Hein},

+ booktitle={ICML},

+ year={2023},

+ url={https://proceedings.mlr.press/v202/bitterwolf23a.html}

+}

+```

+

+

+

+

+

+

+

+[density]: https://img.shields.io/badge/Density-d0e9ff?style=for-the-badge&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAADIAAAAyCAYAAAAeP4ixAAAABmJLR0QA/wD/AP+gvaeTAAACuElEQVRoge2Zu2tUQRSHv9UomO0SFKNBrJQkFnY2Ij6iJig+EMRKbIUk+B9YWxn/gdSCIIIkgmiChY3BwkZN8IVoIgQECw0KSSxmxj1z9e7eOTObXeR+cGEm95wz58e8zt1AScm68AR4a58DLc4liI5Mfxew27a3rHMuUWxodQKpKIW0G6WQdqMU0m5k75GUdAJHgb3AGjAHTAPLTRzzD+/toGvAYWWMCjAGLIlY7lkCRq1NU4kVUgEm+FtA9pmgyWJihdzCT/gbMAU8sG357maCfHOJETKEn+hjoEe877F/kzYnIvPNRSukM+P7DKj+w64KzAq7dzSpONUKGRV+P4GBOrb7rI2zv6rKtAEaIZuAD8JvvIDPOP6sJL8GNEIuCJ9fmG+aRvTiz8rZ4EwzpLjZL4v2HeBjAZ9PwF3Rv5IgD4/QGenGzILzOR4w1kn8fdUVlGmG2BkZxuwRgAXM8VqUR8AX296MOb7VxAo5Jdr3gdUA3xVgMidWMDFCNuIvpck8wzpInyESnl4he+SQsP2BuRRDqWKqYRfnoCIGEDcjw6I9gxETynfMb2kO9fKKESLrpKmIOHJ5Jau9ii6tbsxmdbZ7IsbsE3FWgK2aINoZGRS+n4F5ZRyAV5gL0uVzRBMkRojjoTKGZFq0j2kCpBAScgnmIWMM5loFUGSPDAibVfyPJy07bCwXtz80gGZGzov2LLCoiJFlAXgu+udCA2iEyJL7nsI/DxkruqxvtLR24i+BvtgBBf34S7Y3xDl0Ri5R+xlnHnN0puIltWO8AlwMcQ4R0gGMiP7tkIEKImOOYApTFfWW1jXxbhnYrh2kDtswNZsbZ6yoY72y+TS10qMLuC7evQbOhOVYmDlgv23fwFTVX21/EfPd0xA5I+34zOQl/t/+W+Ep8KYViRTkRasTKCkpKUnDb6XM8jMAxEX4AAAAAElFTkSuQmCC

+

+[reconstruction]: https://img.shields.io/badge/Reconstruction-c2e2de?style=for-the-badge&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAADIAAAAyCAYAAAAeP4ixAAAABmJLR0QA/wD/AP+gvaeTAAADj0lEQVRoge3aS2tdVRQH8F80bS5Y2yYx1qEFrYpSMxBHKjrRFEV8oFB1Wuqotv0A+h3EfBQpitLGRGhsrQ9Ek0adVIUKWvARoxWvg7U35yTcpLnnnnvuLfQPhwX7+V/7sfbaax9uYLgw0kAf83gYf+IyvsfXWMBHuNIAh1owj/Ym3794D69hbFAEu8EOjOM+PIc3cRprCqV+xEm06uhwq9Hb+NWBvTiC86V2v8WhXhtuWpEyZvBZqf1ZNc1OGVM4q7+KwM14A6upj3PYV1fjd2IlNfyd/iqScRDLqZ8V7O+1wduwlBr8RIxOE4oQxmFBMYB3VG2oJaY2T/GtKb0pReAWxZI+p+KeeUcxtVOl9CYVgUnFMpvttvLT+E/Y+ekNeU0rQuyZbABmtltpp2Jzn+iQPwhF4LhihWxriZ1IFb7CaIf8QSkyis9T38evVXgHfkqFNztd54WzNwgcEtx+cA3f7HAq+EUDpKpgBJ8Kjq9sVfCDVOhIA6Sq4qjgeGqzArvxD66Kw2hYMY6/Bc89nQq8KDQ90yCpqjgjuD6bE24qZT6U5OkmGVVEHuxHckJZkYNJftkYnerIxujenFBW5O4kv2mMTnVcTPJATigrsjfJXxqjUx2Xk5zolJnvz9dDEGBMcF3LCeUZyaGhQbgfPaOsyB9J7hoEkS6xO8nfckJZkbw3arsj9xG3J/lrTigrspLkAcOPe5LM1mudItnsPtgYnerIZ95STigrspDk443RqY4nkux4nRgXsdg1xZkyjJhQOI1506+bkSv4UNjolxql1h1eFtfx95Ws1ka8Ks6R8w2R6hYjuCA4Ht6q4Ji4RrbxVP95dY1nBLdLtuGBnEyFL4hY7LBgVHi9bRzbToWWCO23RUB5WJAHeFkX/mCOVqwqbPYgMY2/BKcnu608qxiByXp5dYUpRcDw7SoNlIPYZ0VAuWnswmLisKiHK8aU9c8KU1sXrxUT+FjxHNezM7tfMbXLmvHFpkt9XhSPTbVgn2KZrYrYa6e4cK8YFdYpb+xFhcteG1oKA9AWAeWeX18TRsRTRj4n8sbu67V7RjHtbRGLPapadHICryvcjryUujaxVdESyyu7M23hkc7hLTyP+4XZ3pm+STyAF1KZuVQn178kTuyBBD/GRFT8lLgCbPeNPn9X8a5wAHtSoM6favbgMTwqftW4S5jr/Ij6O34WpnRJvLPM2cIVv4HrGf8Dfs0JOaMPQmgAAAAASUVORK5CYII=

+

+[classification]: https://img.shields.io/badge/Classification-fdd7e6?style=for-the-badge&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAADIAAAAyCAYAAAAeP4ixAAAABmJLR0QA/wD/AP+gvaeTAAAD3klEQVRogcWZu09UQRTGfwILhTw0cQFRG0tQEwsrG4ydiQExFsQEY6h4KJLYU6M22qGxImpjYvwHLBQBDSq6KqBg1MSOGOWlaHAtztnMZbl79965j/2SCRvuzPnm3Jlz5ptzwaAKuABMAivaJoB+oJLoECvPHmAayBZoL4GmsCRx81Q5jL8D2oAabe3AjD57Qbg3FjvPRTXwHqhzeV7nIOmzIUiK55kObvPoc0r7TNgQJMWzrINrPPrUap8lG4IkeMp0YDFsc/S3xZ8APIFRhuxZgOMe/XLPMrZEyP73y/PWhqAfWZUZ3INwBzCnfbpsCBTdAXh6bQgqkfydBWaRgKvV1uEwvg402hAoUsCYD54p7WuFJowzbm1d/2aAelsSoAF44sEzBewOYR+QlekDxpEMs4qkwS5kJTJE48w+tbOhHMvAU2Q7Wa9EEKSBN5h9bvvmLquNBxHNywppzMrMYufMax3fHuG8rFDPZmeCiLzDOm4R0V4lRwMi/HLayZnNUkAncBdYQOJgDZhHzocscCPJyRZDA+KE05l24AuFs1KuLQAnk59yYTRinFnETDSDqN1mYLu2FmAAkzD+AcOEkCVRoxHjxG8kjXppsjKgR/tmgStxT9Av2jFOtAYY14pxxkvaJ4IUJiZ6LMb36thPRFsXCIxOTEzYSPxyTCo/E2YiYe4XYDLPLSR4g2IDuK2/T4ecSygsIG+zOYSNFkxKdkMKuQKMIUklljLVik6iOoSNGrWx6vIsjSjiuMtUrFH8Hl4MOUd+5f2/AnhF/GUqIJqtdQBzQE4DI8B5YIhkylQA3FNDAyFsDOItZ+IuUwGSabKI7LBNvzkheQ44ClwC7mAcibtMBcjenMf+QMwVPj6z9Xa4RHFH6ojIkQrgPnYS5RimFuCmhCcpfgHr0D7jAXi3IA08wgSqUzSWe4wrR1Yi58RwgX5BylRW5SOAI8BXNfIN2dtDGIcyyF5vQc6YaiQ7DWJiIgtcpbCM91umsi4fdWNU62M23w5PKGmxi5Xf4kOxMpVr+cjtS9IkssRV2kYcRq7j/iZSyNsbRc6AZbX1Abn+9gF/ka2134cz+WUqz/KRny9JueBbBc76mIAXbqqt0ZB2NsHvl6QskmoPRcC5F5EkG8DBCOwBwb4kWWcHF1xTmw+jMhjkS9JYVKTALuAnJmhXCSnRg3xJ+h7UuAfSSAKITKInKgUUFcBztfkRORdqCSnRE5MCDvSovTnkhM6HlURPRArkYVztdXj0CSzRY5cCLvihNnd69LGS6FZSIARijctAUiAkShGXsaAUcRkLShGXsSHpuIwVkcXlf7gl3GNHJu+DAAAAAElFTkSuQmCC

+

+[distance]: https://img.shields.io/badge/Distance-f4d5b3?style=for-the-badge&&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAADIAAAAyCAYAAAAeP4ixAAAABmJLR0QA/wD/AP+gvaeTAAAB3ElEQVRoge2aO07DQBRFDzT5LABFNLRIFIiSgoYC0SGxAgoaPoVXQ4OggR2AxH8XSBErgB4huoTCfnjk2HHi+fiNlNMkhT2ak+vnGzmBBc7ZAo6ApbY3YsMO8A2MgUtgud3tNGMX+CGVGGWvF0SWjJnENbAP/BJZMkUJ2fQeEcmYl9MNk5s1k1F7mZlJjIEPYLXkONXJmEncAsPs/RAYlByvMhlT4or0Ex6Qy5xXnKdKZtpMDICzmvNVyMw6E3W0OjPzzkQdrSRT1hMrwDsRJVM3E7bJHJBfqodWO52CKVG1WRuZLvCQnfsJrFnut5R5ZmKWW2+RLvBILrFuv+VJmvTELLdeIbhEk56oI4iEq56oogPcZWt/ARsO1/7HdU8UCZ6Ey54QOsA9AZPw0RNd4AkFM2GTTPAkfPREjwBJ+O6J4BI+eqIHPKNgJmxQNxNNCJ6Ej57oAS9E3hNqZsImmeBJ+OoJkYi6J7xLbDIpIbjqCe8SkD6ZkJk4drx2MAkhIf2hZQScOFozyGCX4VKmNQnBlDltuEbrEoKNjBoJoYlMH2USwjwyfeAVhRKCKVPVJ+olhGky0UgIZTLRSQgJ+T8UEuCNgI3tGklGvtJElUQRkYlaQtjG7UOIBQB/hf9HJ+Iv7O8AAAAASUVORK5CYII=

+

+

+

+

+[preprocess]: https://img.shields.io/badge/PreProcess-f4d5b3?style=social&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAABAAAAAQCAYAAAAf8/9hAAAABmJLR0QA/wD/AP+gvaeTAAABDUlEQVQ4jc3TPy8EURQF8N8uS/wJGxuh0tH7CBKthk/gk6iIQiFRSEhEFEQhGoSQbERUEo2SGp1CwTa7infJZE3sbuckr5j75p5z7pk7/BesoIZGm6eG5SxBDSMdCFbwmS002mi6xU1zT7ED1fpfQtmLAexhtAVhI++hGyd4wD3KUS/jUJr9G8P8HmETBUzjGqdSuMeYwno4PMMjZrMOlnCHwagVsI23UC9iHNWoz+AlS/CEsSZHXTjABvpwiZ0YdSsc/hBMykcJEziXwi0FSTXGQVqkSl43ekNpHz1BcoV+YQXW8BwvZLGKVymPRexKoc7hQ1y0whHepXzqWJBZ41abWJA+3xAuMK/pH/gCPJhBnIabIDQAAAAASUVORK5CYII=

+

+[training]: https://img.shields.io/badge/Training-f4d5b3?style=social&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAAGAAAABgCAYAAADimHc4AAAABmJLR0QA/wD/AP+gvaeTAAAH5UlEQVR4nO2dW2wVRRjHf6WVwgNVlKCERJDihUiQxhvGFjCgFEXig8QgiSHGywOCD14wmpgYiakEEyMGNUEgImBQ4+VBJdqICMGgKAhYvIBGgaIhEAVLKbTHhzkbjqczszOzs2d3YX/JvJye/c+337dnZvabSyEnJycnJycnJycd1AILgQNAwbIcKF7bt+JWn0EsxN7x5aWl4lafQbQTPQDtFbfakaqkDZBQ8KSTxnvrRZ+kDTjbyQOQMDUxas8EpgCHgSXALxH1VE1K1CarHngIGAh8Cqz2oJkoNZy+iaD8CzQbXFuPumNVofr+KIP6JgHHyq5bTbwPZqzUAG8hd8hx5EEYDMwFNiuucw1AAfgWeAQYKrluEtChuG4NUG10xylC53xZEIYjmqbOkGsKwClNvacMru8CliJ+YaB3fukvITNBMHF+aRDeRjjFdFz/nabu7RY6J4F3CHd+poJg43yX0onozFU0AydirH8VKQ5CnM5vAxYDYwzsGFP87q6YbHmTFAbBxPnfY9bGB2ULMAcYEsGuC4EHgC+BHsN6O4u2ZiYIJs7fDNQBU9EHoRtYC4yNwc7LgWXo+5vOoo11hI/EVpKCIFTRe5yvcn6AKgitwJUVsHk48K6k/sD5ASZBWFEBe7XMxM75ATcCWxHNwjbgrkoYW0YzsBE4AmwCxkm+YxKEGZUwVsUKhVFBmy9zftaoQ98nLPNVkUsy7rDmb5cBTY62pIkmxL2oOFQpQ2SMROR2dJ3atMSsi8409IOGo8AliVlXZAr6t8kTwITErHOnEf1LXQcijZEKJqP/JWxLzjRnviIjzg+4id7p3NLxfSamBUv4hww5P2A5cqN3JGmUIxuR38vaJI3SMQR1XzDLg35fYCLwHKJ5+IvTv64/i5+9BIzHz/RqM/J76UK8zKWORcgNXk+05qc/8DB2i7N+B54E+kWoF+A9hf7LEXW9MxB5+98DXBVB91bcVsUFZQ9we4T66xHzBrJ+4IIIut65H7kD3nfUqwLmI5oXV+eXlhbcf4WqXNeDjnqx0IrcSFl+JYwqxOu9D8eXltdx6xsaFHqfO2jFwhDkc7G7HPWelWj5Ks842iSb6uxGPsEfCy6rlB93qGe6ge5HwGxECmBA0bZRxc/WoZ946cZsSUw5TxjYFZRYVmW7rFIeaVlHP0SnqdJrA6410GkEdmp09mE/Ohql0VMVr6uybUcifzjU8ZRG7zPE025KHep+qQDMs7StCjio0ZOV/ZZ1aLGN/nJL/T6IsbtMawdu8woDgN0Kzf3AOZZ6qxRauuINm0pPAtdb6k9QaPUgZs9cGaexc7ylVhNmC78SC8ApxNM63UF/sULzk6iGFzVk2gsctO5EjO5M30+8EXcFqtTvPR6071Vob/KgHZD5AASJtfJyhQftkQrtgx60AzIfAFXb2t+D9gCFdpcH7QAv/klyh4zKWB9r81WrqVO3IyhJg44oPh/sQVuVu090NYOMJAOwR/G5j2UtqiGxqs7EcAlA+TB0O265FlVm0ceKubsVn29x0LoDMQwt77Nix+bl4wRmy8hLUb2IFYDrItjdhDo5Z/uCdw3yyZlE3wNUxXbKri/qfNNu7PJAAecCPyg0f8V+guZVhVZFAmCbjGtzqGOeRq8Vu3xQWDJuvoN9ezV6suI1Gfe8ZeUF7DdW9EMYrdLbiUg1h3ED8KNG52fEHIINIzR6quI1HV2LCILOQeVljkM9zejzLD2ISZfZiBx9LeJpvxSRclgXYlMPYqLflsdCdMuf/BYqeExOPfKOzmWUAWLa0PZpMy1PO9okW57eQ4rWB6k2MTQ4aPVBTKD7dv5Spz
tTp7TXO+rFgqoDjbKMbz7mG+vCmp0W3PdzfajQvc9RLxYGIzZdlxvZDYyOoHsbotN0df4+4JYI9TcgfwiOAedF0I2FJcid0BpRtxbxa/hNoa9y/FyiLU2sAjYo9BdF0I2N4ai3gPpIKVQhhqEvIiZT2jk9YmovfrYAMdVoO98rYxbye+kALvKgHwtrkRu9PUmjHGlDfi/eNuX5ZgryfqCASF5laYNGDeoBwDFSuOXqZvT7xLYmZ5ozupNXUhWEyeid30m0pSVJ0Yh+h2QqgjAC9b6wwPlTlVenn7CzLf4GhiVmHfCCxKgzxfkBYUFY6Ksilxkx3S6Rn/C79iYpNiHuRcWgShkiw/WwjkbE3uECYopvFpUfJc1AHH/WDXxDRg/rqCJ80arpcTVfEM85QeWMRj5Z08H/0xaZOK4GxJh5DWZBCGtPe4APcNvaFEYD4pA+3XxDcJJjZg5sCqgm/OAm2yPLdgCPIkZargxF5IS+tqj3OOFHlqXK+QEmQXAte4HXgKsN7BiLWBSg2hsQtaTS+QHVuG1kMC0n0S9/vxW7M0jPKOcH2AShA3ECoW3TpCKs6SgtXYjkoSp/lUnnB1QjHBvm/ODUkaGINLPuyJugRD26uBMxdxHM54blsTLn/IBqhOFhzi/lfMQO9A3oRysqdE7cjOiQZQt+J6EOfiadH1BN74P9jmJ23s7F+AtAveaagIn0PiNoBRV0fpxvojMQ7wCHgFcQSwNNUDnb9h84mN7bMMR6pkHAx4iDxStGGidMdE+7DWm8t16kbsfI2UYegIRJYwB8/BM2l2MTEiGNAVjpQeMNDxpnLS6rsoOyH3HAX/7PPHNycnJycnJycnJycnJycnJyUsd/Xk5Gaglg9FgAAAAASUVORK5CYII=

+

+

+[extradata]: https://img.shields.io/badge/ExtraData-f4d5b3?style=social&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAAGQAAABkCAYAAABw4pVUAAAABmJLR0QA/wD/AP+gvaeTAAAGYElEQVR4nO2d228VRRzHP6fl2ipFvECN1gpoQR6MVEXFN28hkQdS0FdFRf0P8E8QjXh5ML6aiAFvKD6p9U2lGkuJxEtiI1ClRWy5lYrc6sNvT/acPbN7dk9nd6dnf59ksifl7G9+85uzM7PznWFKuEcL0A3cCtwE3OJdrweu8dISoN37/kJggff5PPCv9/kcMAGc9K7/AH8CR4AR4A/gMDCdamkSUso5/4XAWuBe77raS20Z5T8F/AL8DBwAvgcG8Ss1c7KukPnAeuAR4GHgTmBuxj7U4yIwBPQDXwDfABdy9cgy7cCTwIfAJNJEzKY06fn+BBk8uWk+IeuBF4FNJC/IaeA3/Pb+CDAGjCP9wQRwxvtuZb9R2Z904Pc31wLLkP7oZu+6CliU0K8p4GPgbeDbhPfmwhzgKeAg8X+Bx4BPgO3AY0BXhv52eXlu93w4lsDvg0hZ52Tob2xagWeAYeoXZBzY7X2/Ow9n69ANPAvsQXytV55hYCsSAyfoBQaoXwnvAhtxrxOPogV4EHgDGCW6jEPAA/m4KSwA3gKuYHbwCvAV0Iejj3VC5gKbkdFXWJkvA28io8lMuQ0Zr5ucugS8B6zJ2qkMWYOU8RLmGAwCK7Nyphc4EeLIZ8DtWTniAD3APsyx+Bt52U2V+4BThsxHgMfTztxhNiIxCMblFBKzVLgR+MuQ6afIOL/odCAjs2B8xpB3H6u0IvM8wcxeI//5MJcoATupjdMAlofFLxgyedlmBk3GDmrj9bwt421IBxVsplpsZdCEtCADnMqYHUemdmbMloDhM0CnDcNNzlJkTq4ydn02DO8OGN1hw2hBeIXq2L1vw+ihgNF7bBgtCOuojt1PNowGH7urbRgtCIuobe4jidMxBzUDHebGJxjfuj/mRkZKPQ3cU1QSx6qRCtnSwD1FZXMaRoMvOGeRaRQlmmXU9r/TNgybZjL3oS+GUbQQPgs8Y8IUMn0fCcc0dZJ6hUwjiqE+KT4l4FWiYzZjooxPI4vJltrIaJZzHeHNVKoVYuqoRhCRpqiECVSZdOprEdElrLNfZSPTWcJq4HPMsRgF7jL8fcaYDK4gfJHDZWAXzb/IYRdSVlMMfgSWe9/NpEJAlrq8TvQyoH7kRXI2rcUKYx6yvvdrosu8k+plQJlVSJm7gf0hDpbTBLNzoVwr/kK540SXcRDzQrnMK6Ts+FbiLSWdAD4AnsN/rF1iObANWfF+kvrlGQaeJnz4n0uFlCkvth4y3BeWRoG9wEvABmRtbRYzyiUvrw1e3nsJH6yY0hDxFlsnqpA4BQ8aiRus+5HFEX3428/iMom/HeGodx3F36I2jqx5AtlMc8773I609eBvRVjife5EKqC8HaEHuKoBvz4C3gG+i3lPo/GLNDiTR64NmfXcg0xMxv0FupLOIjJ2H40tUnDmCTExD3lyHgUeQsbp8yLvyJ4LSAfdD3yJbMy5OAN7ieKXdYUEWYBUyjrvegfyYpm0KWmUSeBX/E2fA0hl/Gcxj1lVISZKyM6mbu/ahWyLvoHqbdFlOXQ+/pa5KfxgnqV6W/QJZFv0US8dRvqmtEm9D0m6L6/ILCZhH6Kaerqopu4Yqqk7hGrqDqGaumOopu4Iqqk7hGrqDqGauiOopu4Iqqk7gGrqDqCaugOopo5q6lVO1SNoRDV11dRzTaqp54xq6qimHu8fGzFoAdXUYxisTKqpx0c1dcdQTd0xVFN3CNXUHUI1dcdQTd0RVFN3CNXUHUI1dUdQTd0RVFN3ANXUHUA1dQdQTR3V1KucqkfQiGrqqqnnmlRTzxnnNfXTVKuEHcT4L7Nj0uyaegd+0woS
y8VRN8Q5zm6E6pe8HuCHxK6ZOY+0xZXtcTNp6sFZi5F6N8SpkENUV8gW7FWIiWkkUFksQEiboGJo5XSEzdR2cqoY1sekGG6yYXghtW+pqhhGY1IMrR15BHKgVXD4pophOCbFcJvNDFoxH0C8Ez1PpJISMuEajNN+UjhNuhPzwZKqGAphiuEYMkpMhaijV1UxrI1LqkevlllL7bmGlZ29KoaSMjmcuMxKRBUzOaKKocRmRdZOzUcOcw9zqoiKYW4H3FfSi3kEVpmKoBgewKwY5kKRFcPfEcXQ+rDWBqoYWnAqLVQxdJSiK4aJyHrao4iKYSLynodqdsUwMXlXiIlmUgwVRbHK/zvve/uixi6eAAAAAElFTkSuQmCC

+

+

+

+[postprocess]: https://img.shields.io/badge/PostProcess-f4d5b3?style=social&logo=data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAAGAAAABgCAYAAADimHc4AAAABmJLR0QA/wD/AP+gvaeTAAAFXElEQVR4nO2dW2gdRRjHf7bRIiKiVNSK2IqkCV4ajdfUqEWrSOKt1KpVUi+NN0SliD5oVfClCj4Vi/oo6oNI38UHxUbEB29QwUtVvEWbqmlMTc31+DCNrbDz7ew5szu7O98Pljxk58+3//85s7O7s3NAURRFURRFURRFURRFUVpnAbAGeAP4FpgAGgG3F4HDcj3iEnEh8BlhDY82hJuA/YQ3O8oQVgL/EN7ktO0VTBdZK44AdhHe3GhDuJ/wpkbdHb1H8kH+AQwAxxZYy3JLLbUNoQ2YJvkA+wPUkyWAWoRwMskHtpcwB5Y1gMqfE2wH/GXJ6ildCJVNvEmGgc+F/w8CWynwWxtbAOPAKuBjYZ8HgJcpyJvYAgAYBVYjhzAIvEQB/sQYAJQohFgDgJKEEHMAUIIQYg8AAoegARiChdDmU6wCzF+gNcvggb/3AXOtl6PfgGYYBF7wJaYBNMc1voQ0gMBoAIHRAAJT1wC+wtzRbHXryLvQugZQGTSAwGgAgdEAAqMBBCa2e0FZmR9N5YZ+AwKjAQRGAwiMBhAYDSAwGoAfjgc2Ax8Bu4ExYCdmlt1ZRRRQtrmhRXInxnDbfNNZYBvmBZbciDWAZ3Cf+PsucFRehcQYwNNkn309BBydRzGxBfAozU1/zy2EmAJ4jObNP7Q78npOiCWATcjG7gJWAIuB7Sn7bvNZWAwBPISZjGUz9Adg6SH7LwReF/afBbp8FVf3ADYim/8jsCyhXVoIW30VWOcABkk3/zShfRv27minryLrGsBdmK7CZv7PwOkOOost7cd8FVrHAAaQzR/GHLcLZ1g09vgqtm4BrAdmsJv/G9DpqHUi8IVF50NfBdcpgHXI5o8AZzpqLcF4YNN6ylfRdQlgDTCF3bBRoNtR6xTga0FrHDjBV+F1COB6ZPP/BM5x1DoVs0ybTavBwZc9vFD1APqBSeRP/nmOWsuA7wWtBvCcx9qBagdwFfISa2OYNfBcWAp8J2g1gOc91v4fVQ1gNbL5+4BLHbXagZ8ErQawxWPt/6OKAfRiDLaZ9TdwuaPWcuAXQStX8+cLqFIAKzGjEMn8VY5aHZiLMsn8zR5rT6RKAfQAf2E3awK4wlGrE/hV0GoAT3is3UpVArgI2fxJoM9RqwtzK8GmNQc84rF2kSoEcC5mLC+Zf20Grd8FrTnM84PCKHsAXZjVG22GTQHXOWp1p2jNAQ96rN2JMgewAvnTOgPc7KjVgzwHaA6z4lbhlDWAs5H76RngVketS5DPH7PAHR5rz8QSS1FjhFuPswN5hDID3Oao1Yts/gywwWPtmSnbwq2dmHv2kmG3O2pdibkusGlNA7d4rL1ppKWLNwDHFVRHO/KFUZau4mrkH56YAtZ6rL0l7sVeqI/NhWMwz2mlk+Tdjlp9yEvxTwI3OmoVwuHAN4QNoE9on2WE0od8k24SuMFRq1B6yO8HHFzYYmmbZWzen3IMk7hfMwRhLfn8hIkLQ5a2zzq2X4f8VGwC8/yg9JwPfEKxARyJ/ZPrMoNhPfaRXAMzEnK9SVcKFmD6ydcw54ZWf8Yqjcss7UZIvxYZQJ4JMX5AXxF4kmTztqe0S5v9tg/3ZwNR8zbJBm4S2mxENn8vcHF+JdeHhdhvkl2QsH8HZlKUNOl21NJWSaAbe/exCDM38x7gVcxs5rTzjZqfkYdJNnI/8k20pG0PHl+ciIW38DPUHcHcwlYykjYrwWXbTUFvtdeNdlo3f5gClqhMog4rZvU20WYas1T9ELADeB8z5CycWAKYAD7FGP4BxnBvrwfFTtI08DHgHeBxzLPcRcGqqzkncbAPfxMzHO2m
Qsvw/Atv0E+fkVDOBwAAAABJRU5ErkJggg==

diff --git a/OpenOOD/bash_allocation.slurm b/OpenOOD/bash_allocation.slurm

new file mode 100644

index 0000000000000000000000000000000000000000..ad5e8f33387306f5ef91cfa71eba054ed7b609db

--- /dev/null

+++ b/OpenOOD/bash_allocation.slurm

@@ -0,0 +1,16 @@

+#!/bin/bash

+#SBATCH --job-name=zzzz2

+#SBATCH --output=output2.txt

+#SBATCH --error=error2.txt

+#SBATCH --cpus-per-task=5

+#SBATCH --ntasks=4

+#SBATCH --gres=gpu:1

+#SBATCH --mem=100000

+#SBATCH -N 1

+

+

+./batch_file_deal3_train_method.sh

+

+

+# Cancel the current job to release the node

+scancel "$SLURM_JOB_ID"

\ No newline at end of file

diff --git a/OpenOOD/bash_allocation2.slurm b/OpenOOD/bash_allocation2.slurm

new file mode 100644

index 0000000000000000000000000000000000000000..a390c848e9ffae0a2200e8299c8db24cc3349ad7

--- /dev/null

+++ b/OpenOOD/bash_allocation2.slurm

@@ -0,0 +1,16 @@

+#!/bin/bash

+#SBATCH --job-name=OOD_post_method

+#SBATCH --output=output_OOD_post_method.txt

+#SBATCH --error=error_OOD_post_method.txt

+#SBATCH --cpus-per-task=5

+#SBATCH --ntasks=4

+#SBATCH --gres=gpu:1

+#SBATCH --mem=100000

+#SBATCH -N 1

+

+

+python batch_file_deal_post_method_Ours_Notline.py

+

+

+# Cancel the current job to release the node

+scancel "$SLURM_JOB_ID"

\ No newline at end of file

diff --git a/OpenOOD/batch_file_deal2.py b/OpenOOD/batch_file_deal2.py

new file mode 100644

index 0000000000000000000000000000000000000000..5563e61cd66cd094d472856f407161d971806280

--- /dev/null

+++ b/OpenOOD/batch_file_deal2.py

@@ -0,0 +1,120 @@

+import subprocess

+import os

+

+# Set the PYTHONPATH environment variable

+pythonpath = '.'

+if 'PYTHONPATH' in os.environ:

+    pythonpath += os.pathsep + os.environ['PYTHONPATH']

+os.environ['PYTHONPATH'] = pythonpath

+

+ROOT = "/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD"

+

+

+run_file = ROOT+"/eval_ood.py"

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=gram",\

+ "--batch-size=20",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+# subprocess.run(["python", run_file, "--id-data=bronze2", \

+# "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+# "--postprocessor=gradnorm",\

+# "--batch-size=20",\

+# "--save-score",\

+# "--save-csv",\

+# ])

+

+

+

+# subprocess.run(["python", run_file, "--id-data=bronze2", \

+# "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+# "--postprocessor=react",\

+# "--batch-size=100",\

+# "--save-score",\

+# "--save-csv",\

+# ])

+

+# subprocess.run(["python", run_file, "--id-data=bronze2", \

+# "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+# "--postprocessor=mls",\

+# "--batch-size=100",\

+# "--save-score",\

+# "--save-csv",\

+# ])

+

+# subprocess.run(["python", run_file, "--id-data=bronze2", \

+# "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+# "--postprocessor=klm",\

+# "--batch-size=100",\

+# "--save-score",\

+# "--save-csv",\

+# ])

+

+# subprocess.run(["python", run_file, "--id-data=bronze2", \

+# "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+# "--postprocessor=vim",\

+# "--batch-size=100",\

+# "--save-score",\

+# "--save-csv",\

+# ])

+

+# subprocess.run(["python", run_file, "--id-data=bronze2", \

+# "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+# "--postprocessor=knn",\

+# "--batch-size=100",\

+# "--save-score",\

+# "--save-csv",\

+# ])

+

+# subprocess.run(["python", run_file, "--id-data=bronze2", \

+# "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+# "--postprocessor=dice",\

+# "--batch-size=100",\

+# "--save-score",\

+# "--save-csv",\

+# ])

+

+

+

+

+

+

+# run_file = ROOT+"/main.py"

+# subprocess.run(["python", run_file, "--config configs/datasets/cifar10/cifar10.yml \

+# configs/datasets/cifar10/cifar10_ood.yml \

+# configs/networks/resnet18_32x32.yml \

+# configs/pipelines/test/test_ood.yml \

+# configs/preprocessors/base_preprocessor.yml \

+# configs/postprocessors/knn.yml ", "--num_workers=8",

+# "--network.checkpoint='results/cifar10_resnet18_32x32_base_e100_lr0.1_default/s0/best.ckpt'",

+# "--mark=0"])

+

+

+# cmd = "python main.py \

+# --config configs/datasets/cifar10/cifar10.yml \

+# configs/datasets/cifar10/cifar10_ood.yml \

+# configs/networks/resnet18_32x32.yml \

+# configs/pipelines/test/test_ood.yml \

+# configs/preprocessors/base_preprocessor.yml \

+# configs/postprocessors/knn.yml \

+# --num_workers 8 \

+# --network.checkpoint 'results/cifar10_resnet18_32x32_base_e100_lr0.1_default/s0/best.ckpt' \

+# --mark 0"

+

+# subprocess.run(cmd, shell=True, cwd=ROOT)

+

+# # subprocess.run(["python", run_file, "--model_type=test"])

+# # subprocess.run(["python", run_file, "--model_type=stage1"])

+# # subprocess.run(["python", run_file, "--model_type=stage2"])

+# # subprocess.run(["python", run_file, "--model_type=stage2_searching"])

+

+# path = ROOT

+# # cmd = 'python -m torch.distributed.launch --nproc_per_node 4 texture_countour_double_GCN.py --model_type=stage2'

+# # subprocess.run(cmd, shell=True, cwd=path)

+# cmd = 'python texture_countour_double_GCN.py --model_type=stage2_searching'

+# subprocess.run(cmd, shell=True, cwd=path)

\ No newline at end of file
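
The `PYTHONPATH` setup at the top of this script changes `os.environ` for the whole process. A possible alternative, sketched below, is to build a separate environment mapping and hand it to the child process only. This is not part of the commit: `env_with_pythonpath` is a hypothetical helper name, and treating an empty `PYTHONPATH` as unset is an assumption that differs slightly from the script above.

```python
import os


def env_with_pythonpath(entry=".", base_env=None):
    """Return a copy of the environment with `entry` prepended to PYTHONPATH.

    `base_env` defaults to the current process environment; the input
    mapping is never modified.
    """
    env = dict(os.environ if base_env is None else base_env)
    existing = env.get("PYTHONPATH")
    # Assumption: an empty PYTHONPATH is treated the same as a missing one.
    env["PYTHONPATH"] = entry + os.pathsep + existing if existing else entry
    return env
```

The result could then be passed as `subprocess.run(cmd, env=env_with_pythonpath())`, which leaves the parent process environment untouched.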

diff --git a/OpenOOD/batch_file_deal3_train_method.sh b/OpenOOD/batch_file_deal3_train_method.sh

new file mode 100644

index 0000000000000000000000000000000000000000..b46f68f0b0053fbd2a08ab09e0c784bc79266e13

--- /dev/null

+++ b/OpenOOD/batch_file_deal3_train_method.sh

@@ -0,0 +1,32 @@

+#!/bin/bash

+SEED=0

+# train

+# python main.py \

+# --config configs/datasets/bronze2/bronze2.yml \

+# configs/networks/opengan.yml \

+# configs/pipelines/train/train_opengan.yml \

+# configs/preprocessors/base_preprocessor.yml \

+# configs/postprocessors/opengan.yml \

+# --dataset.feat_root ./results/bronze2_OursBronze2_feat_extract_opengan_default/s${SEED} \

+# --network.backbone.pretrained True \

+# --network.backbone.checkpoint ./results/pretrained_weights/resnet50_imagenet1k_v1.pth \

+# --optimizer.num_epochs 90 \

+# --seed ${SEED} \

+# --proj_ROOT /home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD

+

+# test

+SCHEME="ood" # "ood" or "fsood"

+python main.py \

+ --config configs/datasets/bronze2/bronze2.yml \

+ configs/datasets/bronze2/bronze2_ood.yml \

+ configs/networks/opengan.yml \

+ configs/pipelines/test/test_opengan.yml \

+ configs/preprocessors/base_preprocessor.yml \

+ configs/postprocessors/opengan.yml \

+ --num_workers 8 \

+ --network.backbone.name OursBronze2 \

+ --network.backbone.pretrained True \

+ --network.backbone.checkpoint ./results/bronze2_ours_resnet50_415_NotLine_train/s0/model_state_dict_epoch90.pth \

+ --evaluator.ood_scheme ${SCHEME} \

+ --seed ${SEED} \

+ --proj_ROOT /home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD
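
The `main.py` invocation above is a list of config files followed by dotted key/value overrides. If it were driven from Python like the other batch scripts in this change, the argument list could be assembled as in the sketch below. `build_main_command` is a hypothetical helper, and `sys.executable` is an assumed stand-in for `python`; the flag layout mirrors the shell command above.

```python
import sys


def build_main_command(configs, overrides):
    """Build the argv list for main.py.

    configs   -- ordered list of YAML config paths passed after --config
    overrides -- mapping of dotted option names to values
    """
    cmd = [sys.executable, "main.py", "--config", *configs]
    for key, value in overrides.items():
        # Each override becomes "--key value", as in the shell script.
        cmd += [f"--{key}", str(value)]
    return cmd
```

For example, `build_main_command(["configs/datasets/bronze2/bronze2.yml"], {"seed": 0})` yields a list that can be passed to `subprocess.run` without `shell=True`.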

diff --git a/OpenOOD/batch_file_deal_post_method_Ours_Notline.py b/OpenOOD/batch_file_deal_post_method_Ours_Notline.py

new file mode 100644

index 0000000000000000000000000000000000000000..45de8708212a6f25fbf078cd5e989f223d3256b3

--- /dev/null

+++ b/OpenOOD/batch_file_deal_post_method_Ours_Notline.py

@@ -0,0 +1,128 @@

+import subprocess

+import os

+

+# Set the PYTHONPATH environment variable

+pythonpath = '.'

+if 'PYTHONPATH' in os.environ:

+    pythonpath += os.pathsep + os.environ['PYTHONPATH']

+os.environ['PYTHONPATH'] = pythonpath

+

+ROOT = "/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD"

+

+

+run_file = ROOT+"/eval_ood.py"

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=openmax",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=msp",\

+ "--batch-size=200",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=odin",\

+ "--batch-size=10",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=mds",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=ebo",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=gram",\

+ "--batch-size=20",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=gradnorm",\

+ "--batch-size=20",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=react",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=mls",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=klm",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=vim",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=knn",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=dice",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

diff --git a/OpenOOD/batch_file_deal_post_method_p2pNet.py b/OpenOOD/batch_file_deal_post_method_p2pNet.py

new file mode 100644

index 0000000000000000000000000000000000000000..8afbff316d0b408761f63123217efb4ed2f98c75

--- /dev/null

+++ b/OpenOOD/batch_file_deal_post_method_p2pNet.py

@@ -0,0 +1,128 @@

+import subprocess

+import os

+

+# Set the PYTHONPATH environment variable

+pythonpath = '.'

+if 'PYTHONPATH' in os.environ:

+    pythonpath += os.pathsep + os.environ['PYTHONPATH']

+os.environ['PYTHONPATH'] = pythonpath

+

+ROOT = "/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD"

+

+

+run_file = ROOT+"/eval_ood.py"

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=openmax",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=msp",\

+ "--batch-size=200",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=odin",\

+ "--batch-size=10",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=mds",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=ebo",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=gram",\

+ "--batch-size=20",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=gradnorm",\

+ "--batch-size=20",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=react",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=mls",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=klm",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=vim",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=knn",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze_2_p2pnet_415", \

+ "--postprocessor=dice",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+

diff --git a/OpenOOD/batch_file_deal_vim_ablation.py b/OpenOOD/batch_file_deal_vim_ablation.py

new file mode 100644

index 0000000000000000000000000000000000000000..617285d6c0c78280e11c55505d6a24da2c834029

--- /dev/null

+++ b/OpenOOD/batch_file_deal_vim_ablation.py

@@ -0,0 +1,26 @@

+import subprocess

+import os

+

+# Set the PYTHONPATH environment variable

+pythonpath = '.'

+if 'PYTHONPATH' in os.environ:

+    pythonpath += os.pathsep + os.environ['PYTHONPATH']

+os.environ['PYTHONPATH'] = pythonpath

+

+ROOT = "/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD"

+

+

+run_file = ROOT+"/eval_ood.py"

+

+

+

+subprocess.run(["python", run_file, "--id-data=bronze2", \

+ "--root=/home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/results/bronze2_ours_resnet50_415_NotLine_train", \

+ "--postprocessor=vim",\

+ "--batch-size=100",\

+ "--save-score",\

+ "--save-csv",\

+ ])

+

+

+# subprocess.r

\ No newline at end of file

diff --git a/OpenOOD/codespell_ignored.txt b/OpenOOD/codespell_ignored.txt

new file mode 100644

index 0000000000000000000000000000000000000000..defc26379052b4f34721a4ff1bf2ada915e9858b

--- /dev/null

+++ b/OpenOOD/codespell_ignored.txt

@@ -0,0 +1,5 @@

+ans

+fpr

+als

+hist

+tha

diff --git a/OpenOOD/configs/datasets/aircraft/aircraft.yml b/OpenOOD/configs/datasets/aircraft/aircraft.yml

new file mode 100644

index 0000000000000000000000000000000000000000..a2be9e29cf86ba2dfc8745aeca0d8220c4b75700

--- /dev/null

+++ b/OpenOOD/configs/datasets/aircraft/aircraft.yml

@@ -0,0 +1,33 @@

+dataset:

+  name: aircraft

+  num_classes: 50

+  pre_size: 512

+  image_size: 448

+

+  interpolation: bilinear

+  normalization_type: aircraft

+

+  num_workers: '@{num_workers}'

+  num_gpus: '@{num_gpus}'

+  num_machines: '@{num_machines}'

+

+  split_names: [train, val, test]

+

+  train:

+    dataset_class: ImglistDataset

+    data_dir: ./data/images_largescale/

+    imglist_pth: ./data/benchmark_imglist/aircraft/train_id.txt

+    batch_size: 32

+    shuffle: True

+  val:

+    dataset_class: ImglistDataset

+    data_dir: ./data/images_largescale/

+    imglist_pth: ./data/benchmark_imglist/aircraft/val_id.txt

+    batch_size: 200

+    shuffle: False

+  test:

+    dataset_class: ImglistDataset

+    data_dir: ./data/images_largescale/

+    imglist_pth: ./data/benchmark_imglist/aircraft/test_id.txt

+    batch_size: 200

+    shuffle: False

diff --git a/OpenOOD/configs/datasets/aircraft/aircraft_oe.yml b/OpenOOD/configs/datasets/aircraft/aircraft_oe.yml

new file mode 100644

index 0000000000000000000000000000000000000000..644e4a6917382bee87dcdbd94ee804bfb0665189

--- /dev/null

+++ b/OpenOOD/configs/datasets/aircraft/aircraft_oe.yml

@@ -0,0 +1,12 @@

+name: aircraft_oe

+

+dataset:

+  name: aircraft_oe

+  split_names: [train, oe, val, test]

+  oe:

+    dataset_class: ImglistDataset

+    data_dir: ./data/images_largescale/

+    imglist_pth: ./data/benchmark_imglist/aircraft/train_oe.txt

+    batch_size: 32

+    shuffle: True

+    interpolation: bilinear

diff --git a/OpenOOD/configs/datasets/aircraft/aircraft_ood.yml b/OpenOOD/configs/datasets/aircraft/aircraft_ood.yml

new file mode 100644

index 0000000000000000000000000000000000000000..5532b0b739f60c2cfdafc5a9a2fcf6e3651c9de7

--- /dev/null

+++ b/OpenOOD/configs/datasets/aircraft/aircraft_ood.yml

@@ -0,0 +1,28 @@

+ood_dataset:

+  name: aircraft_ood

+  num_classes: 50

+

+  dataset_class: ImglistDataset

+  interpolation: bilinear

+  batch_size: 64

+  shuffle: False

+

+  pre_size: 512

+  image_size: 448

+  num_workers: '@{num_workers}'

+  num_gpus: '@{num_gpus}'

+  num_machines: '@{num_machines}'

+  split_names: [val, nearood, farood]

+  val:

+    data_dir: ./data/images_largescale/

+    imglist_pth: ./data/benchmark_imglist/aircraft/val_ood.txt

+  nearood:

+    datasets: [hardood]

+    hard:

+      data_dir: ./data/images_largescale/

+      imglist_pth: ./data/benchmark_imglist/aircraft/test_ood_hard.txt

+  farood:

+    datasets: [easyood]

+    easy:

+      data_dir: ./data/images_largescale/

+      imglist_pth: ./data/benchmark_imglist/aircraft/test_ood_easy.txt

diff --git a/OpenOOD/configs/datasets/bronze2/bronze2.yml b/OpenOOD/configs/datasets/bronze2/bronze2.yml

new file mode 100644

index 0000000000000000000000000000000000000000..0c7341d33ee417ba3345495439d09196e79f3ea9

--- /dev/null

+++ b/OpenOOD/configs/datasets/bronze2/bronze2.yml

@@ -0,0 +1,36 @@

+dataset:

+  name: bronze2

+  num_classes: 11

+  pre_size: 420

+  image_size: 400

+

+  interpolation: bilinear

+  normalization_type: imagenet

+

+  num_workers: '@{num_workers}'

+  num_gpus: '@{num_gpus}'

+  num_machines: '@{num_machines}'

+

+  split_names: [train, val, test]

+

+  train:

+    dataset_class: Bronze2ExcelDataset

+    data_dir: /data/bronze_ID_and_OOD/bronze2NotLine/image_not_line

+    imglist_pth: /data/bronze_ID_and_OOD/bronze2NotLine/not_line_ding_gui_train_val_test/ding_gui_not_line_train.xlsx

+    xml_path: /data/bronze_ID_and_OOD/bronze2NotLine/xmls

+    batch_size: 128

+    shuffle: True

+  val:

+    dataset_class: Bronze2ExcelDataset

+    data_dir: /data/bronze_ID_and_OOD/bronze2NotLine/image_not_line

+    imglist_pth: /data/bronze_ID_and_OOD/bronze2NotLine/not_line_ding_gui_train_val_test/ding_gui_not_line_val.xlsx

+    xml_path: /data/bronze_ID_and_OOD/bronze2NotLine/xmls

+    batch_size: 128

+    shuffle: False

+  test:

+    dataset_class: Bronze2ExcelDataset

+    data_dir: /data/bronze_ID_and_OOD/bronze2NotLine/image_not_line

+    imglist_pth: /data/bronze_ID_and_OOD/bronze2NotLine/not_line_ding_gui_train_val_test/ding_gui_not_line_test.xlsx

+    xml_path: /data/bronze_ID_and_OOD/bronze2NotLine/xmls

+    batch_size: 128

+    shuffle: False

diff --git a/OpenOOD/configs/datasets/bronze2/bronze2_ood.yml b/OpenOOD/configs/datasets/bronze2/bronze2_ood.yml

new file mode 100644

index 0000000000000000000000000000000000000000..9260bd73bd6548291010ebd5aaec4412319edba4

--- /dev/null

+++ b/OpenOOD/configs/datasets/bronze2/bronze2_ood.yml

@@ -0,0 +1,62 @@

+ood_dataset:

+ name: bronze2_ood

+ num_classes: 11

+

+ dataset_class: ImglistDataset

+ interpolation: bilinear

+ batch_size: 32

+ shuffle: False

+

+ pre_size: 256

+ image_size: 224

+ num_workers: '@{num_workers}'

+ num_gpus: '@{num_gpus}'

+ num_machines: '@{num_machines}'

+ split_names: [val, nearood, midood, farood]

+ val:

+ data_dir: /home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/data/images_largescale/

+ imglist_pth: /home/zhourixin/OOD_Folder/CODE/other_methods/openOOD_code/OpenOOD/data/benchmark_imglist/imagenet/val_openimage_o.txt

+

+ nearood: